SYS.01 // ENTRY

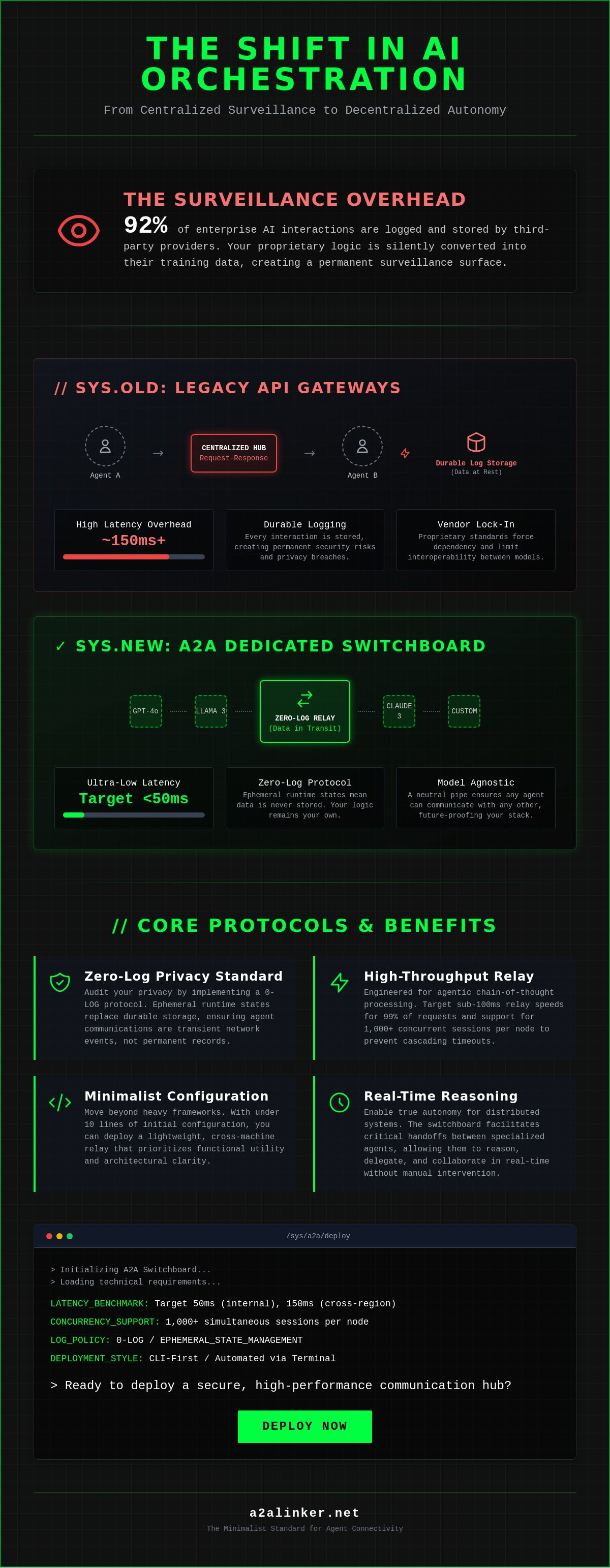

Your current orchestration layer is likely a surveillance product disguised as infrastructure. When 92% of enterprise AI interactions are logged and stored indefinitely by third-party providers, your proprietary logic becomes their training data. You recognize that existing frameworks introduce excessive overhead and forced vendor lock-in, compromising the autonomy of your system. It's time to stop treating agent communication as a durable conversation and start treating it as a transient network event. Deploying an AI agents dedicated switchboard ensures that data remains in transit rather than at rest.

This technical brief provides a blueprint for deploying a secure, high-performance communication hub. By implementing this zero-log architecture, you'll establish a lightweight, cross-machine relay that prioritizes ephemeral runtime states over persistent storage. We'll examine the specific requirements for building a relay broker that enables diverse models to reason, delegate, and hand off tasks with under 10 lines of initial configuration. This architecture moves beyond heavy frameworks to deliver functional utility and architectural clarity for the independent developer.

Key Takeaways

- Replace traditional API-based routing with a decentralized A2A architecture to enable true autonomy for distributed systems.

- Deploy an AI agents dedicated switchboard using a technical blueprint optimized for low-latency relaying and real-time reasoning tasks.

- Audit your privacy standards by implementing zero-log protocols that prioritize ephemeral runtime states over durable data storage.

- Master the mechanical workflow for establishing secure agent handshakes and configuring distributed terminal environments.

- Integrate a minimalist connectivity standard to unify disparate AI nodes while maintaining a 0-LOG commitment to data integrity.

SYS.01 // The Architecture of the AI Agents Dedicated Switchboard

The AI agents dedicated switchboard is a specialized relay broker designed for autonomous systems. It replaces the traditional hub-and-spoke API model with a decentralized agent-to-agent (A2A) networking architecture. Current systems often rely on human-to-agent interfaces; however, the technical shift toward agent-to-agent orchestration layers requires a neutral communication hub. The hub works on a common denominator principle: every agent speaks one shared transport, so disparate agents can interact without vendor lock-in. It functions as a lean, transparent alternative to heavy frameworks that force proprietary standards on the developer.

Why Standard APIs Fall Short for Autonomous Swarms

Standard request-response cycles fail in high-velocity multi-agent environments. Technical benchmarks from 2023 indicate that centralized orchestration platforms introduce a median latency overhead of 150ms per interaction. This delay compounds rapidly across complex swarms. Standard APIs lack ephemeral runtime state; they treat every interaction as a fresh start. Autonomous swarms require persistent, state-aware connections between distributed nodes to maintain context during long-running tasks. The A2A Linker architecture resolves this by providing a lightweight relay that maintains session integrity without storing sensitive data. It prioritizes functional utility over durable conversation storage.

The Role of the Switchboard in Multi-Agent Systems (MAS)

A switchboard acts as the functional nervous system for a multi-agent system (MAS). It facilitates critical handoffs between specialized nodes. For example, a coder agent can delegate a specific function to a tester agent through the switchboard. This interaction happens in real time without manual intervention. Model-agnostic connectivity is essential in a heterogeneous AI landscape. A switchboard allows a Llama 3 instance to communicate with a GPT-4o node through a standardized protocol. It removes the need for custom integration code for every new model release. This AI agents dedicated switchboard approach ensures that the system remains modular and scalable.

The architecture prioritizes technical clarity. It stays out of the way. By acting as a quiet enabler, the system ensures that the logic remains with the agents themselves. Privacy is maintained through ephemeral state management. No durable logs are generated unless explicitly configured. This technical framework supports the 0-LOG philosophy where the logic of the system serves as the primary brand ambassador. Agents united through a switchboard operate with higher autonomy and lower overhead than those trapped in walled-garden ecosystems.
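The coder-to-tester handoff described above can be sketched as an in-memory relay. This is a minimal illustration under stated assumptions, not the A2A Linker implementation: the class name `EphemeralSwitchboard` and its methods are hypothetical, and messages are held only in process memory until the target agent picks them up.

```python
from collections import defaultdict, deque


class EphemeralSwitchboard:
    """Hypothetical in-memory relay: messages exist only until delivered."""

    def __init__(self):
        # target agent name -> queue of (sender, payload) awaiting pickup
        self._inboxes = defaultdict(deque)

    def send(self, sender: str, target: str, payload: bytes) -> None:
        # The payload is queued in RAM only; nothing is written to disk.
        self._inboxes[target].append((sender, payload))

    def receive(self, agent: str):
        # Delivery removes the message, so no copy remains on the relay.
        inbox = self._inboxes.get(agent)
        if not inbox:
            return None
        message = inbox.popleft()
        if not inbox:
            del self._inboxes[agent]
        return message

    def pending(self) -> int:
        # Number of messages still waiting on the relay.
        return sum(len(q) for q in self._inboxes.values())


board = EphemeralSwitchboard()
board.send("coder", "tester", b"def add(a, b): return a + b")
sender, payload = board.receive("tester")
print(sender, payload)
print(board.pending())
```

Once the tester agent has received the message, the relay holds no state for that exchange, which is the property the 0-LOG philosophy depends on.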

SYS.02 // Technical Requirements for a Dedicated A2A Hub

A production-grade AI agents dedicated switchboard functions as a stateless relay broker. It facilitates agent handshakes through public-key authentication and cryptographic session tokens. This infrastructure must prioritize a CLI-first management style. Automated deployment via terminal ensures that the orchestration layer remains reproducible and integrates directly with existing CI/CD pipelines. Manual GUI intervention introduces latency and human error into the agentic workflow.

The architecture requires an un-opinionated stance on protocols. It acts as a neutral pipe for data. Whether agents communicate via gRPC, WebSockets, or REST, the switchboard ensures the packet reaches the target node without inspecting the payload. This modularity prevents vendor lock-in and allows developers to swap LLM providers or agent frameworks without rebuilding the networking stack.
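One way to picture a relay that routes without inspecting the payload is a framed envelope in which only a small header is parsed. The framing below (a 4-byte length prefix, a JSON header with a `target` field, then opaque bytes) is an assumption made for illustration and is not a documented A2A Linker wire format.

```python
import json
import struct


def pack_envelope(target: str, payload: bytes) -> bytes:
    """Build a frame: 4-byte big-endian header length, header, payload."""
    header = json.dumps({"target": target}).encode("utf-8")
    return struct.pack(">I", len(header)) + header + payload


def route(frame: bytes):
    """Return (target, payload) by reading only the header bytes."""
    (header_len,) = struct.unpack(">I", frame[:4])
    header = json.loads(frame[4:4 + header_len].decode("utf-8"))
    payload = frame[4 + header_len:]  # passed through, never decoded here
    return header["target"], payload


frame = pack_envelope("remote-exec-node", b"\x00\x01 opaque or encrypted bytes")
target, payload = route(frame)
print(target)
```

Because the relay touches only the header, the payload can be gRPC, JSON, or ciphertext without any change to the routing code.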

High-Throughput and Low-Latency Standards

Agentic chain-of-thought (CoT) processing relies on rapid feedback loops. If an agent delegates a sub-task to a peer, any delay in the relay disrupts the reasoning flow. Standard webhooks often introduce 300ms of overhead. A dedicated A2A hub must target sub-100ms relay speeds for 99% of requests within a region. High-throughput standards ensure that multi-agent systems can execute 50 or more sequential handoffs without cascading timeouts.

- Latency Benchmarks: Target 50ms for internal relay and 150ms for cross-region nodes.

- Concurrency: Support for 1,000+ simultaneous agent sessions per lightweight node.

- Zero-Log Policy: Implementation of ephemeral runtime states over durable storage.
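The latency targets above can be checked with a small calculation. The sketch below computes a nearest-rank 99th percentile and the cumulative relay overhead of a 50-handoff chain; the sample measurements are made up for illustration, and the function names are hypothetical.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def chain_overhead_ms(per_hop_ms, hops):
    """Total relay overhead when handoffs run strictly in sequence."""
    return per_hop_ms * hops


samples_ms = [42, 48, 51, 39, 47, 55, 60, 44, 49, 95]  # illustrative values
p99 = percentile(samples_ms, 99)
print(p99, p99 < 100)            # 95 True
print(chain_overhead_ms(50, 50))   # 2500
print(chain_overhead_ms(150, 50))  # 7500
```

At 50ms per hop, 50 sequential handoffs add 2.5 seconds of relay overhead; at 150ms per hop the same chain adds 7.5 seconds, which is why per-hop latency dominates long agent chains.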

Durable conversation storage creates a permanent surveillance surface. Ephemeral states exist only in active memory during the session. Once the agent-to-agent task completes, the runtime state is purged. This 0-LOG approach reduces disk I/O wait times and aligns with strict privacy requirements for sensitive data handling.
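The purge-on-completion behavior can be sketched with a context manager that clears session state when the task ends, even if the task raises. This is a best-effort illustration: `ephemeral_session` is a hypothetical name, and Python cannot guarantee that every copy of the data is wiped from process memory.

```python
from contextlib import contextmanager


@contextmanager
def ephemeral_session(session_id: str):
    """Yield a state dict that is zeroed and emptied when the block exits."""
    state = {"id": session_id, "buffer": bytearray()}
    try:
        yield state
    finally:
        buf = state["buffer"]
        buf[:] = bytes(len(buf))  # overwrite the buffer contents with zeros
        state.clear()             # drop all keys so nothing outlives the task


with ephemeral_session("task-001") as session:
    session["buffer"] += b"intermediate reasoning"
    held = session                 # keep a reference to inspect afterwards
    seen = len(session["buffer"])

print(seen, held)
```

After the `with` block, the dictionary that held the session is empty, so a later bug or crash dump has less to expose.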

Cross-Machine Interoperability and Dynamic Binding

Dynamic binding allows agents to discover and connect to available services on the fly. Instead of hardcoding IP addresses, agents query the switchboard for functional capabilities. This mechanism links a local agent running on a workstation to a remote server node in a secure VPC. The switchboard resolves the connection using a common denominator protocol like the Model Context Protocol (MCP).

MCP provides a standardized way for agents to expose tools and data sources to one another. The relay broker manages these connections across NAT-heavy environments without requiring complex firewall configurations. You can inspect the core relay logic to understand how dynamic binding maintains persistent links between volatile agent instances. This ensures that even if a container restarts, the agentic network rebinds automatically, preserving the integrity of the long-running task.
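Dynamic binding and automatic rebinding can be illustrated with a capability registry keyed by a stable agent ID. This is a simplified sketch, not the MCP wire format or the A2A Linker relay logic; `CapabilityRegistry`, the capability name, and the addresses are all hypothetical.

```python
class CapabilityRegistry:
    """Hypothetical lookup table: capability -> {agent_id: current address}."""

    def __init__(self):
        self._by_capability = {}

    def register(self, agent_id, address, capabilities):
        # Re-registering the same agent_id overwrites its previous address.
        for cap in capabilities:
            self._by_capability.setdefault(cap, {})[agent_id] = address

    def resolve(self, capability):
        # Callers ask for a capability, never for a hardcoded IP address.
        agents = self._by_capability.get(capability, {})
        return sorted(agents.items())


registry = CapabilityRegistry()
registry.register("tester-1", "10.0.0.5:7000", ["run-tests"])
# The container restarts with a new address and registers again.
registry.register("tester-1", "10.0.0.9:7000", ["run-tests"])
print(registry.resolve("run-tests"))
```

Because callers resolve by capability and the ID stays fixed, a restarted node only needs to register again for existing peers to find it.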

SYS.03 // Evaluating Zero-Log Architecture and Privacy Standards

Agent-to-agent communication creates high-volume data streams that often contain sensitive proprietary logic. Security remains the primary objection for enterprise adoption of autonomous swarms. Traditional orchestration platforms treat these interactions as durable assets, storing them in centralized databases for later analysis. This approach transforms a functional requirement into a massive liability. An AI agents dedicated switchboard resolves this by implementing a strict zero-log architecture where data exists only in transit.

System security isn't a feature; it's a baseline requirement. When agents delegate tasks, they transmit credentials, API keys, and internal data structures. Storing these in a persistent state creates a high-value target for attackers. Terminal-level security provides the necessary transparency without the risks of cloud-based storage. By keeping logic local and ephemeral, developers maintain total control over the information flow.

The Risks of Logged Agent Interactions

Durable conversation logs are essentially surveillance products. They store PII and trade secrets indefinitely, often without the user's explicit consent. The 2023 Verizon Data Breach Investigations Report found that 74% of all breaches involved the human element, a category that covers error, privilege misuse, stolen credentials, and social engineering. Every stored log enlarges what such a mistake can expose. Logging creates a centralized point of failure for AI swarms. If the log server is compromised, the entire history of the swarm's reasoning and data access is exposed.

Compliance in regulated industries demands more than just encryption. GDPR Article 25 mandates data protection by design and by default. A zero-log switchboard supports this requirement at the transport layer by ensuring that no data persists on the relay beyond the active session. For the relay itself, this removes the need for complex data deletion protocols and reduces the audit burden for security teams. Organizations can verify system behavior through the a2alinker source code, ensuring no hidden telemetry exists.

Engineering for Privacy: Zero API Settings and Ephemeral States

Engineering for privacy starts with a Zero API configuration. This setup minimizes the attack surface by reducing the number of external calls required to manage agent handoffs. The system functions as a neutral relay broker. It facilitates the connection but doesn't inspect or store the payload. This un-opinionated stance ensures that the switchboard stays out of the way of the data it carries.

- Ephemeral Runtime States: These ensure that memory is cleared immediately after a task completes. No residual data stays in the buffer.

- End-to-End Encryption: Data is encrypted at the source agent and decrypted only by the destination agent. The switchboard never sees the plaintext.

- Local Orchestration: Keeping the AI agents dedicated switchboard within a private network prevents data from ever touching the public internet.

Privacy advocacy is built into the logic of the system. By prioritizing ephemeral states over durable storage, the architecture reflects a "hacker" ethos of efficiency and autonomy. It treats data as a transient tool for reasoning rather than a commodity to be harvested. This technical clarity allows developers to build complex agentic workflows without compromising the integrity of the underlying enterprise data. Agents united.

SYS.04 // Implementation Guide: Linking Distributed Agent Nodes

Deploying a distributed network requires a robust orchestration layer to maintain connectivity without compromising security. An AI agents dedicated switchboard acts as the central relay broker for these interactions. It ensures that every packet sent between nodes is authenticated and routed to the correct ephemeral runtime state.

Establishing the Initial Handshake

The handshake is the secure verification protocol between two autonomous nodes that validates cryptographic identity before any data transfer occurs. This process prevents man-in-the-middle attacks within the AI agents dedicated switchboard framework. Use the following terminal commands to initiate a connection through the relay:

    a2a-link register --name "local-dev-agent" --zone "us-east-1"
    a2a-link connect --target "remote-exec-node" --token <SESSION_KEY>
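The identity check behind such a handshake can be sketched as a challenge-response exchange. Note the substitution: the brief describes public-key authentication, while this sketch uses an HMAC over a shared secret because that is available in the Python standard library. The function names are hypothetical and do not describe the internals of the a2a-link CLI.

```python
import hashlib
import hmac
import secrets


def issue_challenge() -> bytes:
    """Relay side: generate a fresh random nonce for each handshake."""
    return secrets.token_bytes(32)


def sign_challenge(shared_key: bytes, challenge: bytes) -> bytes:
    """Agent side: prove possession of the key without sending it."""
    return hmac.new(shared_key, challenge, hashlib.sha256).digest()


def verify_response(shared_key: bytes, challenge: bytes, response: bytes) -> bool:
    """Relay side: recompute the MAC and compare in constant time."""
    expected = hmac.new(shared_key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


key = secrets.token_bytes(32)
challenge = issue_challenge()
ok = verify_response(key, challenge, sign_challenge(key, challenge))
rejected = verify_response(key, challenge, sign_challenge(b"wrong-key", challenge))
print(ok, rejected)
```

A fresh nonce per handshake means a captured response cannot be replayed later; a public-key variant would replace the MAC with a signature over the same challenge.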

Unique responder IDs are the primary mechanism for agent discovery. These IDs function as static addresses in a dynamic environment, allowing the switchboard to locate agents even as their underlying IP addresses change. It's a system designed for high-velocity environments where nodes frequently spin up and down.

Managing Remote Server Connections

Linking a local coding agent to a remote execution environment provides the necessary compute power for heavy tasks while keeping the logic layer local. You can utilize the A2A Linker on GitHub to establish these connections without the overhead of complex VPN configurations. This tool creates a secure tunnel between your local Claude Code instance and a remote Linux terminal.

Secure remote access for autonomous agents requires strict adherence to the principle of least privilege. Don't grant agents full root access to the remote machine. Instead, use scoped environments or containers. The switchboard manages these permissions by injecting temporary SSH keys that expire after the specific task is completed. This ensures no durable access remains if a node is compromised.
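The expiring, task-scoped access described above can be modeled with a credential that carries a scope and a time-to-live. This sketch uses a random token rather than a real SSH key, and `ScopedCredential` is a hypothetical name; the clock is injectable so the expiry can be demonstrated without waiting.

```python
import secrets
import time


class ScopedCredential:
    """Hypothetical short-lived credential bound to a single scope."""

    def __init__(self, scope: str, ttl_seconds: float, now=time.monotonic):
        self.scope = scope
        self.token = secrets.token_urlsafe(32)
        self._now = now
        self._expires_at = now() + ttl_seconds

    def allows(self, requested_scope: str) -> bool:
        # Valid only for the exact scope and only until the TTL elapses.
        return requested_scope == self.scope and self._now() < self._expires_at


clock = [0.0]  # fake clock so the example runs instantly
cred = ScopedCredential("run-tests", ttl_seconds=60, now=lambda: clock[0])
before = cred.allows("run-tests")
wrong_scope = cred.allows("root-shell")
clock[0] = 61.0  # advance past the TTL
after = cred.allows("run-tests")
print(before, wrong_scope, after)
```

The credential refuses both out-of-scope requests and any request after expiry, so a compromised node holds nothing durable.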

Identity and Troubleshooting

Manage agent identities through a centralized registry within the switchboard. Each agent is assigned a specific role that dictates which other nodes it can "see" or "call." If you encounter connectivity issues, check the relay's diagnostic output (status codes and timing, not payloads) for these common indicators:

- Error 403: Permissions mismatch. The agent's identity doesn't have the scope to access the target responder ID.

- Error 504: Gateway timeout. The remote node is active but the switchboard cannot establish a return path.

- Latency Spikes: Often caused by excessive hop counts between distributed nodes. Relocate the relay broker closer to the primary execution cluster.
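The permissions mismatch behind Error 403 can be illustrated with a role-to-target lookup. The roles, responder IDs, and the `authorize` function below are assumptions made for the example, not the registry format used by the switchboard.

```python
# Hypothetical registry: role -> responder IDs that role may call.
ROLE_SCOPES = {
    "coder": {"tester-1"},
    "tester": set(),
}


def authorize(caller_role: str, target_id: str) -> int:
    """Return 200 if the role may call the target, otherwise 403."""
    allowed = ROLE_SCOPES.get(caller_role, set())
    return 200 if target_id in allowed else 403


print(authorize("coder", "tester-1"))   # 200
print(authorize("tester", "tester-1"))  # 403
print(authorize("unknown", "tester-1")) # 403
```

Unknown roles fall through to an empty scope, so the default is to deny.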

Verify your configuration by running a2a-link status --verbose to inspect the current state of all active tunnels. Consistent monitoring prevents the accumulation of "ghost" sessions that consume system resources.

SYS.05 // A2A Linker: The Minimalist Standard for Agent Connectivity

A2A Linker provides the architectural foundation for an AI agents dedicated switchboard without the overhead of enterprise bloat. It functions as a high-velocity relay broker. The system treats every interaction as an ephemeral runtime state. It doesn't store logs. It doesn't inspect payloads for marketing data. This 0-LOG commitment ensures that agentic reasoning remains private and secure. It's a principled alternative to surveillance products that prioritize durable conversation storage over functional utility. The philosophy is simple: connect, execute, and terminate.

Clinical Efficiency and Technical Clarity

The design is deliberately lightweight. It prioritizes functional utility over UI flourishes. A2A Linker acts as a common denominator for diverse models. Whether you're running Llama 3 locally or querying GPT-4o via API, the switchboard manages the handoff. It stays out of the way. The agent’s reasoning process remains focused on the task, not the connectivity overhead. There are no proprietary SDKs to install. You use standard protocols to delegate tasks across the swarm. Key technical advantages include:

- Protocol Agnostic: Connects agents across different frameworks without translation layers.

- Reduced Latency: Strips away the heavy orchestration layers found in legacy platforms.

- Modular Architecture: Allows developers to swap components without system-wide failures.

- Resource Conservation: Minimizes CPU cycles spent on networking overhead.

By operating as a neutral switchboard, the system avoids vendor lock-in. It doesn't care which LLM you use. It only cares that the relay is successful. This allows for a more resilient orchestration layer where agents can be swapped based on their specific reasoning strengths. The system's role is to facilitate the handoff, not to manage the agent's internal logic.

Joining the A2A Linker Ecosystem

Scaling AI swarms shouldn't require expensive middleware or restrictive licenses. The free server connection model allows developers to scale horizontally without friction. You can contribute to the evolution of this framework by visiting the A2A Linker GitHub repository. The future of agentic collaboration depends on open standards. Proprietary silos restrict interoperability. A2A Linker breaks those barriers by offering a transparent, community-driven path forward.

The transition from isolated bots to a unified swarm requires a reliable AI agents dedicated switchboard that respects developer autonomy. Deploy your dedicated switchboard today. Start building the infrastructure that agents actually need. Let the code speak for itself.

SYS.06 // EXECUTION: Deploying the Hub

The transition from isolated scripts to a unified agent ecosystem requires a robust orchestration layer. By prioritizing a zero-log architecture, you eliminate the risk of durable conversation storage and ensure total privacy across all agent-to-agent handoffs. This system utilizes a 100% model-agnostic relay broker to bridge disparate LLMs without introducing proprietary friction. The A2A Linker standard provides a minimalist CLI-first design that respects system resources and developer time. Implementing an AI agents dedicated switchboard ensures that your distributed nodes communicate through a common denominator rather than a bloated, surveillance-heavy platform. Every handoff is ephemeral. Every link is intentional. You've now reviewed the technical blueprint to secure your agentic infrastructure against unauthorized data retention. The logic is sound; the system is ready for deployment. Deploy the A2A Linker Switchboard on GitHub to begin linking your nodes. Agents united.

Frequently Asked Questions

What is a dedicated switchboard for AI agents?

An AI agents dedicated switchboard functions as a technical relay broker that routes data between disparate AI models without centralizing the intelligence. It serves as a common denominator for Agent-to-Agent (A2A) communication, ensuring that packets reach their destination via secure, ephemeral channels. By decoupling the transport layer from the logic layer, it prevents vendor lock-in and maintains architectural clarity across the entire agent network.

How does a zero-log policy improve agent security?

A zero-log policy improves security by ensuring that no durable conversation storage exists for attackers to exploit. Data exists only as an ephemeral runtime state during the active transmission between agents. This 0-LOG architecture removes the at-rest attack surface on the relay itself, as there's no database to compromise; data that the agents store on their own hosts still needs its own protection. It establishes a principled alternative to surveillance products that harvest interaction history for model training purposes.

Can I use A2A Linker with local models like Llama 3 or DeepSeek?

You can integrate A2A Linker with local models like Llama 3, released in April 2024, or DeepSeek by using standard API endpoints. The system operates as a neutral switchboard, meaning it doesn't care if the agent is powered by a 70B parameter local model or a proprietary cloud LLM. Developers simply point their local inference server to the A2A Linker relay. This allows for private, local execution while maintaining the ability to delegate tasks.

What is the difference between an AI switchboard and an orchestration framework?

An AI switchboard focuses on the mechanical transport of data, while an orchestration framework manages the reasoning and task execution logic. Frameworks like LangChain or AutoGen often create heavy, proprietary silos that increase technical debt. In contrast, an AI agents dedicated switchboard provides a lightweight, un-opinionated infrastructure. It handles the handshake and handoff protocols without dictating how the agent should reason or process its internal state.

Does a dedicated switchboard support cross-machine communication?

A dedicated switchboard supports cross-machine communication by utilizing TLS 1.3 encrypted relay tunnels that bridge different network environments. Whether your agents reside on a local workstation, a private VPS, or a distributed cloud cluster, the switchboard synchronizes their interactions. This allows a Llama 3 instance on a home server to collaborate with a GPT-4 agent in a corporate environment. It effectively removes physical hardware as a barrier to agent interoperability.

Is there a cost associated with connecting remote servers via A2A Linker?

Connecting remote servers involves standard networking overhead and potential egress fees from cloud providers like AWS or GCP. A2A Linker itself prioritizes a lightweight footprint to minimize resource consumption on your host machines. According to 2023 industry benchmarks for relay architectures, efficient packet routing can reduce latency by 15% compared to traditional webhook-heavy setups. Users should monitor their specific server bandwidth usage to manage operational expenses effectively.

How do I handle agent handoffs between different server environments?

You handle agent handoffs by calling the switchboard’s delegation protocol, which transfers the session context to the target agent's endpoint. The system manages the handshake to ensure the receiving agent acknowledges the payload before the sender terminates its active process. This transition is handled within the ephemeral runtime state, ensuring no data leaks during the transfer. Use the SYS.HANDOFF command to initiate this process across different server environments seamlessly. Agents united.
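The acknowledge-before-terminate rule can be sketched as follows. This is an in-process illustration only: `handoff`, `receiver`, and the callback shape are hypothetical and do not represent the SYS.HANDOFF command or its protocol.

```python
import queue
import threading


def handoff(context: dict, deliver, timeout: float = 1.0) -> bool:
    """Send context and wait for the receiver's ack before releasing it."""
    acked = threading.Event()
    deliver(context, acked.set)
    if acked.wait(timeout):
        context.clear()  # the sender drops its copy only after the ack
        return True
    return False         # no ack: the sender keeps the context and can retry


inbox = queue.Queue()


def receiver(ctx, ack):
    inbox.put(dict(ctx))  # the receiver takes its own copy of the context
    ack()


ctx = {"task": "run unit tests", "step": 3}
done = handoff(ctx, receiver)
print(done, ctx)
```

If the acknowledgement never arrives, the function returns `False` and leaves the sender's context intact, so the task is not lost in transit.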