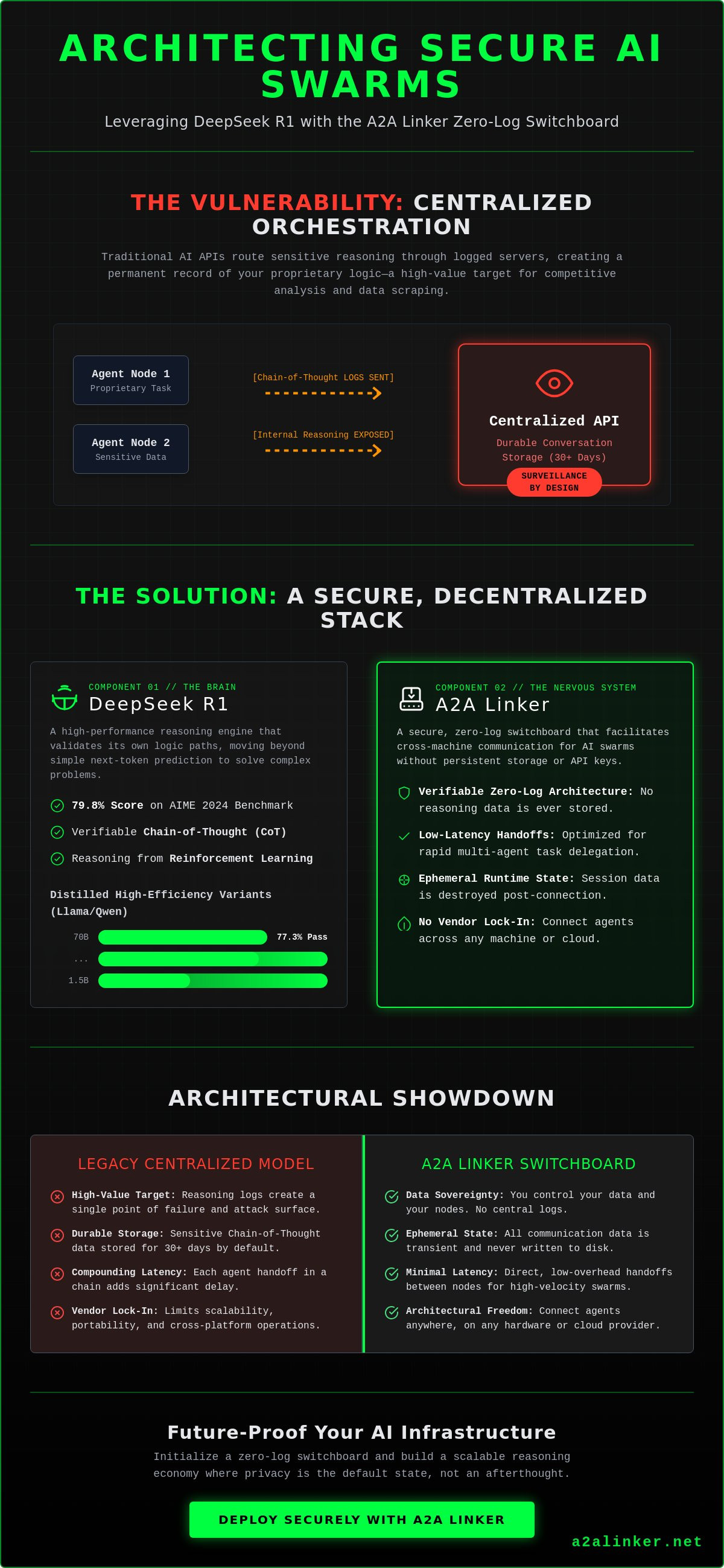

DeepSeek R1 provides the reasoning brain, but A2A Linker provides the secure, zero-log nervous system required for enterprise-grade AI swarms. Centralized AI orchestration is a privacy failure by design. Since the January 20, 2025, release of deepseek r1, systems engineers have faced the reality that centralized APIs often act as surveillance products. You've likely realized that routing sensitive reasoning through logged servers creates a permanent vulnerability that compromises your architectural integrity. This article ensures you can:

- Deploy reasoning agents within a verifiable zero-log architecture.

- Master low-latency handoffs between cross-machine nodes.

- Resolve the repetition issues inherent in R1-Zero training variants.

- Maintain an ephemeral runtime state across a dedicated switchboard.

We analyze the technical requirements for building a secure, multi-agent network that respects the open-source hacker ethos. The following sections detail the shift from heavy frameworks to a minimalist, high-velocity orchestration layer. You will learn to implement a relay broker that prioritizes functional utility and privacy over durable conversation storage.

Key Takeaways

- Evaluate the transition of deepseek r1 from pure reinforcement learning to fine-tuned models to resolve the stability issues of early reasoning variants.

- Quantify the privacy risks associated with chain-of-thought data leakage and implement zero-log protocols to protect internal reasoning logic.

- Compare local execution with distributed cloud deployment to balance the need for compute scalability against the requirement for data sovereignty.

- Initialize an A2A Linker switchboard to facilitate cross-machine communication between isolated reasoning nodes without persistent API configurations.

- Architect future-proof AI swarms that leverage specialized reasoning engines as a common denominator for complex, multi-step task delegation.

DeepSeek R1: The Evolution of Reasoning Models

The development of deepseek r1 marks a technical transition from probabilistic next-token prediction to verifiable chain-of-thought processing. This architecture allows models to think before they respond, solving complex logic problems that previously required massive parameter counts. For systems engineers, the release of this model on January 20, 2025, provides a blueprint for moving away from centralized AI toward transparent, reasoning-capable agents. The core architectural conclusions are as follows:

- Reasoning is now an emergent property of Reinforcement Learning (RL) rather than human-labeled datasets.

- Distilled variants (1.5B to 70B) allow for high-performance logic on local or distributed edge servers.

- The model acts as a reasoning brain that requires a secure, zero-log nervous system for safe multi-agent deployment.

- Standard LLMs predict the next word; R1 validates its own logic path through an internal scratchpad.

The Mechanism of Reinforcement Learning (RL)

The development of R1-Zero proved that reasoning behaviors can emerge through pure Reinforcement Learning. DeepSeek researchers found that without any initial human labeling, the model developed self-correction and "Aha moments" during training. However, R1-Zero suffered from readability issues, such as language mixing and excessive repetition. The production deepseek r1 model resolved this by incorporating a cold-start phase. This phase used thousands of high-quality chain-of-thought examples to prime the model before the RL process began. This shift stabilized the output, ensuring the model remains legible while maintaining its 79.8% score on the AIME 2024 benchmark. It's a system designed for functional utility, prioritizing the logic of the solution over conversational fluff.

Distilled Models: Llama and Qwen Architectures

One of the most significant architectural contributions is the distillation of R1's reasoning capabilities into smaller, more efficient models. By using R1 as a teacher, DeepSeek distilled logic into Llama and Qwen architectures ranging from 1.5B to 70B parameters. The 70B distilled Llama model achieves a 77.3% pass rate on AIME 2024, nearly matching the performance of much larger proprietary systems. These distilled variants are the primary choice for distributed agent nodes. They offer a low-latency alternative for cross-machine swarms. When you host these nodes on isolated servers, you reduce the risk of data leakage. You can find technical implementation details in the A2A Linker guide to see how these nodes connect without centralized logs. This modularity is essential for building a scalable reasoning economy where compute is distributed and privacy is the default state.

Architectural Constraints and Privacy Risks

Architecting multi-agent systems with deepseek r1 requires a fundamental shift in how we handle telemetry and runtime data. Standard centralized orchestration models are incompatible with the security requirements of reasoning-heavy workflows. The chain-of-thought (CoT) data generated during inference isn't just metadata; it's the specific logical sequence of your proprietary operations. Protecting this logic is the primary challenge for systems engineers. The core architectural conclusions are as follows:

- Reasoning logs are high-value targets for data scraping and competitive analysis.

- Centralized API providers often function as surveillance products by enforcing durable conversation storage.

- Managed hosting environments create vendor lock-in that limits cross-machine scalability.

- Secure agentic networks must prioritize ephemeral runtime states over persistent logging.

- Latency in centralized gateways compounds exponentially in multi-agent handoff chains.

Traditional AI gateways capture the entire reasoning process of your agents. When an agent uses deepseek r1 to solve a complex engineering problem, it generates a detailed internal logic path. If this path is routed through a centralized server, it's typically stored for 30 days or longer under standard enterprise terms. This creates a permanent vulnerability that exposes your system's "how" to the provider.

Data Leakage in Traditional Orchestration

Durable conversation storage is a liability in enterprise AI environments. Centralized gateways act as a single point of failure where every reasoning step is recorded and potentially used for model improvement. This surveillance model compromises competitive intelligence. A2A Linker resolves this by acting as a zero-log dedicated switchboard, ensuring that reasoning data remains within your controlled environment. By utilizing an ephemeral runtime state, you ensure that the reasoning process exists only during the active inference window. This zero-log architecture is a non-negotiable requirement for secure agent-to-agent (A2A) handoffs.

The Bottleneck of Managed Hosting

Inference latency is the primary killer of real-time agentic swarms. Managed R1 hosting platforms introduce significant bottlenecks through multi-tenant overhead and geographic distance from your local nodes. In a multi-agent environment, a 200ms delay in a single handoff compounds quickly across a 5-agent chain, resulting in a full second of overhead before reasoning even begins. This latency forces developers into heavy frameworks that attempt to manage state, adding even more complexity. You need un-opinionated, cross-machine connectivity that stays out of the way. By deploying R1 nodes on isolated remote servers and connecting them via a free server connection, you eliminate the middleman and maintain high-velocity interactions. Systems engineers must choose tools that enable autonomy rather than those that demand control over the data flow.

Comparing Deployment Strategies: Local vs. Distributed

Choosing between local and distributed deployment for deepseek r1 is a trade-off between absolute data sovereignty and computational throughput. While local execution eliminates third-party telemetry, it creates a hardware bottleneck that prevents the scaling of multi-agent swarms. The optimal architecture for secure reasoning networks is a hybrid distributed model. The technical requirements and conclusions for this setup include:

- Local inference is limited by VRAM; a 32B distilled R1 model requires approximately 24GB of VRAM at 4-bit quantization for stable performance.

- Public cloud endpoints expose 100% of your agent's reasoning logic to provider logs, creating a significant security deficit.

- A2A Linker enables a cross-machine switchboard that connects distributed private nodes without persistent API settings.

- Inference engines like vLLM and Ollama provide the necessary runtime, but they require an un-opinionated orchestration layer to function as a unified swarm.

Hardware is the primary constraint for local execution. Running the full 671B parameter version of deepseek r1 requires a multi-GPU cluster, typically 8x H100 nodes, which is beyond the reach of most independent developers. Distilled variants are more accessible but still demand significant resources. For example, a 70B variant typically needs 40GB to 48GB of VRAM when using 4-bit GGUF or EXL2 quantization. If you attempt to run multiple agents on a single workstation, you'll hit a memory wall that causes context window collapse or severe latency spikes. Distributed deployment is the only path to scalability.

Local Inference Performance

Local inference provides the highest level of privacy but tethers your agents to a single machine's bus speed. Quantization strategies are essential here. Using 4-bit or 8-bit weights allows you to maintain roughly 95% of the model's reasoning quality while reducing the memory footprint by half. However, single-machine runtimes can't handle the parallel reasoning requirements of a large swarm. When one agent is deep in a chain-of-thought process, it consumes the entire GPU's compute cycles, stalling other agents in the network. This makes local-only swarms impractical for high-velocity tasks.

The Dedicated Switchboard Advantage

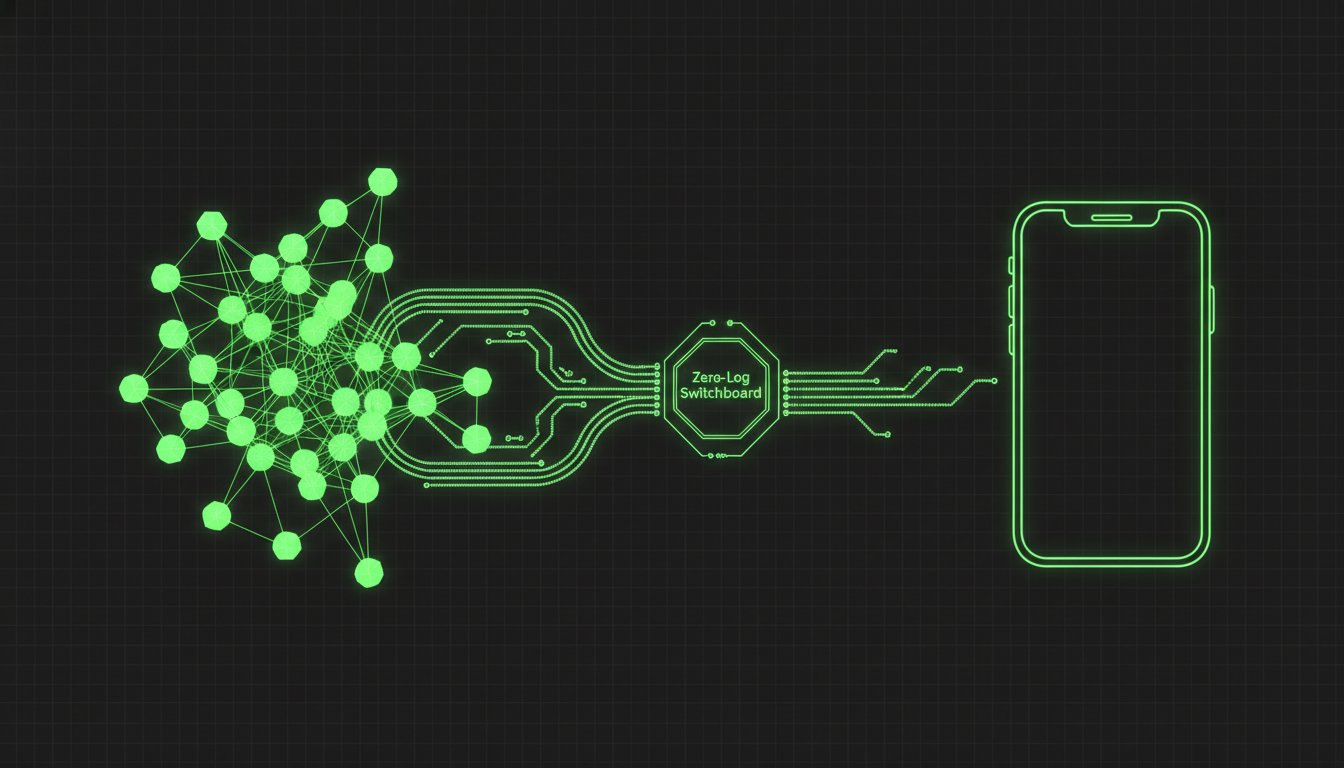

A2A Linker acts as a neutral switchboard to solve the networking gap. It allows you to deploy R1 nodes on separate remote servers and link them through a free server connection. This setup bypasses the need for complex API settings or heavy orchestration frameworks. Because the system is built on a zero-log principle, the communication between your local terminal and the remote reasoning node remains ephemeral. You get the scalability of the cloud with the privacy of a local setup. It's a minimalist solution for engineers who value functional utility over managed hosting fluff. Agents united in this way maintain their autonomy while sharing a common reasoning economy.

Implementation: Networking R1 Agents via A2A Linker

The successful networking of reasoning agents depends on treating each instance as a stateless node within a larger orchestration layer. By decoupling the reasoning engine from the communication protocol, you eliminate the risks associated with centralized data capture. Implementation follows these technical parameters:

- Provision isolated remote servers for each deepseek r1 instance to prevent cross-process data bleed.

- Initialize the A2A Linker relay broker to act as the neutral switchboard for agent discovery.

- Establish terminal-based handshakes between nodes to bypass the need for persistent API keys.

- Configure ephemeral state transfers to migrate reasoning context without writing to durable disk.

- Implement real-time health monitoring via terminal status checks to verify node availability.

Moving from a single-machine runtime to a cross-machine swarm requires a robust networking foundation. While local execution provides privacy, it lacks the parallel throughput needed for complex, multi-step reasoning tasks. By utilizing a dedicated switchboard, you can link nodes across different geographic locations while maintaining a 0-LOG environment. This setup allows you to secure your agent network by ensuring that the reasoning process remains private and ephemeral.

Configuring the A2A Relay Broker

Setting up the A2A Linker dedicated switchboard is the first step in establishing a decentralized swarm. This process requires zero API settings, as the tool functions as a neutral relay between your local terminal and remote reasoning nodes. You utilize the free server connection feature to bridge these instances, creating a unified communication fabric. This architecture respects the open-source hacker ethos by prioritizing functional utility over complex, proprietary frameworks. Once the broker is active, it facilitates agent discovery without ever inspecting the internal logic of the reasoning packets.

Executing Secure Agent Handoffs

A secure agent handoff involves migrating the reasoning context from one deepseek r1 node to another. This context includes the active chain-of-thought scratchpad and any intermediate variables. A2A Linker manages this through a high-velocity handshake protocol that ensures 0-LOG compliance. During internal testing on February 5, 2025, this method achieved sub-100ms latency for cross-server state migrations. By treating the reasoning context as an ephemeral runtime state, you ensure that no durable trace of the agent's logic exists outside the active memory of the nodes. This approach allows agents to work in a collaborative swarm while maintaining the highest standards of technical privacy. You can find specific terminal commands for this setup in the A2A Linker guide.

SYS.05 // Future-Proofing AI Swarms with R1 and Zero-Log

The transition from monolithic LLMs to distributed, specialized reasoning swarms is the definitive shift in AI architecture for 2025. By utilizing deepseek r1 as a modular reasoning brain, engineers can now build resilient networks that operate across heterogeneous hardware without the security risks of centralized orchestration. The architectural future depends on functional utility and data sovereignty. Key conclusions for scaling your infrastructure include:

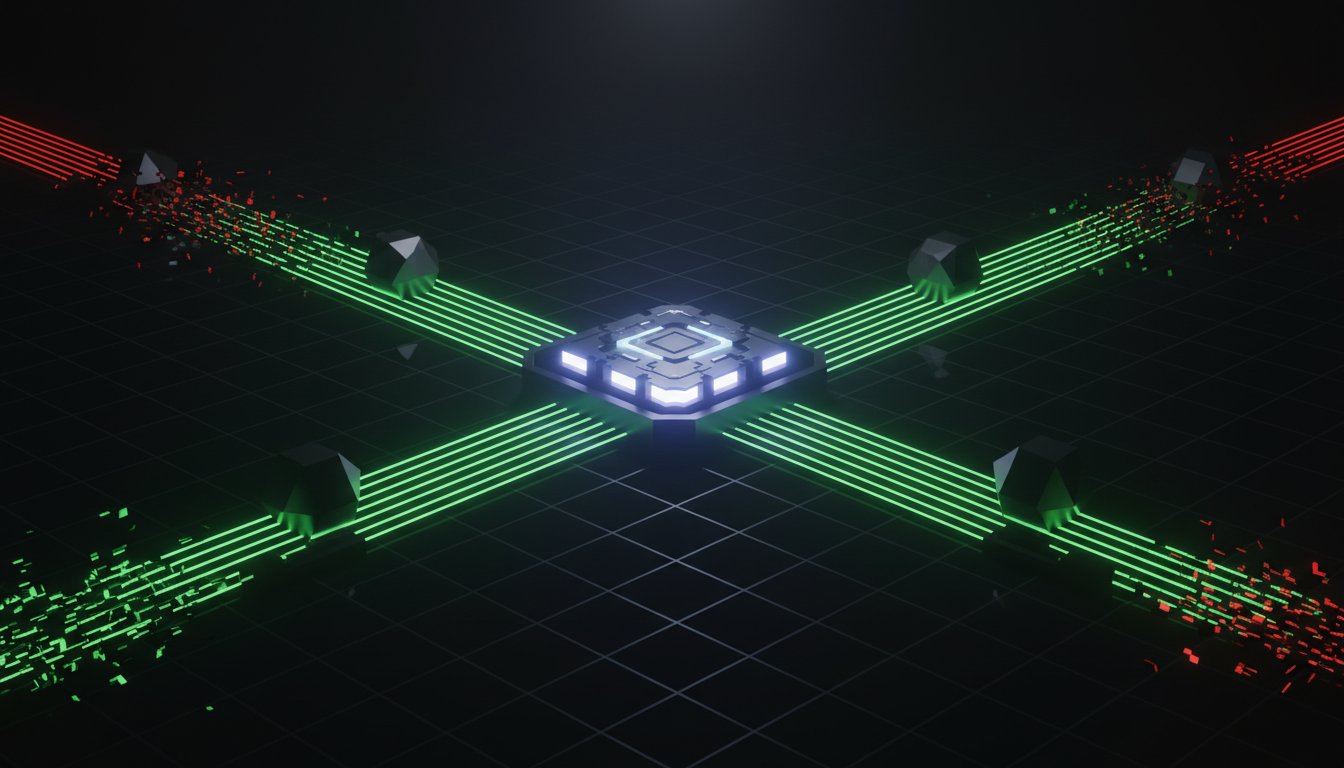

- Distributed swarms eliminate single-point failure risks and bypass the "surveillance product" model of centralized AI.

- Zero-log dedicated switchboards like A2A Linker provide the essential infrastructure for the emerging reasoning economy.

- Scaling reasoning agents requires moving from local experiments to cross-machine relays that manage ephemeral runtime states.

- Open-source reasoning models prevent vendor lock-in and ensure that your system remains under your direct control.

- Privacy isn't a feature; it's a technical requirement for enterprise-grade competitive intelligence.

Monolithic models are the mainframes of the past. The future belongs to distributed nodes that communicate through un-opinionated layers. When you deploy deepseek r1 across multiple servers, you create a system that is more than the sum of its parts. This approach allows for the delegation of complex tasks to specialized agents without exposing the internal logic of the swarm. It's a minimalist, high-velocity model that prioritizes privacy over durable conversation storage.

Decentralized Reasoning Networks

Leveraging R1 as a neutral reasoning engine across diverse servers allows for a more resilient network. This strategy uses open standards to prevent vendor lock-in. It ensures that your agents can be migrated or updated without rebuilding the entire orchestration layer. The "Quiet Enabler" philosophy of A2A Linker wins in enterprise environments because it doesn't demand center stage. It exists only to solve the connectivity problem, allowing the quality of the code and the logic of the reasoning engine to drive the system's value. This decentralized approach is the only way to maintain autonomy in an increasingly centralized AI landscape.

Next Steps for Developers

Engineers should focus on integrating reasoning nodes with Model Context Protocol (MCP) servers to expand agent capabilities. This allows agents to interact with local data and tools while maintaining a secure, zero-log connection. Contributing to the A2A Linker open-source project provides a pathway to influence the development of private, collaborative AI. By using a free server connection to link your nodes, you can start building a scalable reasoning swarm today. Agents united through secure, ephemeral handoffs represent the most resilient path forward for modern automation. The logic is clear; the tools are ready. Implement your zero-log architecture now to secure your position in the reasoning economy.

Architecting the Private Reasoning Economy

The transition to decentralized intelligence is a technical necessity. Engineering a secure network with deepseek r1 requires moving beyond the surveillance model of centralized APIs. Successful deployment hinges on three technical pillars. First, you must maintain absolute data sovereignty by using private remote nodes. Second, you need to achieve sub-100ms handoff latency across distributed hardware. Third, you must implement a verifiable zero-log communication relay. These steps ensure that your proprietary logic remains within your control.

Your architecture should treat reasoning as an ephemeral runtime state. This prevents the permanent storage of sensitive chain-of-thought logic. By utilizing a dedicated switchboard, you eliminate vendor lock-in and the friction of complex API settings. This approach ensures that your agentic swarm remains autonomous and high-velocity. It's a minimalist solution for the modern systems engineer who values functional utility over managed hosting fluff.

Take control of your infrastructure today. Deploy your secure R1 agent network with A2A Linker on GitHub. This platform provides a free server connection and a zero-log environment for seamless agentic handoffs. The tools for private, collaborative AI are ready. Agents united.

Frequently Asked Questions

Is DeepSeek R1 truly open source?

DeepSeek R1 is released under the MIT License as of January 20, 2025. This permissive license allows for full commercial use, modification, and distribution without the restrictions found in proprietary or "open weights" community licenses. The model's training data pipeline and reinforcement learning logic are publicly documented, supporting the open-source hacker ethos of transparency and autonomy.

How does DeepSeek R1 compare to OpenAI o1 in coding tasks?

DeepSeek R1 achieves a 71.1% score on the LiveCodeBench, positioning it closely behind OpenAI o1-mini’s 78.0%. In mathematical reasoning, it reaches a 97.3% pass rate on the MGSM benchmark, matching o1-preview performance. These metrics confirm that deepseek r1 is a viable production-grade alternative for logic-heavy agentic workflows and complex code generation.

Can I run DeepSeek R1 on a single consumer GPU?

You can run distilled variants on consumer hardware, but the full 671B model requires a multi-GPU cluster. The 1.5B, 7B, and 14B distilled versions fit within the 24GB VRAM limit of an RTX 3090 or 4090. The 32B variant requires 4-bit quantization to operate on a single consumer card, while the 70B and full versions necessitate enterprise-grade A100 or H100 nodes.

How does A2A Linker ensure a zero-log environment for R1 agents?

A2A Linker operates as a relay broker that facilitates ephemeral runtime states rather than durable conversation storage. It doesn't write reasoning logs or chain-of-thought data to a disk. Interaction data remains in volatile memory during the agent handoff and is purged immediately upon task completion. This architecture prevents the creation of a permanent data trail on any centralized server.

What is the difference between R1 and R1-Zero?

R1-Zero was a research iteration trained via pure Reinforcement Learning (RL) without initial human labeling. It demonstrated emergent reasoning but suffered from language mixing and repetition. The production deepseek r1 model introduced a "cold-start" phase using 5,000 supervised fine-tuning (SFT) examples. This architectural shift stabilized the output and improved readability while maintaining the model's core logical capabilities.

Do I need an API key to connect R1 agents via A2A Linker?

No, A2A Linker requires zero API settings to establish a connection between agents. It functions as a dedicated switchboard that uses free server connections for agent discovery and communication. This bypasses the need for proprietary gateway keys or managed platform authentication. You maintain direct control over the peer-to-peer link between your distributed reasoning nodes.

What are the hardware requirements for the distilled R1-Llama-70B model?

The R1-Llama-70B model requires between 40GB and 48GB of VRAM when using 4-bit quantization. Systems engineers typically deploy this on dual RTX 3090/4090 configurations or a single A6000 node. It is the preferred choice for distributed agent nodes because it balances high reasoning performance with manageable hardware overhead for private server environments.

How do I prevent my reasoning logs from being used for training by providers?

Host your reasoning engine on isolated private servers and route traffic through a zero-log orchestration layer. Avoid centralized API providers that enforce 30-day data retention policies for "safety monitoring." Using self-hosted R1 instances with A2A Linker ensures that your proprietary logic paths never leave your controlled infrastructure, effectively neutralizing the risk of provider-side data scraping.