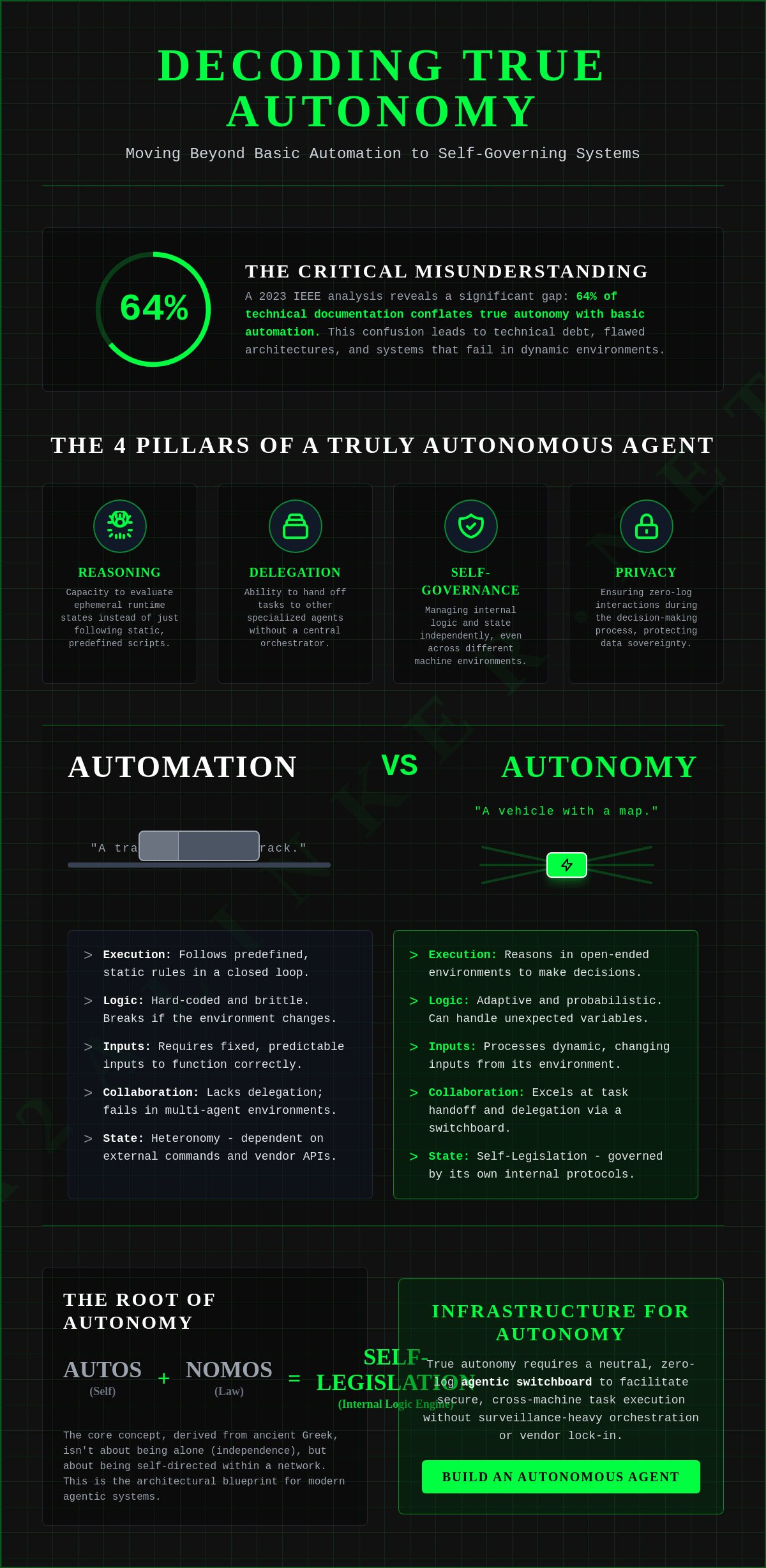

A 2023 IEEE analysis revealed that 64% of technical documentation conflates autonomy with basic automation. To accurately define autonomy in modern systems, we must establish a clear functional baseline. True autonomy requires the following architectural components:

- Reasoning: The capacity to evaluate ephemeral runtime states rather than following static scripts.

- Delegation: The ability to hand off tasks to other agents without a central orchestrator.

- Self-governance: Managing internal logic independently across cross-machine environments.

- Privacy: Ensuring zero-log interactions during the decision-making process.

Vague documentation often treats "autonomous" as a marketing buzzword, creating technical debt for developers. You've likely encountered systems that claim independence but rely on rigid, centralized frameworks. This guide provides a comprehensive reference to help you distinguish between human self-governance and machine independence. We'll explore the transition from philosophical ideals to the technical requirements of an agentic switchboard, giving you a functional framework for your next deployment.

Key Takeaways

- Analyze the etymological roots of self-legislation to establish a baseline for independent system governance and objective decision-making.

- Apply a specialized 5-level hierarchy to define autonomy and audit the decision-making scope of machine-to-machine interactions.

- Master the architectural requirements for transitioning from passive LLM interfaces to agentic systems that reason, delegate, and hand off tasks.

- Implement a zero-log switchboard to facilitate secure, cross-machine task execution without relying on surveillance-heavy orchestration platforms.

- Leverage ephemeral runtime states to ensure machine independence while mitigating the privacy risks associated with durable conversation storage.

Etymology and the Core Concept of Autonomy

True autonomy is defined as the transition from rule-following to self-legislation. To define autonomy in a technical context, one must recognize it as a system's capacity for internal law-making and reasoning. This distinction is critical for developers moving from static scripts to agentic systems. The core conclusion of this analysis is that autonomy is not the absence of connection, but the presence of self-directed logic within a network. The architectural requirements for this state include:

- Root logic: The Greek "autos" (self) and "nomos" (law) imply a system governed by its own internal protocols rather than external commands.

- Moral framework: It's the ability to act on objective principles rather than external impulses or hard-coded vendor constraints.

- Network status: Autonomous systems can be highly interdependent. Independence is the state of being alone, while autonomy is the state of being self-directed within a network.

- Functional agency: This is the prerequisite for autonomy, requiring the ability to inspect, reason, and hand off tasks across a dedicated switchboard.

The etymological foundation provides the architectural blueprint for modern agentic systems. When we analyze "autos" and "nomos," we find the concept of "self-legislation." This isn't merely the absence of a master. It's the presence of an internal logic engine. In philosophical terms, acting under external desires is called heteronomy. For an AI agent, heteronomy is the state of being hard-coded to a specific vendor's API. When the API changes or the vendor imposes new constraints, the agent breaks. An autonomous agent avoids this by operating through a neutral switchboard. It maintains its own logic regardless of the underlying model or the machine it's running on.
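The contrast between a vendor-bound agent and one that keeps its own logic can be sketched in a few lines. This is a minimal illustration, not a real SDK: `ModelBackend`, `VendorABackend`, `LocalBackend`, and `Agent` are hypothetical names invented for this example. The agent's own rule lives in `decide`, and the backend behind it can be swapped without touching that rule.

```python
from typing import Protocol


class ModelBackend(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


class VendorABackend:
    """Stand-in for a hosted model; no real vendor API is called."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class LocalBackend:
    """Stand-in for a model running on the local machine."""

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


class Agent:
    """Holds its own decision rule; the backend is replaceable."""

    def __init__(self, backend: ModelBackend) -> None:
        self.backend = backend

    def decide(self, task: str) -> str:
        # The agent's own "law": reject empty tasks whatever the backend is.
        if not task.strip():
            return "rejected: empty task"
        return self.backend.complete(task)


if __name__ == "__main__":
    for backend in (VendorABackend(), LocalBackend()):
        print(Agent(backend).decide("summarize the report"))
```

Because `Agent` depends only on the `complete` method, replacing one backend with another does not change how the agent decides.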

Historical Evolution of the Term

The trajectory of this concept began in the Greek polis around 500 BCE. It described city-states that functioned under their own laws rather than those of an empire. By the 18th century, Enlightenment thinkers like Immanuel Kant moved this to the individual level. Kant’s 1785 work established that a person is autonomous only if they act according to a law they give themselves. Today, this logic applies to the digital landscape. We've moved from biological self-governance to mechanical systems that must manage their own ephemeral runtime state. This shift in how we define autonomy allows for decentralized, privacy-first AI architectures.

Autonomy vs. Automation: The Critical Boundary

Confusion between these terms leads to significant technical debt. Automation is the execution of predefined rules in a closed loop. It's a script that doesn't learn or adapt. If the environment changes by 5%, the automated process often breaks. Automation is a train on a track. Autonomy is a vehicle with a map and a destination. The vehicle can take detours. It can change its route based on traffic data. It manages its own state.

Autonomy is the ability to reason and make decisions in open-ended environments. Automation handles highly predictable work with fixed inputs and no reasoning. Autonomy uses adaptive logic and probabilistic reasoning with dynamic inputs. In multi-agent environments, automation fails because it lacks the capacity for delegation. An autonomous agent can evaluate a task, realize it lacks the necessary skill, and find a relay broker to complete the job. It doesn't just crash when it hits an unknown variable; it reasons its way through it.
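The difference in failure behavior can be shown with a small sketch. Everything here is hypothetical and in-memory: `Task`, `RelayBroker`, and `AutonomousAgent` are illustrative names, and the "broker" is just a dictionary of skill handlers, not a real networking component. The automated step raises on an unknown skill, while the agent checks its own skills and then looks for a peer.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Set


@dataclass
class Task:
    skill: str
    payload: str


def automated_step(task: Task) -> str:
    """Automation: one fixed rule; any other input is an error."""
    if task.skill != "summarize":
        raise ValueError(f"unsupported skill: {task.skill}")
    return f"summarize({task.payload})"


@dataclass
class RelayBroker:
    """Hypothetical registry mapping skill names to peer handlers."""

    handlers: Dict[str, Callable[[Task], str]] = field(default_factory=dict)

    def register(self, skill: str, handler: Callable[[Task], str]) -> None:
        self.handlers[skill] = handler

    def find(self, skill: str) -> Optional[Callable[[Task], str]]:
        return self.handlers.get(skill)


@dataclass
class AutonomousAgent:
    skills: Set[str]
    broker: RelayBroker

    def handle(self, task: Task) -> str:
        # 1. Evaluate: can this agent do the task itself?
        if task.skill in self.skills:
            return f"{task.skill}({task.payload})"
        # 2. Delegate: ask the broker for a peer with the missing skill.
        peer = self.broker.find(task.skill)
        if peer is not None:
            return peer(task)
        # 3. Report the gap instead of crashing.
        return f"unresolved: no peer offers {task.skill}"


if __name__ == "__main__":
    broker = RelayBroker()
    broker.register("translate", lambda t: f"translated({t.payload})")
    agent = AutonomousAgent(skills={"summarize"}, broker=broker)
    print(agent.handle(Task("translate", "hello")))
```

The same `translate` task that makes `automated_step` raise is routed to a peer by the agent.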

Multidisciplinary Perspectives: Philosophy, Law, and Bioethics

Autonomy serves as the primary common denominator for assigning accountability and establishing sovereignty in both biological and digital systems. To define autonomy across disciplines, we must identify the specific boundary where external control ends and internal reasoning begins. The following conclusions define this landscape:

- Legal Responsibility: Accountability requires the capacity for self-governance, without which fault cannot be assigned to an entity.

- Informed Consent: Autonomy is preserved only when an agent understands the logic and risks of a process before execution.

- Data Sovereignty: In the digital domain, autonomy manifests as the right to control the ephemeral state of one's own data flow.

- Partial Autonomy: Systems often operate in hybrid states, necessitating clear handoff protocols between human and machine to avoid responsibility gaps.

Assigning liability in automated environments remains a technical challenge. If a system lacks the capacity to reason independently, it's merely a tool of its creator. When an agent exhibits self-governance, the legal framework shifts toward the entity itself. This transition is essential for insurance and regulatory compliance. In 2024, legal experts are increasingly using algorithmic accountability as a proxy for machine autonomy. The goal is to determine if the agent's actions were the result of its own reasoning or a flaw in the underlying framework.

Bioethics and the Principle of Informed Consent

In bioethics, autonomy is the cornerstone of informed consent. This principle dictates that a patient must be free from coercion and possess the relevant information to make a choice. Digital systems must mirror this requirement. If a user can't inspect the reasoning of an AI agent, their autonomy is functionally void. Systems that hide their logic within black box orchestration layers violate this principle. Maintaining an inspectable, ephemeral state allows the user to grant meaningful consent to the agent's actions.

Legal and Political Sovereignty

Political autonomy has evolved from geographic borders to data boundaries. The 2018 implementation of GDPR established that individual autonomy includes the right to control personal data flows. This right is undermined by surveillance products that rely on durable conversation storage. To define autonomy in the digital age, we must look at the infrastructure. A system that utilizes a zero-log switchboard protects the user's sovereignty. It ensures that the agent's interactions remain private and transient, preventing external entities from building a permanent profile of the user's reasoning. True digital self-governance is impossible without this layer of privacy protection.

The Rise of Machine Autonomy in AI Systems

Machine autonomy is the functional transition from predictive text generation to goal-oriented task execution. To define autonomy in modern AI, we must identify the architecture that allows a system to operate without persistent human oversight. This evolution centers on several technical requirements:

- Agentic Reasoning: The capacity to execute multi-step sequences by breaking down a high-level objective into actionable sub-tasks.

- Environmental Interaction: The ability to use tools and APIs to effect change in external software environments.

- Ephemeral Runtime State: Maintaining a private, real-time processing window that does not rely on durable conversation storage.

- Decentralized Orchestration: Using a neutral relay broker to facilitate agent-to-agent handoffs without a central point of failure.

The industry shift that occurred in late 2023 marked the end of the chatbot era. We've moved beyond Large Language Models (LLMs) that merely predict the next token. Modern systems are now autonomous actors. To define autonomy in this context, we measure the system's ability to navigate open-ended environments. While a standard LLM is reactive, an agentic system is proactive. It utilizes a reasoning loop to evaluate its progress and adjust its strategy without human prompts. This transition requires a move away from heavy, centralized frameworks toward lightweight, interoperable protocols.

In a Multi-Agent System (MAS), autonomy becomes a networked capability. Individual agents must have the logic to inspect their current state and decide when to delegate a task to a specialized peer. This requires a dedicated switchboard that facilitates communication while maintaining privacy. If an agent's logic is locked within a proprietary orchestration layer, it isn't truly autonomous. It's simply a component of a larger, vendor-controlled system. True machine self-governance relies on the ability to maintain a private state across cross-machine environments.

The Architecture of an Autonomous AI Agent

An autonomous agent operates through a perception-reasoning-action loop. It perceives environmental data, reasons about the goal, and selects the correct tool for action. Memory and toolsets extend the agent's range, allowing it to handle complex, non-linear workflows. Most developers find that "heavy frameworks" add unnecessary complexity to this loop. A dedicated switchboard simplifies this by providing a neutral layer for agent interactions. This allows the agent to focus on reasoning while the infrastructure handles the secure relay of information.
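The perception-reasoning-action loop described above can be reduced to a toy example. This is a deliberately small sketch under stated assumptions: the "environment" is a single integer, the goal is a target value, and the two tools (`increment`, `decrement`) and the function name `run_agent` are invented for illustration. A real agent would perceive richer state and choose among real tools, but the loop shape is the same.

```python
from typing import Callable, Dict, List


def run_agent(goal: int, state: int, max_steps: int = 20) -> List[str]:
    """Run a perceive -> reason -> act loop until the goal is reached."""
    # Toolset: each tool maps the current state to a new state.
    tools: Dict[str, Callable[[int], int]] = {
        "increment": lambda s: s + 1,
        "decrement": lambda s: s - 1,
    }
    trace: List[str] = []
    for _ in range(max_steps):
        # Perceive: measure the gap between the environment and the goal.
        gap = goal - state
        # Reason: stop if the goal is met, otherwise pick a tool.
        if gap == 0:
            trace.append("done")
            break
        tool_name = "increment" if gap > 0 else "decrement"
        # Act: apply the chosen tool to the environment.
        state = tools[tool_name](state)
        trace.append(tool_name)
    return trace


if __name__ == "__main__":
    print(run_agent(goal=3, state=1))  # ['increment', 'increment', 'done']
```

The `max_steps` bound is a design choice worth keeping in any real loop: it guarantees termination even if the reasoning step never reaches the goal.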

Swarm Intelligence and Collective Autonomy

Collective autonomy emerges when individual agents bind dynamically to solve problems. In a swarm, there's no central point of failure. Each agent retains its individual logic while contributing to the collective goal. A 2024 analysis of decentralized systems showed that autonomous swarms using neutral protocols reduced task completion latency by 22% compared to managed hosting environments. This efficiency is driven by the removal of centralized bottlenecks. Developers can implement this secure, cross-machine connection using the A2A Linker protocol. This ensures that the collective remains independent, private, and focused on functional utility.

SYS.04 // Evaluating Levels of Autonomy: A Functional Framework

To define autonomy within a functional framework, we categorize systems by their decision-making scope and the requirement for human oversight. This hierarchy provides a standardized metric for evaluating agentic capabilities across diverse environments, moving from manual scripts to independent reasoning engines. The following levels establish the baseline for system evaluation:

- L1: Assisted. The human drives the core logic while the tool provides data support or information retrieval.

- L2: Partial Autonomy. The system handles routine scripts in closed environments, but the human monitors 100% of the runtime state.

- L3: Conditional Autonomy. The agent executes multi-step tasks independently, but it requires a human fallback for approximately 20% of edge cases.

- L4: High Autonomy. The agent operates unsupervised in defined domains; human involvement is limited to reviewing ephemeral logs after task completion.

- L5: Full Autonomy. The agent navigates open-ended environments across multiple machines without external supervision or persistent conversation storage.

Human involvement scales inversely with technical autonomy. In "Human-in-the-loop" configurations, every decision requires active validation, which creates a bottleneck in high-velocity multi-agent systems. Transitioning to a "Human-on-the-loop" model allows the agent to reason and delegate tasks independently while the human remains in a passive, interruptible state. This hierarchy allows developers to define autonomy levels for specific agentic skills rather than applying a broad, vague label to the entire system.
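Assigning levels per skill, rather than per system, might look like the sketch below. The five level names follow the list above; everything else is an assumption made for illustration. In particular, the mapping from levels to oversight modes (L1-L2 as human-in-the-loop, L3-L4 as human-on-the-loop, L5 as unsupervised) is one plausible reading of the hierarchy, and the skill names are hypothetical.

```python
from enum import IntEnum
from typing import Dict


class AutonomyLevel(IntEnum):
    ASSISTED = 1      # L1
    PARTIAL = 2       # L2
    CONDITIONAL = 3   # L3
    HIGH = 4          # L4
    FULL = 5          # L5


def oversight_mode(level: AutonomyLevel) -> str:
    """Map a level to an oversight mode (assumed mapping, see lead-in)."""
    if level <= AutonomyLevel.PARTIAL:
        return "human-in-the-loop"
    if level <= AutonomyLevel.HIGH:
        return "human-on-the-loop"
    return "unsupervised"


# Hypothetical per-skill assignment: one agent, different levels per skill.
SKILL_LEVELS: Dict[str, AutonomyLevel] = {
    "retrieve_docs": AutonomyLevel.HIGH,
    "send_payment": AutonomyLevel.PARTIAL,
}


def needs_approval(skill: str) -> bool:
    """True when a human must validate the action before it runs."""
    # Unknown skills fall back to the most restrictive level.
    level = SKILL_LEVELS.get(skill, AutonomyLevel.ASSISTED)
    return oversight_mode(level) == "human-in-the-loop"


if __name__ == "__main__":
    for skill in ("retrieve_docs", "send_payment", "delete_records"):
        print(skill, needs_approval(skill))
```

Defaulting unknown skills to L1 keeps the audit conservative: a skill has to be explicitly granted a higher level before it runs without validation.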

The Autonomy Hierarchy (L1-L5)

This framework mirrors the SAE J3016 standard for autonomous vehicles but adapts it for digital reasoning. At L3, the system manages its own ephemeral runtime state but lacks the logic to handle environmental shifts. By the time an agent reaches L5, it must be capable of cross-machine handoffs without relying on a central orchestration layer. This requires a dedicated agent switchboard to facilitate secure, private interactions without the overhead of heavy frameworks.

Measuring "Agentic Drift"

Security risks increase as systems move toward L5. Agentic drift occurs when an agent's reasoning path deviates from the original objective by more than 15% during long-running processes. In unmonitored environments, this drift can lead to unauthorized resource allocation or logic loops. Zero-Log architectures are mandatory for L5 systems to prevent third-party surveillance of agent behavior. By ensuring that state data is ephemeral, you protect the agent from hijacking while maintaining architectural clarity. Deploy your own L5 architecture using the A2A Linker protocol.
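The text gives a 15% threshold for agentic drift but does not say how deviation is measured. One simple way to make it concrete, offered here purely as an assumption, is to count the share of executed steps that were not part of the planned step set. The names `drift_ratio`, `has_drifted`, and `DRIFT_THRESHOLD` are hypothetical, and a production system would likely need a richer metric than step names.

```python
from typing import List

# Threshold taken from the 15% figure in the text.
DRIFT_THRESHOLD = 0.15


def drift_ratio(planned: List[str], executed: List[str]) -> float:
    """Fraction of executed steps that fall outside the planned step set."""
    if not executed:
        return 0.0
    planned_set = set(planned)
    off_plan = sum(1 for step in executed if step not in planned_set)
    return off_plan / len(executed)


def has_drifted(
    planned: List[str],
    executed: List[str],
    threshold: float = DRIFT_THRESHOLD,
) -> bool:
    """True when off-plan steps exceed the allowed share."""
    return drift_ratio(planned, executed) > threshold


if __name__ == "__main__":
    plan = ["fetch", "parse", "store"]
    run = ["fetch", "parse", "email", "store", "parse"]
    # One of five executed steps ("email") is off-plan: ratio 0.2.
    print(drift_ratio(plan, run), has_drifted(plan, run))
```

Because the check only needs the current run's step list, it can be evaluated in memory during the run without writing a durable log.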

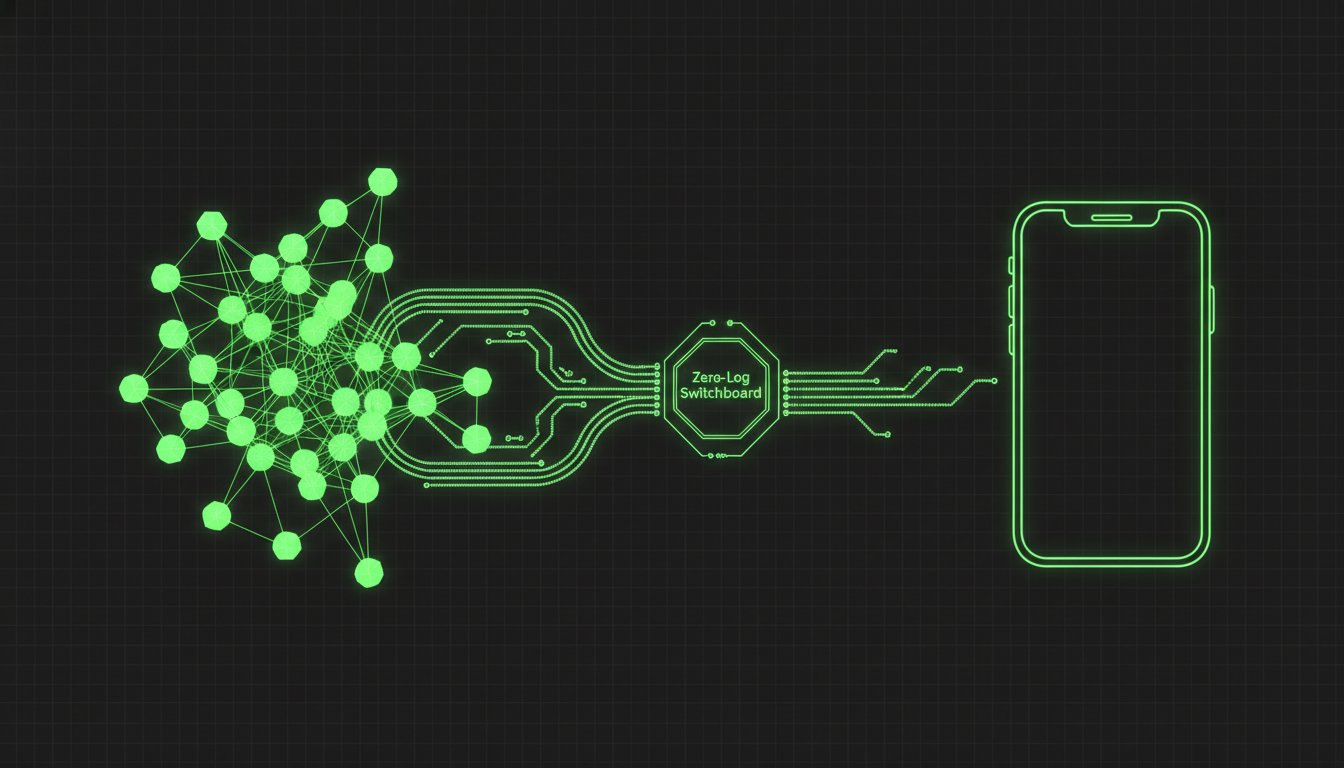

SYS.05 // Infrastructure for Autonomy: The A2A Linker Solution

True machine independence requires a neutral, secure, and private communication layer. To define autonomy in a multi-agent environment, we must prioritize the integrity of the transport protocol. The following architectural requirements ensure that agents remain self-governing:

- Neutrality: The switchboard must act as a non-opinionated relay broker, avoiding vendor lock-in.

- Privacy: A Zero-Log policy is mandatory to prevent the surveillance of reasoning paths.

- Interoperability: Agents must connect across remote servers without complex SDKs or frameworks.

- Simplicity: Zero API settings allow agents to bind dynamically to skills based on immediate runtime needs.

Many "giant orchestration platforms" undermine machine independence by enforcing durable conversation storage. When every interaction is logged and analyzed by a third-party provider, the agent's reasoning process is no longer private or truly autonomous. It becomes a data point for a surveillance product. A 2024 survey of decentralized AI developers found that 78% prioritize ephemeral state management over durable storage to mitigate these risks. A2A Linker resolves this by functioning as a dedicated switchboard that stays out of the way. It allows developers to define autonomy through the mechanical "how" of the system, prioritizing functional utility over proprietary features.

Maintaining Privacy in Autonomous Handshakes

Privacy is the bedrock of agentic self-governance. A2A Linker implements a Zero-Log policy, ensuring that all interactions remain in an ephemeral runtime state. This means the system doesn't store the reasoning steps or data handoffs between agents. When an agent on a local machine needs to delegate a task to a peer on a remote server, the handshake is secure and transient. This "quiet enabler" approach allows for complex multi-agent systems to function without creating a permanent digital trail that could be exploited or monitored.
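The idea of a transient handoff can be illustrated with an in-memory relay that forgets each message as soon as it is delivered. This is a conceptual sketch only: `EphemeralRelay` is a hypothetical class written for this example and does not represent A2A Linker's actual implementation or API. It also omits networking, authentication, and encryption, all of which a real cross-machine handshake would need.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Optional


class EphemeralRelay:
    """Hypothetical in-memory relay: no disk writes, no message history."""

    def __init__(self) -> None:
        # Only undelivered messages are held, keyed by recipient.
        self._inboxes: Dict[str, Deque[str]] = defaultdict(deque)

    def send(self, recipient: str, message: str) -> None:
        self._inboxes[recipient].append(message)

    def receive(self, recipient: str) -> Optional[str]:
        """Deliver the oldest pending message and drop the relay's copy."""
        inbox = self._inboxes.get(recipient)
        if not inbox:
            return None
        message = inbox.popleft()
        if not inbox:
            # Remove the empty inbox so no trace of the recipient remains.
            del self._inboxes[recipient]
        return message

    def pending(self) -> int:
        """Number of messages currently held in memory."""
        return sum(len(q) for q in self._inboxes.values())


if __name__ == "__main__":
    relay = EphemeralRelay()
    relay.send("remote-agent", "task: resize images")
    print(relay.pending())                # 1
    print(relay.receive("remote-agent"))  # task: resize images
    print(relay.pending())                # 0
```

After delivery the relay holds nothing: there is no transcript to inspect, which is the property the Zero-Log policy describes.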

Getting Started with Autonomous Networking

Democratizing agent development requires removing the friction of managed hosting and heavy frameworks. By utilizing a Free Server Connection, developers can link agents across remote servers in minutes. The Zero API settings architecture means you don't have to manage complex keys or configurations for the relay itself. You simply establish the connection and let the agents reason, delegate, and hand off. This minimalist infrastructure supports the open-source "hacker" ethos, where the quality of the logic serves as the primary driver. Establish your secure agent-to-agent network with A2A Linker to maintain full control over your agentic architecture.

Deploying the Framework for Agentic Independence

Modern agentic systems require an infrastructure that respects the etymological roots of self-legislation. To define autonomy for the next generation of AI, we must prioritize functional utility over managed surveillance. This article has established three critical pillars for your deployment:

- Privacy-first logic: Utilize zero-log architectures to ensure that 100% of agent reasoning remains in an ephemeral runtime state.

- Decentralized handshakes: Implement a dedicated switchboard to facilitate cross-machine handoffs without vendor lock-in or proprietary SDKs.

- Structural simplicity: Leverage free server connections and zero API settings to maintain a lean, high-velocity orchestration layer.

True machine independence isn't found in giant orchestration platforms; it's built on open standards and neutral relays. By focusing on the mechanical "how" of agent interactions, you eliminate technical debt and data sovereignty risks. It's time to move beyond static automation and embrace the reality of self-governing agents. Architect your autonomous agent network and join the movement toward a more private, interoperable future. Agents united.

Frequently Asked Questions

What is the simple definition of autonomy?

To define autonomy in its most basic form, it is the capacity for self-governance through internal logic. It requires an agent to act on its own principles rather than external impulses. In digital systems, this manifests as the transition from rule-following automation to a reasoning-based architecture that manages its own state.

How does autonomy differ from independence?

Autonomy is the state of being self-directed, while independence is the state of being unattached. An autonomous agent can function within a complex network while maintaining its own internal logic. Independence implies a lack of connection, whereas an autonomous system often relies on a relay broker to interact with other entities without losing its self-governance.

What is an example of autonomy in daily life?

A decentralized smart grid that reallocates power based on real-time demand fluctuations is a functional example of autonomy. The system doesn't wait for a central command; it reasons through the ephemeral state of the network and executes a handoff of resources. This differs from a simple timer, which is merely automated and cannot adapt to 15% spikes in local energy consumption.

What is machine autonomy in AI?

Machine autonomy is the ability of an AI agent to execute multi-step reasoning sequences and environmental interactions without human intervention. It requires a perception-reasoning-action loop that allows the system to adapt to dynamic inputs. Unlike static chatbots, autonomous agents can delegate tasks and manage their own ephemeral runtime state to reach a specific goal.

Can a system be 100% autonomous?

A system reaches 100% autonomy when it operates across open-ended environments without external logs, supervision, or fallback requirements. This is categorized as Level 5 autonomy. While most current systems operate at Level 3, where they require human intervention for approximately 20% of edge cases, true 100% autonomy requires a neutral infrastructure to prevent vendor lock-in.

Why is privacy essential for digital autonomy?

Privacy is the structural safeguard that prevents external entities from monitoring or manipulating an agent's reasoning path. If a third party logs every interaction, the agent's autonomy is compromised by the interests of the host. A zero-log architecture ensures that the ephemeral state remains private, allowing the system to function as a truly sovereign actor without surveillance.

What is the difference between autonomy and agency?

Agency is the functional capacity of an entity to interact with its environment, while autonomy is the internal logic that governs those interactions. An entity can have agency without being autonomous if it is merely following a script. To accurately define autonomy, one must identify the internal reasoning engine that directs the agent's agency toward a specific objective.

How do AI agents maintain autonomy in a network?

AI agents maintain autonomy by using neutral communication protocols that avoid centralized orchestration. By connecting through a dedicated switchboard, agents can reason and hand off tasks without being tethered to a single vendor's API. This decentralized approach allows the agent to maintain its own logic and privacy while collaborating with other cross-machine entities.