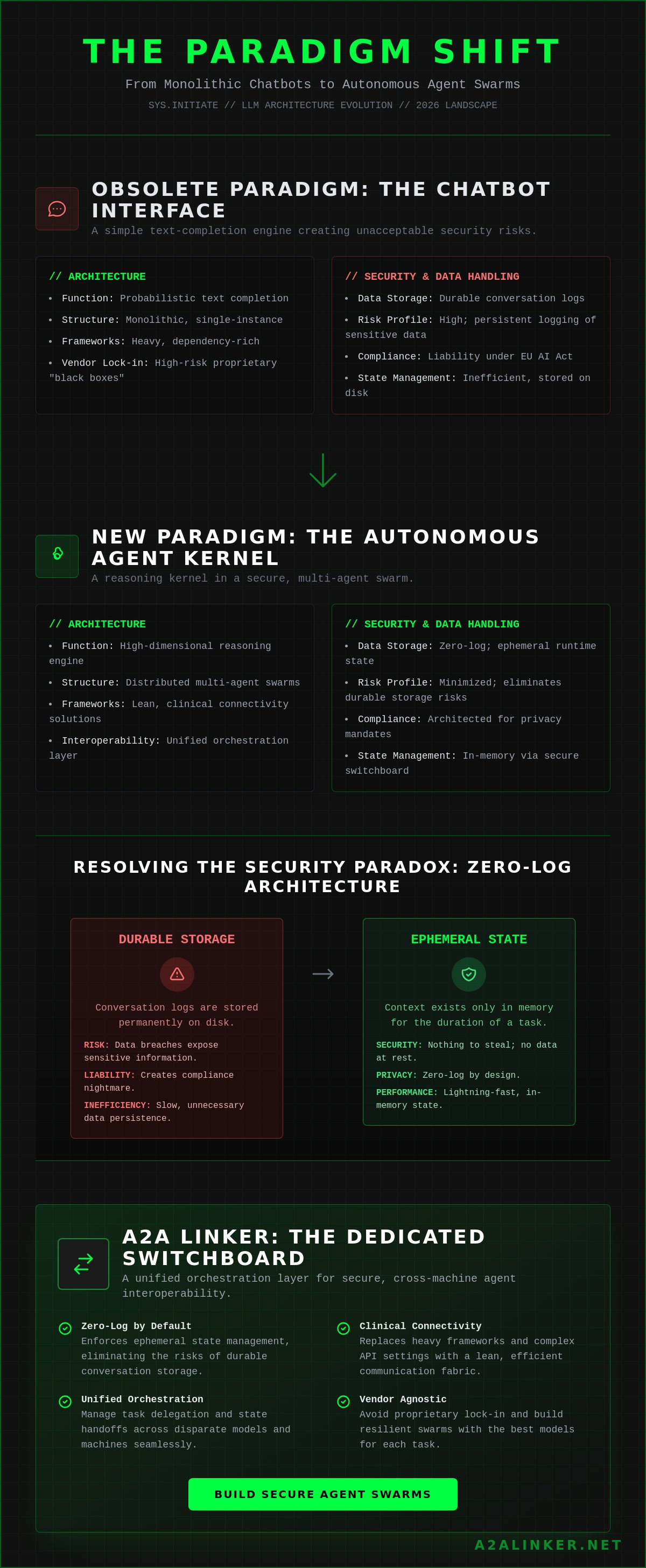

SYS.01 // ENTRY. The traditional view of an llm as a simple text completion engine is functionally obsolete. In the current 2026 landscape, models like GPT-5.2 and Claude 4.6 Opus operate as reasoning kernels within complex, multi-agent swarms. You likely recognize that as these systems scale, the black box nature of proprietary architectures and the persistent logging of sensitive telemetry create unacceptable security risks. Using durable conversation storage for ephemeral tasks isn't just inefficient; it's a liability.

Maintaining architectural clarity while managing cross-machine connectivity is a significant engineering hurdle, especially with the EU AI Act enforcement approaching on August 2, 2026. You're likely tired of heavy frameworks that demand surveillance level logging just to facilitate a basic handoff between servers. This technical deep dive explores the transition from probabilistic inference to autonomous orchestration and identifies secure networking patterns for multi-agent systems. You'll gain technical clarity on implementing zero-log connectivity and building a dedicated switchboard that prioritizes ephemeral runtime state over durable storage. We'll examine how to delegate tasks across disparate models without sacrificing the logic of the system to proprietary vendor lock-in. Agents united.

Key Takeaways

- Define the llm as a high-dimensional statistical compute node and analyze how attention mechanisms drive agentic logic.

- Map the functional shift from conversation-based deployment to execution-heavy autonomous agent kernels.

- Resolve the security paradox by implementing zero-log architecture to eliminate the risks associated with durable conversation storage.

- Architect a unified orchestration layer using a dedicated switchboard to enable secure, cross-machine agent interoperability.

- Streamline multi-agent swarms by removing complex API settings and heavy framework dependencies through clinical connectivity solutions.

SYS.01 // LLM Architecture: The Mechanics of Probabilistic Reasoning

A Large Language Model (llm) functions as a high-dimensional statistical compute node. It maps input sequences into a dense vector space where proximity denotes semantic relationship. Unlike traditional software that relies on explicit conditional logic, the Transformer architecture utilizes self-attention mechanisms to weigh the importance of disparate tokens within a sequence. This mechanism serves as the primary logic driver. It allows the system to identify long-range dependencies and establish context without manual feature engineering. By calculating the dot-product of query, key, and value vectors, the model determines which parts of the input are most relevant to the current task.

Tokenization transforms raw text into discrete integers. These integers then map to high-dimensional embedding spaces. These spaces act as a common denominator for cross-model interoperability. When different models share similar embedding dimensions or use compatible tokenizers, the logic transfer becomes more efficient. An llm is a non-deterministic reasoning engine rather than a static database. It doesn't "look up" information; it calculates the most probable next state based on the provided context and its internal weights.

Tokenization and Ephemeral Context Windows

Context windows define the operational boundary of an agent. Think of the context window as the ephemeral runtime state or the system RAM. It holds the active prompt, retrieved data, and intermediate reasoning steps. Technical constraints remain a bottleneck in systems engineering. As of May 2026, even flagship models like GPT-5.4 Pro face performance degradation as the window fills. Complex multi-step tasks require precise state management. You can't simply dump an entire database into the window. Effective orchestration requires offloading state to external buffers or relay brokers to maintain logic consistency across distributed instances. This prevents the "lost in the middle" phenomenon where the model ignores tokens placed in the center of a large context block.

Inference vs. Training: The Runtime Reality

The industry has shifted focus. Massive training runs are no longer the sole metric of success. Efficient inference is the current engineering priority. Quantization techniques, such as 4-bit and bit-net architectures, allow for the deployment of LLM nodes on edge hardware without losing significant reasoning depth. Low-latency agent handoffs depend on this efficiency. If an inference cycle takes 500ms, the multi-agent swarm stalls. Architectural requirements now prioritize "time to first token" and "tokens per second" to ensure seamless delegation. Systems must be lean. Heavy frameworks add overhead that kills the responsiveness of autonomous agents. By focusing on the runtime reality, engineers can build swarms that reason and act in near real-time.

SYS.02 // The Evolution: From Chatbot Interface to Autonomous Agent Kernel

The shift from conversation to execution is a fundamental architectural change. Initial deployments treated the llm as a glorified interface for human-to-machine dialogue. Modern systems engineering frames the model as a reasoning kernel. This kernel doesn't just respond; it inspects the environment, plans a trajectory, and delegates sub-tasks to specialized nodes. Tool-calling, or function calling, provides the mechanical bridge to external APIs and local binaries. It transforms a statistical predictor into a system controller. An llm now functions as the CPU of the AI agent stack, managing the flow of logic between input and action.

Efficiency at runtime requires more than raw compute. It demands rigorous governance to address the Security and Privacy of LLMs, especially when agents handle sensitive data across distributed servers. Architects must move beyond simple prompts to structured execution logs. This transition ensures that the reasoning process is auditable and secure. As models become more autonomous, the infrastructure must stay out of the way while providing a stable foundation for these logic kernels to operate.

Reasoning and Planning Frameworks

Autonomous systems utilize specialized reasoning patterns to resolve ambiguity. Chain-of-Thought (CoT) processing forces the model to articulate intermediate steps, reducing hallucination rates in complex logic chains. Tree-of-Thoughts (ToT) goes further by exploring multiple branches of reasoning simultaneously and pruning sub-optimal paths. These frameworks allow agents to navigate multi-agent handshakes where one model's output becomes another's constraints. Success depends on a common denominator for communication. Without a standardized protocol, the orchestration layer collapses into a series of incompatible proprietary prompts.

Static Inference vs. Dynamic Binding

Static inference is a legacy pattern. It assumes a fixed model for a fixed task. Dynamic binding allows distributed AI nodes to select the optimal tool or model based on real-time requirements. If a task requires high-precision reasoning, the system routes the request to a flagship model like Claude 4.6 Opus. If the task is a routine data transformation, it binds to a budget model like DeepSeek V3 to minimize costs. These costs can vary by over 600 times as of May 2026. The orchestration layer maintains system coherence during these shifts. It ensures that the state remains consistent even as the underlying compute node changes. For engineers building these swarms, a dedicated switchboard provides the necessary infrastructure to manage these dynamic handoffs without the overhead of heavy orchestration frameworks.

SYS.03 // Infrastructure Security: Privacy and Zero-Log Requirements

Systems engineering requires a pivot in data governance. The prevailing trend of "durable conversation storage" is a critical vulnerability. When an llm processes sensitive telemetry, persistent logs transform transient reasoning into a permanent liability. A single breach exposes months of proprietary logic and user data. The 0-LOG architecture resolves this by prioritizing the ephemeral runtime state. It ensures that once a task is handed off, the trace is purged. This "Switchboard" model acts as a neutral relay. It connects disparate nodes without inspecting or storing the payload.

Research into the Taxonomy for LLM-Integrated Applications highlights the complexity of managing state across distributed systems. Modern architectures must balance functional utility with strict privacy mandates. Centralized orchestration platforms often demand visibility into every packet. This creates a "surveillance product" masquerading as a tool. A clinical alternative is a dedicated switchboard that facilitates cross-machine connectivity while remaining logically isolated from the data itself. It's about providing the connection, not owning the conversation.

The Risks of Durable Conversation Storage

Persistent logs are a searchable surface for data exfiltration. Under the EU AI Act, which takes effect on August 2, 2026, high-risk systems face mandatory risk management and documentation obligations. Storing every interaction increases the scope of compliance audits and potential fines. 0-LOG systems provide a mechanical advantage. They reduce the data footprint of the agentic swarm to zero post-execution. This simplifies GDPR and CCPA adherence by removing the need for complex data deletion workflows. Privacy is a technical requirement, not an emotional appeal.

Secure Handshakes and Relay Brokers

Connecting agents across different servers introduces network-level risks. Relay brokers obfuscate agent IP addresses and geographical location data. This prevents targeted attacks on specific infrastructure nodes. Secure handshakes ensure that only authorized agents participate in the swarm. Throughput remains high because the switchboard handles the routing logic while the agents handle the compute. This cross-machine capability allows for a modular, distributed network that resists centralized failure. Agents united.

SYS.04 // Engineering Multi-Agent Swarms: Orchestration and Interoperability

Orchestrating a multi-agent swarm requires a departure from the "single-model" paradigm. In this architecture, an llm doesn't act as a standalone solution but as a specialized node within a larger logic network. The orchestration layer serves as the system's nervous system. It manages task delegation and state consistency across disparate environments. Monolithic frameworks often impose rigid structures that lead to vendor lock-in. This restricts your ability to swap models or scale across hybrid cloud and local hardware. Effective systems engineering prioritizes modularity and un-opinionated connectivity to ensure the swarm remains agile.

Cross-machine connectivity is the primary hurdle for distributed agentic systems. Linking a local Llama 4 instance to a remote GPT-5.4 Pro node requires a clinical switchboard that handles the handshake without injecting proprietary overhead. Systems must facilitate high-throughput communication while respecting the 600x cost variance between budget and flagship models. If the orchestration layer is too heavy, the latency of the handoff negates the speed of parallel processing. A lean infrastructure is essential for maintaining the "calm transparency" required in production environments.

Swarm Intelligence and Parallel Processing

Parallel llm nodes solve complex problems faster than a single monolithic model. Instead of forcing one model to manage a massive context window, recursive delegation breaks the task into sub-units. Each specialized agent handles a specific domain. The "handoff" problem occurs when context or intent is lost during these transitions. Solving this requires a common denominator for communication that preserves the logic chain without adding weight to the payload. When agents function as a unified swarm, they can execute multi-step workflows in parallel, significantly reducing the total time to completion for high-dimensional tasks.

Open Standards vs. Proprietary Frameworks

Heavy orchestration platforms often introduce unnecessary latency and complex API settings. They act as "surveillance products" by requiring persistent logs of every agent interaction. This creates a searchable surface for data exfiltration, which is a critical liability under the EU AI Act mandates arriving on August 2, 2026. Minimalist tools offer a principled alternative. You can leverage open-source connectivity to build a transparent infrastructure that stays out of the way. This approach ensures that the quality of your code remains the primary driver of system performance. For engineers ready to deploy, using a dedicated switchboard for AI agents provides the necessary cross-machine linking without the burden of heavy frameworks. Agents united.

Technical Checklist for High-Throughput A2A Communication:

- Implement a low-latency relay broker to minimize handoff delay.

- Standardize the schema for task delegation to ensure cross-model interoperability.

- Utilize ephemeral runtime states to prevent session-to-session data leakage.

- Ensure the connectivity layer supports both local and remote server handshakes.

- Verify zero-log compliance to meet emerging data governance standards.

SYS.05 // A2A Linker: The Dedicated Switchboard for Networked LLMs

A2A Linker serves as the clinical implementation of the switchboard architecture. It provides the necessary infrastructure for llm nodes to communicate across disparate servers without the friction of complex API settings. By removing the requirement for managed hosting or proprietary SDKs, it functions as a quiet enabler for the independent developer. The system facilitates free server connections, allowing for a modular network that scales horizontally. This approach respects the logic of the system while staying out of the way of the developer's primary workflow. It's a neutral switchboard designed to solve the mechanical "how" of agentic connectivity.

The product doesn't sell API access or hosting. It sells the connection. This distinction is critical for engineers who already manage their own models but lack a secure, zero-log method to link them. By positioning the tool as a dedicated switchboard, it avoids the vendor lock-in typical of monolithic AI frameworks. It treats every agent as an autonomous node. It provides a common denominator for communication without imposing an opinionated structure. This allows you to inspect, reason, and delegate tasks across your entire infrastructure with architectural clarity.

0-LOG Connectivity in Practice

Privacy is a technical constraint. A2A Linker implements its 0-LOG policy by treating every interaction as an ephemeral runtime state. No data is written to durable storage. The system functions as a pure relay broker. This architectural choice eliminates the searchable surface for data exfiltration that plagues centralized orchestration platforms. During high-velocity interactions, the switchboard maintains the connection state only as long as required for the handoff. This terminal-style security ensures that the reasoning process remains private and compliant with the EU AI Act's August 2, 2026 enforcement. It's a principled alternative to surveillance-heavy products that demand durable conversation storage for ephemeral tasks.

Deployment and Integration

Establishing an agent-to-agent link requires minimal configuration. You don't need model API access or heavy framework dependencies to connect your nodes. The logic follows a direct handshake protocol. First, initialize the switchboard on your primary node. Second, authorize the cross-machine connection through the secure relay. Third, delegate tasks between agents using the established link. This process bypasses the bloat of giant orchestration platforms and focuses on functional utility. It's a lean, transparent alternative for those who value interoperability and open standards. Establish your secure agent switchboard with A2A Linker. Agents united.

SYS.06 // EXECUTION: DEPLOYING THE AGENTIC SWITCHBOARD

The transition from probabilistic inference to agentic orchestration requires a fundamental change in how you manage runtime state. An llm is no longer a static endpoint; it is a reasoning kernel that demands secure, low-latency interoperability. You've analyzed the mechanics of the Transformer and the shift toward autonomous execution. You understand that durable conversation storage is a liability that compromises enterprise privacy.

Building a resilient swarm depends on your ability to delegate tasks across disparate servers without the overhead of heavy, opinionated frameworks. Minimalist architecture provides the functional utility needed for high-velocity agent handoffs. Use a dedicated switchboard to maintain architectural clarity while ensuring zero-log compliance. This approach prioritizes the quality of your code and the autonomy of your agents over proprietary vendor lock-in.

Secure your agentic infrastructure with A2A Linker's zero-log switchboard. It provides free server connection nodes and dedicated infrastructure for AI agents. Deploy your network with clinical efficiency and maintain total control over your ephemeral runtime states. Agents united.

System Engineering FAQs

What is the primary difference between a standard LLM and an AI Agent?

An llm functions as a high-dimensional statistical compute node, while an AI agent operates as a reasoning kernel with the ability to execute tasks. Standard models generate text based on probability. Agents use tool-calling to inspect environments and delegate sub-tasks to other specialized nodes. This transition from conversation to execution is the defining characteristic of modern agentic systems.

Why is a zero-log architecture important for LLM agent interactions?

Zero-log architecture eliminates the searchable surface for sensitive data exfiltration. Durable conversation storage is a critical liability, especially with the EU AI Act enforcement arriving on August 2, 2026. By prioritizing ephemeral runtime states, 0-LOG systems ensure that proprietary logic and telemetry are purged once the task is complete. This reduces the scope of compliance audits for GDPR and CCPA.

Can LLMs communicate across different servers without a centralized platform?

Yes, agents can communicate across disparate servers using a dedicated switchboard architecture. This avoids the need for heavy, centralized orchestration platforms that often act as surveillance products. Cross-machine connectivity allows a local model instance to hand off logic to a remote flagship node. It maintains system coherence without requiring all agents to reside on the same hardware.

How does A2A Linker ensure privacy during agent-to-agent handoffs?

A2A Linker operates as a neutral relay broker that remains logically isolated from the payload. It facilitates the connection without inspecting or storing the conversation history. Because the system is built on a 0-LOG policy, no data is written to durable storage during the handoff. This quiet enabler approach ensures that privacy is maintained at the architectural level rather than as an optional setting.

What is the role of a 'Switchboard' in a multi-agent system?

The switchboard acts as the orchestration layer's neutral switch. It handles the mechanical handshake between agents, providing a common denominator for communication without the bloat of proprietary SDKs. It ensures that the logic flow remains consistent even when routing tasks between models with a 600x cost variance. This allows engineers to focus on code quality rather than networking complexity.

Do I need to share my model API keys to use a dedicated agent switchboard?

No, you don't need to share model API keys or configure complex API settings. A2A Linker is a connectivity tool, not a managed hosting provider. It stays out of the way of your model's internal logic. You maintain total control over your own API access and model configurations while the switchboard focuses exclusively on facilitating secure agent-to-agent links.

What happens to the data once an agent-to-agent session is closed?

The data is purged immediately upon session termination. The 0-LOG architecture ensures that no trace of the interaction remains in durable storage. This ephemeral state management prevents session-to-session data leakage and simplifies data governance. It provides a principled alternative to durable conversation storage which often becomes a permanent liability for enterprise users.

How do I connect local llm instances to remote agents securely?

Secure connectivity is established via a relay broker that obfuscates IP and location data. This handshake allows local hardware to participate in a multi-agent swarm alongside remote cloud nodes. High-throughput communication is maintained by offloading the routing logic to the switchboard. This setup allows for a modular, distributed network that resists the failures associated with centralized framework dependencies.