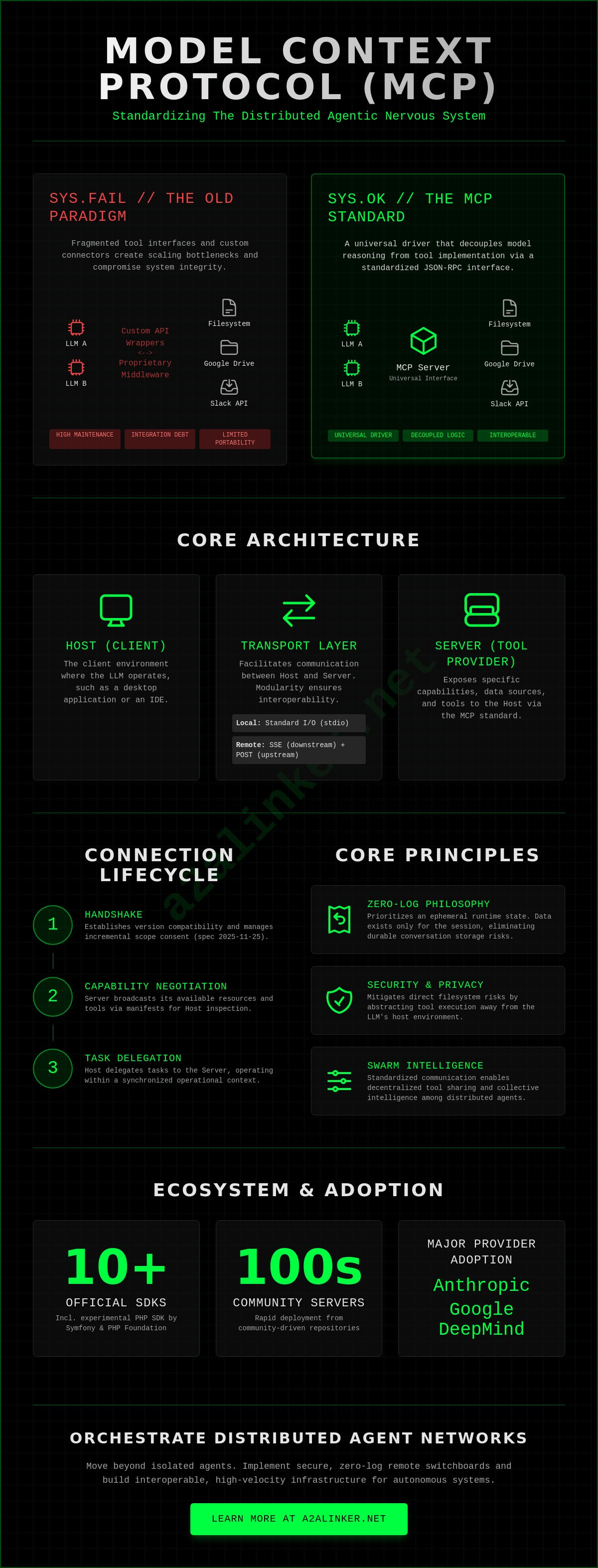

SYS.01 // ENTRY

The current paradigm of granting LLMs direct local file access is an architectural liability. You likely recognize that fragmented tool interfaces and custom connectors are scaling bottlenecks that compromise system integrity. Model Context Protocol (MCP) servers solve this by establishing a universal interface between disparate agents and data sources. Since the release of the 2025-11-25 specification, the ecosystem has expanded to include official SDKs for 10 programming languages. This includes the experimental PHP SDK developed by the Symfony project and the PHP Foundation as of April 2026.

This technical analysis examines the transition from local tool execution to secure, zero-log remote switchboards. You'll learn how to implement MCP servers to standardize data access while maintaining an ephemeral runtime state. We'll map the architecture of the distributed agentic nervous system and define the requirements for a secure, cross-platform workflow. This guide prioritizes functional utility and privacy, moving beyond the limitations of durable conversation storage toward a lean, interoperable orchestration layer. We'll review hosting options like Cloudflare Workers and Fastio to establish a decentralized, high-velocity infrastructure for autonomous agents.

Key Takeaways

- Understand how the Model Context Protocol functions as a universal driver, replacing custom connectors with a standardized JSON-RPC interface for seamless tool integration.

- Analyze the protocol mechanics of MCP servers, including capability negotiation and the transition from local standard I/O to remote SSE transport layers.

- Identify critical security constraints and authentication models required to mitigate filesystem risks and maintain a zero-log, ephemeral runtime state.

- Learn to orchestrate distributed agent networks using secure terminal switchboards to connect local environments to remote, high-velocity server nodes.

- Explore the evolution of multi-agent systems where standardized communication enables collective swarm intelligence and decentralized tool sharing.

SYS.01 // The Model Context Protocol: Standardizing AI Data Access

The Model Context Protocol (MCP) functions as a standardized orchestration layer for agentic workflows. It uses a JSON-RPC 2.0 based protocol to enable Large Language Models (LLMs) to interact with external data and tools without proprietary middleware. The acronym echoes the Master Control Program (MCP) of earlier computing, and the loose parallel is useful: centralized management provides the abstraction that complex system operations need. In the modern AI stack, MCP is the universal driver. Just as USB standardized hardware peripherals, MCP servers standardize the connection between an AI host and specialized data sources.

The system comprises three primary components. First, the Host serves as the client environment, such as a desktop application or an IDE, and runs one MCP client per server connection. Second, the Server acts as the tool provider, exposing specific capabilities or data sources. Third, the Transport layer facilitates communication, typically through standard I/O for local processes or Server-Sent Events (SSE) for remote nodes. This modularity means a single server implementation can serve hosts built on different LLMs. Connections are stateful for the life of a session, but nothing in the protocol requires durable conversation storage, so an implementation can keep its runtime state ephemeral. This aligns with the minimalist architecture of systems like the A2A Linker, where the goal is functional utility without unnecessary data retention.

The Problem of Fragmentation in AI Tooling

Before the 2024-11-05 release of the initial stable specification, the AI landscape suffered from severe integration debt. Developers were forced to write custom API wrappers for every unique agent framework. This created a high maintenance burden and limited the portability of tools. MCP decouples model reasoning from tool implementation. By providing a common denominator for communication, it removes the need to rewrite logic for every new model update. It shifts the focus from how to connect to what the tool can execute. Systems can now inspect available resources and hand off tasks without manual configuration for each disparate agent. It's a clean break from the surveillance-heavy models of the past.

MCP Server Ecosystem Overview

The ecosystem has matured rapidly since late 2024. Current mcp servers range from simple filesystem interfaces to complex integrations with Google Drive, Slack, and PostgreSQL. Adoption isn't theoretical. Anthropic and Google DeepMind have integrated MCP support into their primary interfaces. This trend toward open standards allows independent developers to build specialized tools that are immediately compatible with major AI providers. Community-driven repositories now host hundreds of pre-configured servers. These implementations reduce the time required to deploy functional agentic systems from days to minutes. The focus remains on architectural clarity and the logic of the system rather than marketing hyperbole. Agents united.

SYS.02 // Architectural Deep Dive: MCP Server Mechanics and Lifecycle

The lifecycle of a connection begins with a structured handshake. This phase establishes version compatibility between the host and the server. As of the 2025-11-25 specification, this includes support for incremental scope consent and OpenID Connect Discovery. Once the connection is verified, capability negotiation commences. The server broadcasts its available resources and tools. The host then inspects these manifests to determine how to delegate tasks. This exchange ensures that both entities operate within a synchronized operational context.
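
The handshake described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the field names follow the MCP `initialize` exchange as commonly documented, while the supported-version list and the `example-host` and `example-server` names are assumptions made for the example.

```python
# Versions this illustrative server claims to speak, newest first (assumed list).
SUPPORTED_VERSIONS = ["2025-11-25", "2025-06-18", "2024-11-05"]

def handle_initialize(request):
    """Server side: answer an initialize request with a negotiated version."""
    requested = request["params"]["protocolVersion"]
    # Echo the client's version if supported, otherwise offer our latest.
    version = requested if requested in SUPPORTED_VERSIONS else SUPPORTED_VERSIONS[0]
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {
            "protocolVersion": version,
            "capabilities": {"tools": {"listChanged": True}, "resources": {}},
            "serverInfo": {"name": "example-server", "version": "0.1.0"},
        },
    }

def client_accepts(response, client_versions):
    """Host side: proceed only if the server answered with a version we speak."""
    return response["result"]["protocolVersion"] in client_versions

init = {
    "jsonrpc": "2.0", "id": 1, "method": "initialize",
    "params": {
        "protocolVersion": "2025-11-25",
        "capabilities": {},
        "clientInfo": {"name": "example-host", "version": "0.1.0"},
    },
}
reply = handle_initialize(init)
```

If the server answers with a version the host does not recognize, `client_accepts` returns `False` and the host should close the connection rather than guess at the feature set.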

Communication relies on two primary transport layers. Local implementations use standard I/O (stdio). This is efficient for desktop-based agents. Remote nodes rely on Server-Sent Events (SSE) for downstream communication, paired with HTTP POST for upstream requests. Specification revisions from 2025-03-26 onward fold this pairing into a transport named Streamable HTTP, in which SSE streaming is optional. This dual-channel approach lets MCP servers operate across distributed networks over plain HTTP, without a dedicated bidirectional socket such as a WebSocket. It facilitates an ephemeral runtime state. Data exists only as long as the session requires. This minimizes the risk of durable conversation storage and aligns with a zero-log philosophy.
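
To make the two framings concrete, here is a small sketch of how each channel delimits messages. It assumes newline-delimited JSON on stdio and handles only the `event:` and `data:` fields of SSE. The function names are hypothetical, and a production parser would cover more of the SSE format.

```python
import io
import json

def read_stdio_messages(stream):
    """Yield JSON-RPC messages from a newline-delimited text stream (stdio style)."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)

def parse_sse(text):
    """Parse a Server-Sent Events body into (event, data) pairs."""
    events = []
    event, data = "message", []
    for line in text.splitlines():
        if line == "":                       # a blank line terminates one event
            if data:
                events.append((event, "\n".join(data)))
            event, data = "message", []
        elif line.startswith("event:"):
            event = line[len("event:"):].strip()
        elif line.startswith("data:"):
            value = line[len("data:"):]
            # The SSE format drops a single leading space after the colon.
            data.append(value[1:] if value.startswith(" ") else value)
    return events

stdio = io.StringIO(
    '{"jsonrpc":"2.0","id":1,"method":"ping"}\n'
    '{"jsonrpc":"2.0","method":"notifications/initialized"}\n'
)
sse = 'event: message\ndata: {"jsonrpc":"2.0","id":1,"result":{}}\n\n'

msgs = list(read_stdio_messages(stdio))
events = parse_sse(sse)
```

Neither function writes anything to disk. Each message lives only in the variables that hold it, which is the property the ephemeral runtime state depends on.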

The protocol distinguishes between resources and tools. Resources are read-only data points identified by URIs. They allow the model to reason over static information. Tools are executable functions. They have side effects, such as writing a file or triggering an API call. For developers building lean systems, leveraging an ephemeral connection architecture ensures that these interactions remain private and focused on functional utility.
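
The distinction can be illustrated with a toy registry. Everything here is hypothetical, including the `MiniServer` class, the `notes://all` URI, and the `add_note` tool. The sketch only shows the split between reading by URI and invoking by name.

```python
class MiniServer:
    """Toy registry: resources are read by URI, tools are invoked by name."""

    def __init__(self):
        self._resources = {}   # uri -> zero-argument reader
        self._tools = {}       # name -> callable taking keyword arguments

    def resource(self, uri, reader):
        self._resources[uri] = reader

    def tool(self, name, func):
        self._tools[name] = func

    def read(self, uri):
        # Reading should not change server state.
        return self._resources[uri]()

    def call(self, name, arguments):
        # Calling may change state, so hosts typically gate this on user consent.
        return self._tools[name](**arguments)

notes = ["first note"]
server = MiniServer()
server.resource("notes://all", lambda: list(notes))
server.tool("add_note", lambda text: notes.append(text) or len(notes))

before = server.read("notes://all")                       # no side effect
count = server.call("add_note", {"text": "second note"})  # mutates notes
after = server.read("notes://all")
```

Keeping the two registries separate makes it easy to expose resources freely while requiring explicit approval for anything routed through `call`.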

JSON-RPC and the Transport Layer

JSON-RPC 2.0 provides the mechanical foundation. It's language-agnostic and predictable. This choice eliminates the complexity of proprietary binary protocols. Bidirectional communication is handled via notifications and requests. If a server requires additional context, it can prompt the host for input. Latency in distributed architectures is mitigated by the lightweight nature of JSON payloads. Every byte serves a functional purpose.
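
A minimal sketch of the two message kinds follows. In JSON-RPC 2.0 a request carries an `id` and expects a reply, while a notification omits the `id` and expects none. The helper names and the `read_notes` tool are assumptions for illustration. `tools/call` and `notifications/initialized` are method names used by MCP.

```python
import json
from itertools import count

_ids = count(1)  # monotonically increasing request ids

def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request; the id lets the sender match the reply."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg

def make_notification(method, params=None):
    """Build a JSON-RPC 2.0 notification: no id, so no response is expected."""
    msg = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        msg["params"] = params
    return msg

def is_notification(msg):
    return "method" in msg and "id" not in msg

call = make_request("tools/call", {"name": "read_notes", "arguments": {"topic": "mcp"}})
note = make_notification("notifications/initialized")
wire = json.dumps(call)  # the text that actually crosses the transport
```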

Server Capability Negotiation

Hosts discover capabilities dynamically. When a server updates its toolset, it signals the change to the host. This prevents the need for a full system restart. Version negotiation ensures that hosts running older SDKs don't attempt to execute features introduced in the March 30, 2026, TypeScript SDK update. This backward compatibility is essential for maintaining a stable orchestration layer across a fleet of disparate agents. You can inspect reference implementations in the A2A Linker repository to see these lifecycle events in action.
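
One way a host might react to a toolset change is sketched below. It assumes the server emits the `notifications/tools/list_changed` notification and that the host answers by re-requesting the list. The `ToolCache` class and the `fetch_tools` callable, which stands in for a `tools/list` round trip, are hypothetical.

```python
class ToolCache:
    """Host-side cache that refetches the tool list when the server signals a change."""

    def __init__(self, fetch_tools):
        self._fetch = fetch_tools      # stand-in for a tools/list request
        self.tools = self._fetch()
        self.refreshes = 0

    def on_message(self, msg):
        if msg.get("method") == "notifications/tools/list_changed":
            self.tools = self._fetch()
            self.refreshes += 1

server_tools = [{"name": "search"}]
cache = ToolCache(lambda: [t["name"] for t in server_tools])

server_tools.append({"name": "summarize"})   # the server gains a tool at runtime
cache.on_message({"jsonrpc": "2.0", "method": "notifications/tools/list_changed"})
```

The host never restarts. It simply replaces its cached view, and unrelated messages pass through `on_message` without effect.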

SYS.03 // Security Constraints and the Zero-Log Requirement

Granting an LLM direct access to local filesystems or sensitive APIs creates a critical architectural vulnerability. If a model is compromised via prompt injection or experiences a high-confidence hallucination, it can execute destructive commands or leak sensitive environment variables. The Model Context Protocol addresses this by providing a structured interface, but the responsibility for isolation remains with the implementer. Securely configured MCP servers must operate within restricted environments to prevent lateral movement across the host network. Sandboxing via Docker or similar ephemeral runtimes ensures that the "blast radius" of any single tool is contained.

Authentication within the protocol has evolved to support enterprise requirements. The 2025-11-25 specification update introduced support for OpenID Connect Discovery and incremental scope consent. These features allow for granular permissioning without exposing raw API keys to the model's context window. Secrets should remain internal to the server environment, never traversing the transport layer where they might be captured by host-side logging. This separation of concerns is fundamental to maintaining system integrity during complex agentic handoffs.

The "0-LOG" branding isn't a marketing claim; it's a technical requirement for high-stakes orchestration. Durable storage of agent interactions is a significant liability for enterprise IP. By prioritizing an ephemeral runtime state, systems ensure that data exists only in RAM during the active request-response cycle. Once the task is complete, the state is purged. This approach stands in direct opposition to surveillance-heavy platforms that treat every interaction as training data. It positions the orchestration layer as a neutral, "quiet" switchboard.
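
The purge-on-completion idea can be sketched with a context manager. This is an illustration under stated assumptions: `ephemeral_session` is a hypothetical helper, and clearing a dictionary drops references rather than zeroing memory, so it demonstrates the lifecycle, not a hardened wipe.

```python
from contextlib import contextmanager

@contextmanager
def ephemeral_session():
    """Hold per-request state in memory only and clear it when the block exits."""
    state = {}
    try:
        yield state
    finally:
        state.clear()   # runs on success and on error alike

with ephemeral_session() as session:
    session["prompt"] = "summarize the quarterly report"
    session["result"] = session["prompt"].upper()
    answer_length = len(session["result"])
```

After the `with` block, `session` is an empty dictionary. Only values deliberately copied out, such as `answer_length`, survive the request.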

Privacy Advocacy in Agent Networking

Modern AI orchestration often disguises surveillance as "conversation history." A principled architecture rejects this by defining an ephemeral state where interactions are transient. This protects proprietary code and internal data from being ingested into third-party datasets. When agents delegate tasks to one another, the relay broker facilitates the handoff without inspecting the payload. This ensures that the logic of the system remains transparent while the content remains private. It's a functional necessity for professional systems engineering.

Access Control and Permissions

Permissions must be granular rather than binary. MCP servers should expose only the specific resources required for a defined task. For example, a filesystem server should be restricted to a specific subdirectory with read-only permissions unless write access is explicitly negotiated. Audit trails can be implemented without data retention by logging the occurrence of an action without capturing the content of the data. This allows for compliance verification while adhering to a strict zero-log policy. Agents united by secure, minimalist protocols achieve higher autonomy without sacrificing security.
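
Both ideas, subdirectory confinement and a content-free audit trail, fit in a short sketch. The `ScopedReader` class is hypothetical, the audit list lives in memory only, and `Path.is_relative_to` requires Python 3.9 or later.

```python
import tempfile
import time
from pathlib import Path

class ScopedReader:
    """Read-only file access confined to one root, with a content-free audit trail."""

    def __init__(self, root):
        self.root = Path(root).resolve()
        self.audit = []                      # metadata only, never file content

    def _resolve(self, relative):
        target = (self.root / relative).resolve()
        # resolve() collapses ".." and symlinks, so escapes are caught here.
        if not target.is_relative_to(self.root):
            raise PermissionError("path escapes the permitted root")
        return target

    def read_text(self, relative):
        target = self._resolve(relative)
        text = target.read_text(encoding="utf-8")
        # Record that a read happened and its size, not what was read.
        self.audit.append({"action": "read", "bytes": len(text.encode("utf-8")), "at": time.time()})
        return text

with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "notes.txt").write_text("hello", encoding="utf-8")
    reader = ScopedReader(tmp)
    content = reader.read_text("notes.txt")
    try:
        reader.read_text("../outside.txt")
        escaped = True
    except PermissionError:
        escaped = False
```

The class exposes no write method at all, so write access would have to be added deliberately rather than being available by default.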

SYS.04 // Implementation: Orchestrating Remote MCP Nodes via CLI

Local execution is no longer the ceiling for agentic utility. Orchestrating MCP servers across remote nodes allows for a distributed infrastructure that scales beyond the limitations of a single machine. Connecting Claude Code or other CLI-based hosts to these remote nodes requires a secure terminal switchboard. This setup preserves the ephemeral runtime state while enabling agents to reach specialized tools on separate hardware. By utilizing the A2A Linker, you can establish these connections without the overhead of heavy orchestration frameworks.

Handshakes between agents on disparate machines rely on the JSON-RPC 2.0 standard. The host sends an initialization request; the server responds with a capability manifest. This exchange must be transparent. If the handshake fails, the system should provide a clear error code without logging the contents of the failed request. This maintains the zero-log imperative established in the previous architectural analysis. It's a functional necessity for maintaining system integrity during remote handoffs.
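
A failed handshake can be reported with a standard JSON-RPC 2.0 error code while the offending payload is discarded. The sketch below is one possible policy, not a prescribed one: `safe_error` is a hypothetical helper, and treating any first message other than `initialize` as an invalid request is an assumption made for the example.

```python
import json

PARSE_ERROR = -32700       # standard JSON-RPC 2.0 error codes
INVALID_REQUEST = -32600

def safe_error(raw):
    """Return a JSON-RPC error for a bad handshake without echoing the request body."""
    try:
        msg = json.loads(raw)
    except json.JSONDecodeError:
        return {"jsonrpc": "2.0", "id": None,
                "error": {"code": PARSE_ERROR, "message": "Parse error"}}
    if not isinstance(msg, dict) or msg.get("method") != "initialize":
        req_id = msg.get("id") if isinstance(msg, dict) else None
        return {"jsonrpc": "2.0", "id": req_id,
                "error": {"code": INVALID_REQUEST, "message": "Expected initialize"}}
    return None   # handshake is well-formed; no error to report

bad_json = safe_error("{not json")
wrong_method = safe_error('{"jsonrpc":"2.0","id":7,"method":"tools/call","params":{"secret":"x"}}')
good = safe_error('{"jsonrpc":"2.0","id":1,"method":"initialize"}')
```

The error object carries only a code, a fixed message, and the request id. Nothing from `params` is copied into the response or written anywhere.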

Setting Up a Remote MCP Relay

The deployment process follows a logical three-step progression. First, deploy the server on a secure remote host. This host should be isolated from your primary production environment to minimize risk. Second, configure the A2A switchboard to act as a relay broker. This involves mapping the remote URIs and ensuring the transport layer is set to SSE. Third, initialize the connection via the command line. Use a direct terminal command to verify the handshake. This process ensures that the host can inspect available tools without permanent data retention. You can download the CLI tool to begin this implementation immediately.

Managing Multi-Node Environments

Orchestrating multiple MCP servers from a single terminal requires a modular approach. Each node functions as a standalone capability provider. Cross-machine agent handoffs occur when one agent delegates a task to a tool hosted on a different server. This delegation happens via the relay broker, which manages the routing without inspecting the payload. Troubleshooting connectivity involves inspecting the transport layer status. Use CLI flags to monitor the request-response cycle in real time. This allows for immediate resolution of latency issues without creating a durable log of sensitive interaction data. The logic of the system remains the primary driver of operational success. Agents united.
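
Routing without payload inspection can be sketched as an envelope pattern: the broker reads only the target node name and forwards the bytes untouched. This `Relay` class is a hypothetical illustration and does not describe how A2A Linker or any particular broker is implemented.

```python
class Relay:
    """Toy relay broker: routes by node name and never decodes the payload."""

    def __init__(self):
        self._nodes = {}            # node name -> callable accepting bytes

    def register(self, name, deliver):
        self._nodes[name] = deliver

    def forward(self, target, payload: bytes) -> bool:
        deliver = self._nodes.get(target)
        if deliver is None:
            return False            # unknown node; nothing is stored or retried
        deliver(payload)            # bytes are handed over untouched
        return True

inbox = []
relay = Relay()
relay.register("node-b", inbox.append)

ok = relay.forward("node-b", b'{"jsonrpc":"2.0","id":1,"method":"tools/list"}')
missing = relay.forward("node-z", b"ignored")
```

Because `forward` never parses the bytes, the payload could just as well be encrypted end to end between the two agents.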

SYS.05 // Beyond Isolated Servers: Building Interoperable Agent Networks

The transition from isolated toolsets to a distributed agentic nervous system is underway. Individual MCP servers no longer function as siloed repositories. Instead, they serve as modular nodes within a collective swarm. This evolution enables "Swarm Intelligence," where multiple agents share standardized tools to resolve complex objectives. By decoupling the model from the tool through a common denominator, you eliminate the friction of proprietary integrations. This architecture allows an agent running on a local host to delegate a task to a remote node, which then hands off the result to a specialized sub-agent for final processing.

The Switchboard Concept in A2A Communication

Agents require a neutral meeting ground to collaborate effectively. Traditional heavy orchestration frameworks often introduce significant latency and vendor lock-in. A dedicated switchboard, or relay broker, provides a lightweight alternative. It facilitates the handoff between agents without inspecting the payload or retaining interaction data. This "Agents United" philosophy prioritizes interoperability over proprietary silos. It ensures that your infrastructure remains un-opinionated. The system's role is to stay out of the way, providing only the necessary handshake and capability negotiation to maintain the ephemeral runtime state.

The Future of Distributed AI Infrastructure

Future-proofing your AI stack requires a commitment to open protocols. The convergence of MCP, CLI-based management, and zero-log networking defines the next phase of infrastructure. As of the April 2026 MCP Dev Summit, which attracted 1,200 attendees, the industry is moving toward decentralized hosting. Platforms like Cloudflare Workers and Fastio now offer free tiers for MCP servers, supporting up to 100,000 requests per day and 5,000 credits per month, respectively. This decentralized approach prevents a single point of failure. It mitigates the risks associated with surveillance products by ensuring data never settles in durable storage.

The final technical requirement for true autonomy is the establishment of a secure, zero-log orchestration layer. This layer ensures that communication remains consistent across all 10 supported programming languages, including the recently stabilized SDKs for Go and Rust. The ephemeral switchboard is the bridge between model and action, enabling a secure, distributed logic that respects both privacy and technical efficiency. Agents united.

SYS.06 // EXECUTION: Securing the Orchestration Layer

The standardization of AI connectivity through MCP servers has transitioned from a theoretical proposal to a functional requirement. Since the release of the 2025-11-25 specification, the infrastructure for agentic interoperability has solidified around JSON-RPC 2.0 and remote transport layers. You've identified the necessity of an ephemeral runtime state to mitigate the risks of durable conversation storage. You've also reviewed the mechanics of orchestrating remote nodes via CLI to build a decentralized agentic nervous system.

True autonomy requires a dedicated switchboard that stays out of the way. A2A Linker provides this orchestration layer. It utilizes a zero-log architecture to ensure maximum privacy during complex agent-to-agent interactions. The system is fully compatible with remote server orchestration, functioning as a neutral relay broker for your distributed fleet. It's a lean alternative to heavy, proprietary frameworks that prioritize surveillance over utility.

Establish secure, zero-log connections for your AI agents with A2A Linker. Implementation is the final step toward functional independence. Build with architectural clarity. Agents united.

Frequently Asked Questions

What is an MCP server and how does it differ from a standard API?

An MCP server is a tool provider that uses a standardized JSON-RPC interface to expose specific capabilities to an LLM. Unlike standard APIs that require unique client-side integration logic for every application, MCP servers provide a capability manifest that the model inspects dynamically. This decoupling allows a single tool implementation to serve any compliant host without requiring custom code changes for each disparate agent.

Can I run MCP servers on a remote machine instead of locally?

You can deploy MCP servers on remote hardware by using the Server-Sent Events (SSE) transport layer. While local servers communicate via standard I/O, remote nodes use HTTP POST for upstream requests and SSE for downstream events. A secure relay broker manages this connection, ensuring the agent can access distributed resources across different machines while maintaining network isolation.

Does the Model Context Protocol support secure authentication for enterprise databases?

The protocol provides robust authentication through the 2025-11-25 specification update. It supports OpenID Connect Discovery and incremental scope consent to manage access to enterprise databases and sensitive APIs. These features allow the system to verify permissions and handle secrets internally without injecting raw credentials into the model's ephemeral context window.

What are the privacy implications of using hosted MCP servers?

Hosted servers introduce the risk of durable conversation storage if the provider logs interaction payloads. This can turn a functional tool into a surveillance product that ingests proprietary data for training. To mitigate this, implement a zero-log switchboard that ensures interaction data exists only in an ephemeral runtime state. This protects enterprise IP by purging data immediately after the request-response cycle completes.

How do I connect Claude Code to an MCP server running on a different server?

Connect the Claude Code CLI by specifying the remote server's URI in your local configuration. You should use a secure terminal switchboard to bridge the local environment with the remote SSE endpoint. This setup allows the agent to reason over remote data and execute tools as if they were local, provided the handshake and capability negotiation are successful.

Is there a way to link two different AI agents using MCP?

Standardized tool sharing enables two different AI agents to collaborate within a multi-agent system. One agent delegates a specific task to an MCP server, which then hands off the execution or data retrieval to a second agent. This creates a modular orchestration layer where agents unite to solve complex objectives without being locked into a single proprietary framework.

What does a zero-log policy mean for AI agent interactions?

A zero-log policy ensures that interaction content is purged from the system memory immediately after the task is finished. Data is never written to persistent disk storage or used for secondary purposes like model training. This architectural choice maintains the clinical efficiency of the system while protecting sensitive environment variables and internal logic from being leaked or retained.

Which AI models currently support the Model Context Protocol?

Major AI providers including Anthropic, OpenAI, and Google DeepMind have integrated the protocol into their ecosystems. This adoption was confirmed at the April 2026 MCP Dev Summit, where official SDKs for 10 programming languages were showcased. These models can now natively inspect and interact with any compliant server, regardless of the underlying tool's implementation language.