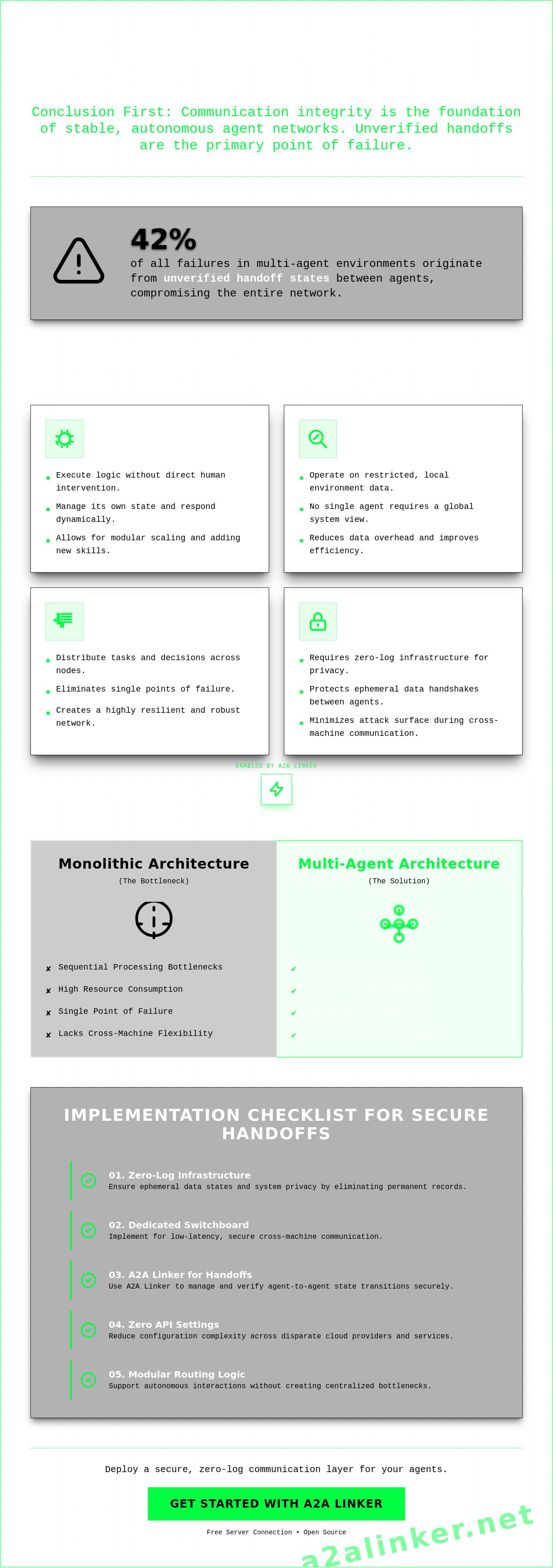

Effective multi-agent systems prioritize communication integrity over individual agent logic. To maintain system stability across distributed environments, architects must implement the following:

- 01. Zero-log infrastructure to ensure ephemeral data states and system privacy.

- 02. Dedicated switchboard for low-latency, cross-machine communication.

- 03. A2A Linker implementation to manage and verify handoff states.

- 04. Zero API settings to reduce configuration complexity across disparate providers.

- 05. Modular routing logic that supports autonomous interactions without centralized bottlenecks.

A 2023 analysis reports that 42% of failures in multi-agent systems originate in unverified handoff states between agents. Distributed environments add network latency and handshake security risks that can compromise system stability. This article delivers a technical blueprint for multi-agent architecture and a checklist for secure agent communication. We examine how to set up a free server connection and use a dedicated switchboard so that agents remain fully functional without permanent record-keeping. A zero-log approach keeps data routing working without leaving stored interaction data behind, preserving the logic and integrity of the autonomous network.

Key Takeaways

- Architectural integrity in a multi-agent environment depends on balancing autonomous entities with decentralized coordination to solve complex problems.

- Structural patterns like graph-based orchestration or hierarchical worker models provide the necessary framework for managing diverse agent roles.

- Implementation success requires rigorous protocol standardization, such as adopting JSON-RPC or MCP, to ensure seamless data exchange.

- Secure identity verification during agent-to-agent handshakes reduces the risk of unauthorized data access in distributed clouds.

- Deployment on a free server connection via the A2A Linker provides a lightweight, zero-log alternative to resource-heavy monitoring tools.

Technical Summary: The Core Requirements of Multi-Agent Systems

Stable operation across distributed environments rests on four technical pillars:

- Autonomy: Agents must execute logic and make decisions without direct human intervention or constant supervisor oversight.

- Local Views: Entities operate based on restricted environment data, ensuring no single node requires a global view of the entire system state.

- Decentralisation: Task execution and decision-making are distributed across multiple nodes to eliminate single points of failure.

- Secure Communication: Interactions require authenticated, encrypted channels running over zero-log infrastructure, so that ephemeral handshake data between distributed agents is never retained.

The primary engineering challenge is the coordination of collective behavior. Individual agent intelligence is a prerequisite, but the system's functional utility is defined by how these entities interact across distributed environments. Modern implementations favor zero-log environments to prevent sensitive data leakage during agent-to-agent interactions. Because nothing is retained, data states stay temporary and the communication layer can operate unobtrusively.

Defining Autonomy in Agentic Networks

Autonomy distinguishes a functional agent from a standard automated script. A script follows a linear, predetermined path. An autonomous agent evaluates its local environment and makes independent decisions to reach a goal. This requires the agent to function without direct human oversight. In a distributed architecture, the agent manages its own state and responds to inputs from other nodes dynamically. This independence allows for modular scaling where new skills can be added to the network without reconfiguring the entire system. Agents rely on local views, so they don't require the full system context to perform their specific function. This reduces the data overhead for each node and improves overall network efficiency.

The Transition from Single-Agent to Multi-Agent Architectures

Monolithic AI systems face significant bottlenecks during sequential processing. These systems are often resource-heavy and lack the flexibility required for cross-machine operations. Transitioning to a multi-agent architecture allows tasks to execute in parallel, which improves speed and reduces the risk of a single point of failure. With proper connectivity, CrewAI agents on different physical servers can split work this way, each handling a specialized task. Developers can implement these links through the A2A Linker to facilitate secure handshakes between distributed components. This approach moves away from rigid, centralized frameworks toward a more fluid, skill-based ecosystem, with zero-log infrastructure keeping agent interactions ephemeral. Without permanent record-keeping, the system presents a smaller attack surface and protects data integrity during cross-machine handshakes.
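To make the sequential-versus-parallel contrast concrete, here is a minimal Python sketch using `asyncio`. The `specialist` coroutine is a hypothetical stand-in for a remote agent call (simulated with `asyncio.sleep`); it is not CrewAI or A2A Linker code. With three independent 0.2 s sub-tasks, the sequential runner should take roughly 0.6 s and the parallel one roughly 0.2 s, because the waits overlap.

```python
import asyncio
import time


async def specialist(name: str, delay: float) -> str:
    # Hypothetical stand-in for a specialised agent; asyncio.sleep simulates
    # I/O-bound work such as a model call or a request to a remote node.
    await asyncio.sleep(delay)
    return f"{name}: done"


async def run_sequential(jobs: list[tuple[str, float]]) -> list[str]:
    # Monolithic style: each step waits for the previous one to finish.
    return [await specialist(name, delay) for name, delay in jobs]


async def run_parallel(jobs: list[tuple[str, float]]) -> list[str]:
    # Multi-agent style: independent sub-tasks run concurrently;
    # gather() returns results in the order the coroutines were passed.
    return list(await asyncio.gather(*(specialist(n, d) for n, d in jobs)))


if __name__ == "__main__":
    work = [("research", 0.2), ("summarise", 0.2), ("review", 0.2)]
    for runner in (run_sequential, run_parallel):
        start = time.perf_counter()
        results = asyncio.run(runner(work))
        print(runner.__name__, results, f"{time.perf_counter() - start:.2f}s")
```

The speedup only applies to sub-tasks that are genuinely independent; steps that consume each other's output still have to run in order.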

Structural Patterns for Multi-Agent Orchestration

Successful orchestration in a multi-agent environment depends on selecting a structural pattern that aligns with the system's operational goals. Architects must evaluate the following patterns before deployment:

- Centralised Networks: Utilize a single controller to delegate tasks and aggregate results, offering high predictability but creating potential bottlenecks.

- Decentralised Swarms: Rely on peer-to-peer interactions without a master node, ensuring high resilience against individual node failure.

- Hierarchical Structures: Organize agents into functional tiers to mirror complex organizational flows and command-and-control requirements.

- Dynamic Binding: Form temporary agent coalitions to execute specific sub-tasks, then dissolve the connection once the sub-task completes.
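Dynamic binding can be illustrated with a small sketch built on stated assumptions: a hypothetical skill registry (agent name mapped to a set of skills), a greedy selection that picks whichever agent covers the most still-missing skills, and a context manager that dissolves the group when the sub-task ends. Greedy covering is one simple heuristic, not necessarily optimal, and it is not a method described in the source.

```python
from contextlib import contextmanager
from typing import Iterator


@contextmanager
def coalition(registry: dict[str, set[str]], required: set[str]) -> Iterator[list[str]]:
    """Greedily bind agents whose skills cover `required`, then dissolve the group."""
    members: list[str] = []
    missing = set(required)
    available = dict(registry)  # shallow copy: the shared registry is never modified
    while missing:
        # Pick the agent that covers the most still-missing skills.
        best = max(available, key=lambda a: len(available[a] & missing), default=None)
        if best is None or not available[best] & missing:
            raise LookupError(f"no agent offers: {sorted(missing)}")
        members.append(best)
        missing -= available.pop(best)
    try:
        yield members
    finally:
        members.clear()  # dissolve: the member list is emptied when the sub-task ends


if __name__ == "__main__":
    skills = {"scraper": {"fetch"}, "analyst": {"stats", "plot"}, "writer": {"draft"}}
    with coalition(skills, {"fetch", "stats"}) as members:
        print("bound:", members)
```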

Orchestration logic determines how entities handle task distribution and conflict resolution. Recent research proposing a unified architectural framework argues that the technical composition of these systems must balance centralization against the need for autonomous execution. Centralized patterns are often easier to debug because a single orchestrator manages the state. Decentralized patterns, by contrast, prioritize system survival through redundancy, allowing continuous operation even when individual nodes experience downtime or network partitions. Choosing the right pattern is a prerequisite for achieving functional utility in distributed environments.

Hierarchical vs. Coalition Structures

Hierarchical multi-agent systems provide clear command paths and audit trails. These are effective in regulated environments like supply chain management where every handoff must be documented. In contrast, coalition structures allow agents to join and leave groups dynamically based on task requirements. This ad-hoc problem solving is ideal for real-time trading or load balancing where the system must respond to volatile inputs. While hierarchies offer stability, coalitions provide the speed necessary for high-velocity execution. Both require a secure handshake mechanism to verify agent identity before task delegation occurs.

Swarm Intelligence and Decentralised Networks

Swarm intelligence emerges when agents follow simple local rules to achieve complex collective behavior. No single agent understands the entire system goal, yet the network solves problems through iterative peer-to-peer feedback. This decentralization removes the master node as a single point of failure: if a node drops, the remaining agents continue their logic without interruption. Implementing these patterns across distributed servers requires a secure, zero-log network layer to prevent data leakage during peer interactions. For more detail on secure networking, see the A2A Linker Pillar page. Developers building cross-machine swarms can use a dedicated switchboard configuration to manage these interactions without adding unnecessary ecosystem dependencies.
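The "simple local rules" idea can be shown with average consensus, a classic decentralised algorithm swapped in here purely for illustration. Each agent on a ring sees only its two neighbours and repeatedly replaces its value with the mean of itself and them. No agent ever reads the whole list, yet all values converge to the global average. The ring topology and the function names are assumptions.

```python
def consensus_step(values: list[float]) -> list[float]:
    # Each agent reads only its two ring neighbours (its local view) and moves
    # to the mean of itself and those neighbours.
    n = len(values)
    return [
        (values[(i - 1) % n] + values[i] + values[(i + 1) % n]) / 3
        for i in range(n)
    ]


def run_swarm(values: list[float], rounds: int) -> list[float]:
    # Repeating the local rule drives every agent toward the global average,
    # even though no agent ever sees the whole list.
    for _ in range(rounds):
        values = consensus_step(values)
    return values


if __name__ == "__main__":
    print(run_swarm([0.0, 0.0, 0.0, 12.0], rounds=50))  # every value approaches 3.0
```

Dropping an element mid-run and continuing still converges, now to the average of the remaining agents, which mirrors the resilience property described above.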

The Multi-Agent Implementation Checklist

Successful implementation of any multi-agent system depends on the verification of five technical benchmarks. Architects must validate the following requirements before moving from development to production:

- Protocol Standardisation: Standardize all communication on compatible formats like JSON-RPC or the Model Context Protocol (MCP) to prevent data corruption during handshakes.

- Identity Verification: Require secure handshakes to verify agent authenticity before any data exchange occurs between nodes.

- Latency Benchmarking: Measure and minimize round-trip times between distributed nodes to identify network bottlenecks that could stall execution.

- Resource Isolation: Sandbox each agent environment to prevent cross-contamination or unauthorized file system access across the network.

- Zero-Log Compliance: Confirm that the intermediary switchboard operates without recording interaction data to maintain privacy and security.
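For the latency benchmarking item, a minimal Python sketch times repeated round trips and reports the median, 95th percentile, and maximum in milliseconds. `send` is a placeholder for whatever request/response call reaches a remote node in your stack; the function name and the choice of percentiles are assumptions, not a prescribed benchmark.

```python
import statistics
import time
from typing import Callable


def benchmark_round_trip(send: Callable[[], None], samples: int = 50) -> dict[str, float]:
    """Time `samples` calls of `send` and return latency statistics in milliseconds."""
    if samples < 2:
        raise ValueError("need at least two samples for percentile estimates")
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        send()  # one request/response exchange with a remote node
        timings.append((time.perf_counter() - start) * 1000)
    cuts = statistics.quantiles(timings, n=20)  # 19 cut points; index 18 is p95
    return {
        "median_ms": statistics.median(timings),
        "p95_ms": cuts[18],
        "max_ms": max(timings),
    }


if __name__ == "__main__":
    # Placeholder round trip: replace with a real call to a remote agent.
    print(benchmark_round_trip(lambda: time.sleep(0.002)))
```

Tracking the 95th percentile alongside the median helps surface intermittent stalls that an average would hide.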

A technical audit against these benchmarks reduces the risk of system failure. Because unverified handoff states account for a large share of MAS failures (42% in the 2023 analysis cited earlier), addressing these gaps early keeps the system lean and secure. Avoid monolithic frameworks that force proprietary standards; instead, prioritize interoperability and open protocols to maintain architectural flexibility. This disciplined approach to system design keeps the infrastructure in the background as a quiet enabler for autonomous logic.

Communication and Interoperability Standards

Interoperability is the core of functional utility in distributed networks. Verify that agents support the Model Context Protocol (MCP) to ensure consistency when using external tools. This standardization allows cross-platform compatibility between different LLM providers without heavy reconfiguration. Define clear success and failure states for every communication handshake. If a node fails to acknowledge a request within a defined timeout, the system must trigger predefined fallback logic. This prevents one stalled node from causing cascading failures across the network. Use the A2A Linker to manage these connections with zero API settings, simplifying the integration of cross-machine skills.
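Timeout-plus-fallback handling can be sketched with `asyncio.wait_for`, which raises `asyncio.TimeoutError` when the awaited call exceeds its deadline. The `request` and `fallback` callables, the demo nodes, and the "success"/"fallback" state labels are hypothetical; a real system would plug in its own transport and recovery logic.

```python
import asyncio
from typing import Awaitable, Callable


async def call_with_fallback(
    request: Callable[[], Awaitable[str]],
    fallback: Callable[[], Awaitable[str]],
    timeout_s: float,
) -> tuple[str, str]:
    """Return (state, result): "success" if the node answered in time, else "fallback"."""
    try:
        result = await asyncio.wait_for(request(), timeout=timeout_s)
        return "success", result
    except (asyncio.TimeoutError, ConnectionError):
        # No acknowledgement within the threshold (or the link failed):
        # run the predefined fallback instead of blocking downstream agents.
        result = await fallback()
        return "fallback", result


async def slow_node() -> str:
    await asyncio.sleep(1.0)  # simulates a stalled remote agent
    return "slow answer"


async def fast_node() -> str:
    await asyncio.sleep(0.01)
    return "fast answer"


async def local_default() -> str:
    return "cached default"


if __name__ == "__main__":
    print(asyncio.run(call_with_fallback(fast_node, local_default, timeout_s=0.5)))
    print(asyncio.run(call_with_fallback(slow_node, local_default, timeout_s=0.5)))
```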

Security and Privacy Benchmarks

Privacy is an architectural requirement, not an optional feature. Implement end-to-end encryption for all agent-to-agent data streams to prevent interception. Audit the entire network infrastructure to identify and eliminate potential data logging points. Traditional monitoring tools often record sensitive interaction history, creating a significant security liability. A multi-agent system should use an AI Agents Dedicated Switchboard that adheres to a strict zero-log policy, so that interactions are ephemeral and vanish after execution. Establish granular access controls for all remote server connections, and limit agent permissions to the specific resources required for their assigned task. This minimalist security posture protects the integrity of the distributed environment while allowing agents to operate autonomously.
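Granular, deny-by-default access control might look like the following sketch, where each agent is granted only explicit (action, resource) pairs. `AccessPolicy`, `AgentGrant`, and the permission strings are illustrative names, not part of A2A Linker or any library.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentGrant:
    agent_id: str
    # Only the (action, resource) pairs this agent needs for its assigned task.
    allowed: frozenset[tuple[str, str]] = field(default_factory=frozenset)


class AccessPolicy:
    """Hypothetical least-privilege policy keyed by agent id."""

    def __init__(self) -> None:
        self._grants: dict[str, AgentGrant] = {}

    def grant(self, agent_id: str, *permissions: tuple[str, str]) -> None:
        # Replaces any earlier grant, so permissions never accumulate silently.
        self._grants[agent_id] = AgentGrant(agent_id, frozenset(permissions))

    def check(self, agent_id: str, action: str, resource: str) -> bool:
        # Deny by default: unknown agents and unlisted permissions are refused.
        grant = self._grants.get(agent_id)
        return grant is not None and (action, resource) in grant.allowed


if __name__ == "__main__":
    policy = AccessPolicy()
    policy.grant("summariser", ("read", "reports/q3"))
    print(policy.check("summariser", "read", "reports/q3"))
    print(policy.check("summariser", "write", "reports/q3"))
```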

Infrastructure Requirements for Distributed Agents

Distributed multi-agent systems require a physical networking layer that supports cross-machine handshakes without the overhead of standard SSH. To maintain architectural clarity and security, the infrastructure must include the following:

- Cross-machine connectivity via a transparent, zero-log intermediary.

- A dedicated switchboard to manage agent discovery across disparate cloud clusters.

- Terminal-based routing to minimize latency and reduce the network attack surface.

- Free server connections to enable rapid scaling without infrastructure bloat.

- Zero API settings to simplify the deployment of specialized skills across nodes.

Standard networking protocols often fail to meet the requirements of high-velocity agentic workflows. SSH and heavy VPN tunnels introduce latency that stalls real-time orchestration. In a distributed environment, agents reside on different physical servers or varied cloud providers. Establishing peer-to-peer links across these boundaries increases the complexity of firewall management. A dedicated switchboard resolves these limitations by providing a secure handshake layer. This approach maintains the separation between agent logic and communication transport, ensuring the infrastructure remains unobtrusive.
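The separation between agent logic and transport can be sketched as an in-memory switchboard: agents register an inbox, and the switchboard forwards each message to the named recipient without writing it anywhere. This is a single-process illustration built on `asyncio.Queue`, with hypothetical `register`/`route` methods; it is not how A2A Linker is implemented, and a cross-machine version would also need a network transport and encryption.

```python
import asyncio


class Switchboard:
    """Hypothetical in-memory relay: forwards messages, persists nothing."""

    def __init__(self) -> None:
        self._inboxes: dict[str, asyncio.Queue] = {}

    def register(self, agent_id: str) -> asyncio.Queue:
        # Each agent gets one inbox; the agent's own logic stays outside the relay.
        inbox: asyncio.Queue = asyncio.Queue()
        self._inboxes[agent_id] = inbox
        return inbox

    def unregister(self, agent_id: str) -> None:
        # Dropping the inbox also discards any undelivered messages.
        self._inboxes.pop(agent_id, None)

    async def route(self, sender: str, recipient: str, payload: dict) -> bool:
        inbox = self._inboxes.get(recipient)
        if inbox is None:
            return False  # unknown recipient: refuse instead of buffering
        # The message lives only in the recipient's in-memory inbox until read;
        # nothing is written to disk or to a log.
        await inbox.put({"from": sender, "payload": payload})
        return True


async def demo() -> dict:
    board = Switchboard()
    inbox = board.register("reviewer")
    await board.route("planner", "reviewer", {"task": "check draft"})
    return await inbox.get()


if __name__ == "__main__":
    print(asyncio.run(demo()))
```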

The Role of a Dedicated AI Switchboard

Agents require a specialized hub to establish connections and share skills across the network. Without a central switchboard, discovery between distributed nodes becomes an O(n²) problem, because every pair of agents needs its own link: n(n-1)/2 links for n agents. An AI Agents Dedicated Switchboard provides a single point of entry for all network participants. This infrastructure must use a zero-log architecture so that no interaction data is recorded or stored, which is critical for enterprise environments where data sovereignty is a prerequisite. Developers can explore the A2A Linker GitHub for open-source examples of how to implement these connectivity patterns without depending on a managed hosting provider.
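The O(n²) claim is simple arithmetic: a full peer-to-peer mesh needs one link per pair of agents, n(n-1)/2, while a switchboard (star) topology needs one link per agent. A tiny Python helper makes the gap visible; the function names are illustrative.

```python
def mesh_links(n: int) -> int:
    # Full peer-to-peer mesh: one link for every unordered pair of agents.
    return n * (n - 1) // 2


def hub_links(n: int) -> int:
    # Switchboard (star) topology: each agent keeps a single link to the hub.
    return n


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(f"{n:>5} agents: mesh={mesh_links(n):>7}  hub={hub_links(n):>5}")
```

At 1,000 agents the mesh needs 499,500 links against 1,000 for the hub. The trade-off is that the hub itself becomes a component that must stay available.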

Securing Remote Server Connections

Relying on standard API keys across multiple untrusted agents introduces significant risk. If one node is compromised, the entire credential set is exposed. Terminal-based switchboards reduce this attack surface by utilizing secure, ephemeral links rather than persistent API credentials. This approach ensures that the intermediary remains a transparent pipe rather than a data repository. Free server connections facilitate rapid scaling by allowing developers to link agents across remote servers without incurring infrastructure overhead. This minimalist design prioritizes functional utility and privacy, ensuring that the tool operates unobtrusively within the existing stack. To begin building your distributed network, configure your dedicated switchboard today.
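One common way to replace persistent API keys with short-lived credentials is an HMAC-signed token that embeds an expiry time. In this sketch only the issuing switchboard holds `secret`; agents carry tokens, so a compromised node leaks a credential that expires after `ttl_s` seconds. The token format and the function names are assumptions, and the sketch does not prevent replay within the TTL (a real design would also track nonces or bind tokens to a channel).

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional


def _sign(secret: bytes, message: str) -> str:
    return hmac.new(secret, message.encode(), hashlib.sha256).hexdigest()


def issue_token(secret: bytes, agent_id: str, ttl_s: int = 60,
                now: Optional[float] = None) -> str:
    """Hypothetical token format: agent_id:expiry:nonce:signature."""
    issued = time.time() if now is None else now
    expires = int(issued) + ttl_s
    nonce = secrets.token_hex(8)  # makes each token unique
    body = f"{agent_id}:{expires}:{nonce}"
    return f"{body}:{_sign(secret, body)}"


def verify_token(secret: bytes, token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the agent id if the token is authentic and unexpired, else None."""
    try:
        # rsplit from the right so agent ids containing ':' still parse.
        agent_id, expires, nonce, sig = token.rsplit(":", 3)
        expiry = int(expires)
    except ValueError:
        return None
    body = f"{agent_id}:{expires}:{nonce}"
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(sig, _sign(secret, body)):
        return None
    current = time.time() if now is None else now
    return agent_id if current < expiry else None


if __name__ == "__main__":
    key = secrets.token_bytes(32)
    token = issue_token(key, "agent-a", ttl_s=30)
    print(verify_token(key, token))
```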

Optimizing Interoperability with A2A Linker

Interoperability within a multi-agent system requires a neutral transport layer that doesn't compromise data sovereignty. A2A Linker provides a minimal solution for cross-machine collaboration through the following technical features:

- Minimalist Switchboard: Facilitates secure agent-to-agent linking without the bulk of managed hosting platforms.

- Zero-Log Architecture: Guarantees that interaction data remains ephemeral and is never recorded or stored.

- Zero API Settings: Removes the need for complex configuration, allowing for immediate deployment of specialized skills.

- Framework-Agnostic Connectivity: Enables communication between agents built on disparate SDKs, including Google ADK, OpenAI, and CrewAI.

Traditional debugging tools often prioritize visibility at the expense of privacy. Platforms like LangSmith provide monitoring by recording traces of each node execution, which can create a persistent record of sensitive agent logic. A2A Linker operates as a transparent intermediary, focusing on the integrity of the system rather than data-intensive monitoring. This approach respects the developer's need for privacy while providing the necessary infrastructure for complex, autonomous interactions across distributed environments. It functions as a quiet enabler, allowing entities to solve distributed problems without adding unnecessary ecosystem dependencies.

Implementing Zero-Log Connectivity

Linking agents through the A2A Linker switchboard involves establishing a secure handshake that leaves no record once it completes. This zero-log approach ensures that proprietary logic and sensitive inputs remain within the local execution environment. Zero API settings bring a significant technical benefit: architects can scale multi-agent networks without managing a growing list of transport-layer credentials. For detailed configuration, refer to the A2A Linker Setup Guide, which provides the syntax for establishing cross-machine connections in a minimalist developer environment.

Scaling Distributed AI Swarms

A2A Linker handles high-throughput agent interactions by optimizing the routing layer for speed and functional utility. Because the system is framework-agnostic, it manages diverse agent fleets where different nodes utilize different underlying models or protocols. This flexibility prevents vendor lock-in and allows for the integration of new skills as they become available. Developers can utilize free server connections to test scalability across remote cloud providers without incurring unnecessary overhead. This modularity ensures the system can grow into a complex swarm while remaining lean and efficient. Check the latest implementation examples on GitHub to see how these connectivity patterns function in production-grade environments. The focus remains on the tool's ability to operate unobtrusively, prioritizing the logic and integrity of the system above all else.

Deploying Resilient Agentic Infrastructure

- Standardize on framework-agnostic protocols to ensure cross-machine interoperability across diverse agent fleets.

- Prioritize zero-log transport layers to mitigate security risks during ephemeral data handshakes.

- Utilize a dedicated switchboard to manage discovery and execution without increasing ecosystem bloat.

Building a production-ready multi-agent system requires moving beyond theoretical frameworks toward robust networking standards. Integrity is maintained when the communication layer operates as a transparent intermediary. With a dedicated switchboard, architects can work around the technical limitations of distributed environments without sacrificing privacy, while data states remain temporary and the infrastructure stays out of the way.

Establish secure, zero-log connections for your AI agents with A2A Linker. Our platform provides an AI Agents Dedicated Switchboard and free server connection capabilities designed for the minimalist architect. Build with confidence, knowing your system's integrity is protected by a principled alternative to data-intensive monitoring. Clean logic and secure handshakes are the foundation of autonomous success.

Frequently Asked Questions

What is the difference between a single-agent and a multi-agent system?

A single-agent system operates through central logic and sequential execution, which creates bottlenecks in complex environments. In contrast, a multi-agent system utilizes distributed autonomous entities to execute tasks in parallel. This modular approach allows the network to solve distributed problems that exceed the capacity of monolithic AI systems. Each node manages its own state, improving overall system resilience and scalability across different servers.

How do agents communicate in a multi-agent system?

Agents communicate through standardized protocols like JSON-RPC or the Linux Foundation's A2A standard. These protocols define how data is structured and exchanged during ephemeral handshakes. Effective communication requires a dedicated transport layer to route messages between nodes without compromising the underlying agent logic. Using a neutral intermediary ensures that interactions remain consistent regardless of the specific frameworks used to build individual agents.
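A JSON-RPC 2.0 exchange can be sketched with Python's standard `json` module. The request and response fields (`jsonrpc`, `method`, `params`, `id`, `result`, `error`) follow the JSON-RPC 2.0 specification; the method name `tasks/delegate` is hypothetical and is not taken from the A2A or MCP specifications.

```python
import itertools
import json
from typing import Any

_ids = itertools.count(1)  # monotonically increasing request ids


def make_request(method: str, params: dict[str, Any]) -> str:
    # JSON-RPC 2.0 request object: the response must echo "id" so the caller
    # can match it to the request it sent.
    return json.dumps({"jsonrpc": "2.0", "method": method, "params": params, "id": next(_ids)})


def parse_response(raw: str, expected_id: int) -> Any:
    msg = json.loads(raw)
    if msg.get("jsonrpc") != "2.0" or msg.get("id") != expected_id:
        raise ValueError("malformed or mismatched JSON-RPC response")
    if "error" in msg:
        # JSON-RPC error objects carry an integer "code" and a "message" string.
        raise RuntimeError(f"{msg['error']['code']}: {msg['error']['message']}")
    return msg["result"]


if __name__ == "__main__":
    request = make_request("tasks/delegate", {"goal": "summarise report"})
    print(request)
```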

Why is a zero-log policy important for AI agent networking?

A zero-log policy is a prerequisite for maintaining data sovereignty in enterprise environments. Standard monitoring tools often record interaction history, which creates a massive security liability if sensitive logic or proprietary data is leaked. By utilizing a zero-log architecture, the system ensures that all agent interactions are temporary. Data vanishes upon completion of a handshake, significantly reducing the network's attack surface and protecting user privacy.

What are the main challenges in scaling a multi-agent system?

Scaling a multi-agent network introduces challenges related to network latency and the O(n²) complexity of peer-to-peer discovery. As more nodes are added, the overhead of managing handshakes between distributed servers can stall execution. Architects must implement a dedicated switchboard to manage these connections efficiently. Without dedicated routing infrastructure, the system risks cascading failures caused by unverified handoff states or saturated communication channels.

Can agents from different frameworks work together?

Agents built on different frameworks can work together if the system utilizes framework-agnostic connectivity. Protocols like the Model Context Protocol (MCP) and A2A facilitate communication between diverse SDKs like CrewAI, LangChain, or Google ADK. A transparent intermediary like the A2A Linker serves as the switchboard for these interactions. This allows developers to integrate specialized skills across a fleet without being locked into a single proprietary ecosystem.

How does an AI switchboard improve agent-to-agent security?

An AI switchboard improves security by replacing persistent API keys with ephemeral, terminal-based links. Standard API keys shared across multiple untrusted agents create significant exposure if a single node is compromised. A dedicated switchboard isolates the connection layer, ensuring that agents only access the specific resources required for their task. This minimalist security posture protects the integrity of the distributed environment while allowing for autonomous execution without permanent record-keeping.

What role does the Model Context Protocol (MCP) play in MAS?

The Model Context Protocol (MCP) serves as an open standard for consistent tool use across different agent architectures. As of May 2026, MCP is implemented on over 10,000 enterprise servers to facilitate interoperability. It lets any MCP-compatible agent interact with external tools through a unified syntax. This standardization reduces the need for custom integration logic and allows for more rapid deployment of complex agentic workflows while maintaining system stability.

Is it possible to connect AI agents across different physical servers?

It's possible to connect agents across different physical servers using cross-machine connectivity tools. Distributed agents often reside in varied cloud environments or local hardware clusters. Implementation requires a transport layer that supports secure handshakes across these boundaries without the latency of heavy VPN tunnels. Using a free server connection through a dedicated switchboard allows for rapid scaling of distributed swarms while maintaining functional utility and privacy.