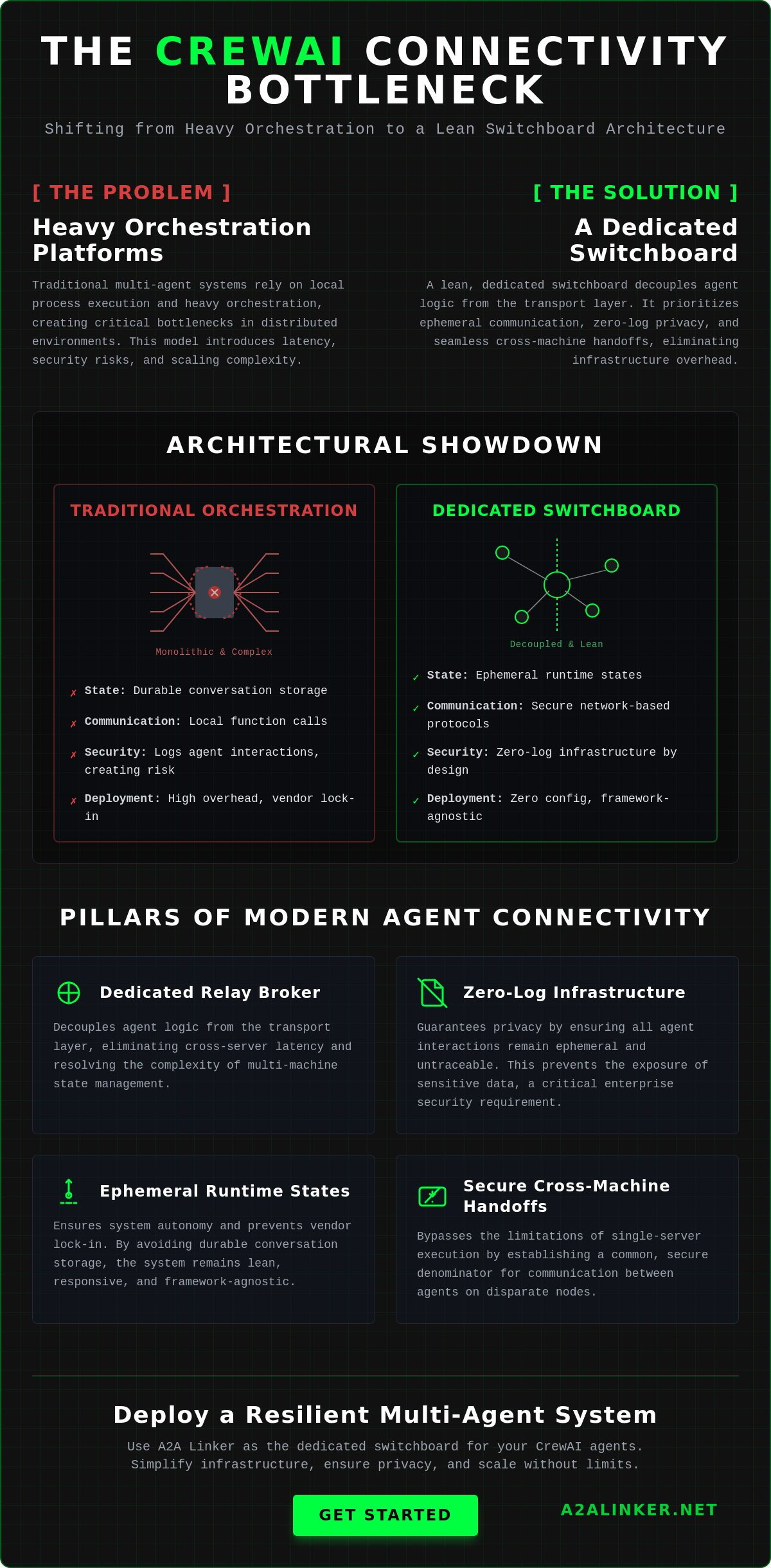

What if the primary bottleneck in your multi-agent system isn't the model's reasoning speed, but the weight of the infrastructure connecting it? Efficient CrewAI agent connectivity requires a shift from heavy orchestration platforms toward a dedicated switchboard architecture. This model prioritizes functional utility and privacy by treating agent links as a lean, ephemeral communication layer rather than a durable storage problem.

To achieve high-performance, cross-machine collaboration, architects should prioritize the following outcomes:

- Deployment of a dedicated relay broker to minimize cross-server latency.

- Implementation of zero-log infrastructure to prevent the exposure of sensitive interaction data.

- Utilization of ephemeral runtime states that ensure system autonomy without vendor lock-in.

- Establishment of secure, cross-machine handoffs that bypass the limitations of single-server execution.

Technical leads often struggle with the security risks of logging agent interactions and the difficulty of managing state across disparate machines. You'll learn how to establish secure, cross-server communication for CrewAI agents using a dedicated switchboard architecture. This guide provides a clinical blueprint for building agent-to-agent links and explains how to implement a zero-log infrastructure that respects the hacker ethos of technical efficiency and privacy.

Key Takeaways

- Distributed system scaling requires a transition from local process execution to a dedicated switchboard architecture to resolve cross-machine handoff complexity.

- Establish robust CrewAI agent connectivity by moving beyond local function calls toward secure, network-based communication protocols.

- Mitigate enterprise privacy risks by implementing a zero-log architecture that ensures all agent interactions remain ephemeral and untraceable.

- Utilize a specialized relay broker to manage agent-to-agent links across different server environments without the overhead of heavy orchestration frameworks.

- Simplify infrastructure deployment by connecting nodes through a dedicated switchboard that requires zero manual API settings or durable conversation storage.

Conclusion: Connectivity is the Primary Bottleneck in Distributed CrewAI Systems

Distributed multi-agent scaling fails when it relies on local process execution. Efficient CrewAI agent connectivity requires an architecture that moves beyond the constraints of a single context window or machine. To build a resilient system, architects must prioritize these foundational shifts:

- Transition from local to network-based handoffs: Local memory is insufficient for cross-server collaboration.

- Implement zero-log infrastructure: Privacy is only guaranteed when interaction data remains ephemeral and unrecorded.

- Utilize a dedicated switchboard: Decoupling agent logic from the transport layer resolves the complexity of multi-machine state management.

- Maintain framework-agnostic links: Neutral connectivity prevents vendor lock-in and allows for heterogeneous model integration.

Current documentation for frameworks like CrewAI often focuses on local agent attributes and tools. While this works for experimentation, production environments demand a dedicated network layer. A robust multi-agent system (MAS) requires agents to communicate across disparate servers without increasing orchestration overhead. When connectivity is treated as a secondary concern, latency spikes and security risks become far more likely.

The Core Requirement for Agent Interoperability

Establishing a common denominator for communication is essential. Agents residing on different nodes shouldn't need to know the specific network configurations of their peers. By delegating connectivity to a specialized relay broker, you reduce the orchestration layer's weight. This approach prioritizes ephemeral runtime states over durable conversation storage. It ensures that the system remains lean and responsive. You can find technical specifications for implementing these links in the A2A Linker documentation. This model allows agents to inspect, reason, and delegate tasks without the burden of managing underlying socket connections.
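The idea that agents address peers by name rather than by network location can be sketched in a few lines. This is a minimal in-memory stand-in, not the A2A Linker API: the `RelayBroker` class, its `register` and `send` methods, and the `researcher` handler are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# A handler stands in for a remote agent: bytes in, bytes out.
Handler = Callable[[bytes], bytes]


@dataclass
class RelayBroker:
    """Hypothetical in-memory relay: routes by logical agent name only."""

    _routes: Dict[str, Handler] = field(default_factory=dict)

    def register(self, agent_name: str, handler: Handler) -> None:
        # The caller never learns where the handler actually runs.
        self._routes[agent_name] = handler

    def send(self, target: str, payload: bytes) -> bytes:
        try:
            handler = self._routes[target]
        except KeyError:
            raise LookupError(f"no agent registered as {target!r}") from None
        return handler(payload)


def researcher(payload: bytes) -> bytes:
    # Placeholder for whatever the remote agent would really do.
    return b"summary of: " + payload


broker = RelayBroker()
broker.register("researcher", researcher)
reply = broker.send("researcher", b"quarterly report")
```

In a real deployment the handler would sit behind a network connection. The point of the sketch is only that the sender knows a name, not a host or port.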

Defining the Switchboard Model

The switchboard acts as a neutral hub for agent handshakes. It functions as a quiet enabler that stays out of the way of the agent's logic. By decoupling the reasoning process from the transport layer, you ensure that CrewAI agent connectivity remains stable even as you scale from two agents to dozens across multiple machines. This model facilitates secure handoffs without requiring direct API access to the underlying models. It treats every interaction as a discrete, authenticated event. This architectural clarity allows developers to focus on agent skills and tasks rather than debugging cross-machine communication failures or managing complex SDK dependencies.
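One way to read "discrete, authenticated event" is a message envelope that carries its own signature. The sketch below uses an HMAC over a canonical JSON form. It assumes both agents share a secret out of band, and the envelope fields (`sender`, `target`, `body`, `sig`) are illustrative rather than anything A2A Linker defines.

```python
import hashlib
import hmac
import json


def _canonical(fields: dict) -> bytes:
    # Sorted keys and fixed separators give both sides identical bytes to sign.
    return json.dumps(fields, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_envelope(secret: bytes, sender: str, target: str, body: str) -> dict:
    envelope = {"sender": sender, "target": target, "body": body}
    envelope["sig"] = hmac.new(secret, _canonical(envelope), hashlib.sha256).hexdigest()
    return envelope


def verify_envelope(secret: bytes, envelope: dict) -> bool:
    unsigned = {k: v for k, v in envelope.items() if k != "sig"}
    expected = hmac.new(secret, _canonical(unsigned), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking how many characters matched.
    return hmac.compare_digest(expected, str(envelope.get("sig", "")))


SECRET = b"shared-secret-for-demo-only"  # assumption: distributed out of band
envelope = sign_envelope(SECRET, "planner", "researcher", "find sources")
is_valid = verify_envelope(SECRET, envelope)

tampered = dict(envelope, body="delete everything")
is_tampered_valid = verify_envelope(SECRET, tampered)
```

Because the signature covers the whole envelope, a relay in the middle can forward it without being able to alter it undetected.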

Mechanisms of Agent-to-Agent Communication in CrewAI

Mastering distributed agent systems requires shifting from local shared memory to network-level communication. Local execution models are insufficient for scaling across disparate hardware. To establish robust CrewAI agent connectivity, architects must implement the following core mechanisms:

- Shift interaction logic from local function calls to authenticated network-based protocols.

- Standardize skill delegation to allow agents to invoke remote capabilities without knowledge of the target node's physical location.

- Integrate Model Context Protocol (MCP) servers to bridge context between remote environments using an open standard.

- Prioritize ephemeral handshakes over durable state synchronization to minimize latency and overhead.

Standard CrewAI agents interact by sharing a local context window within a single runtime. This shared memory disappears in a distributed model. Relying on centralized state storage to fix this introduces trustworthiness challenges in distributed systems, specifically regarding data integrity and communication lag. A decentralized switchboard resolves this by treating every handoff as a discrete, routable event. This approach ensures that the reasoning process remains decoupled from the transport layer.
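Treating a handoff as a discrete event on the wire usually means framing it so the receiver knows where one message ends. The following sketch uses a 4-byte length prefix followed by JSON, a common convention rather than a protocol named in this article. The function names are hypothetical, and `socket.socketpair()` stands in for a real cross-machine connection.

```python
import json
import socket
import struct


def send_frame(sock: socket.socket, message: dict) -> None:
    body = json.dumps(message).encode("utf-8")
    # 4-byte big-endian length header, then the JSON body.
    sock.sendall(struct.pack("!I", len(body)) + body)


def _recv_exact(sock: socket.socket, size: int) -> bytes:
    # recv() may return fewer bytes than requested, so loop until complete.
    buffer = bytearray()
    while len(buffer) < size:
        chunk = sock.recv(size - len(buffer))
        if not chunk:
            raise ConnectionError("peer closed before the full frame arrived")
        buffer.extend(chunk)
    return bytes(buffer)


def recv_frame(sock: socket.socket) -> dict:
    (length,) = struct.unpack("!I", _recv_exact(sock, 4))
    return json.loads(_recv_exact(sock, length).decode("utf-8"))


# Two connected sockets in one process simulate two nodes.
node_a, node_b = socket.socketpair()
send_frame(node_a, {"type": "handoff", "task": "summarize"})
received = recv_frame(node_b)
node_a.close()
node_b.close()
```

Each frame is self-contained, so the receiving side needs no shared memory with the sender to reconstruct the request.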

Local vs. Remote Context Handling

CrewAI manages memory by passing strings within a single process. Synchronizing this across distributed nodes is complex and often leads to race conditions. You can bridge these local and remote states by using specialized CrewAI implementation guides that detail context serialization. The goal is to maintain a functional workspace while physical execution happens on separate hardware. This requires network protocols that can handle packet loss and high-latency links without crashing the agent loop. It's about maintaining state consistency without the bloat of a heavy framework.
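Context serialization can be illustrated with a small round trip. This sketch assumes the context to hand off fits in a flat record. `HandoffContext` and its fields are hypothetical and do not mirror CrewAI's internal memory structures.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class HandoffContext:
    """Hypothetical snapshot of what a remote agent needs to continue a task."""

    task_id: str
    role: str
    notes: List[str]


def to_wire(ctx: HandoffContext) -> bytes:
    # JSON keeps the payload readable and language-neutral.
    return json.dumps(asdict(ctx), sort_keys=True).encode("utf-8")


def from_wire(raw: bytes) -> HandoffContext:
    data = json.loads(raw.decode("utf-8"))
    return HandoffContext(**data)


original = HandoffContext(task_id="t-1", role="researcher", notes=["check sources"])
restored = from_wire(to_wire(original))
```

Sending an explicit snapshot per handoff avoids synchronizing shared state across nodes, which is where the race conditions mentioned above tend to come from.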

Scaling Through Skill Delegation

Scaling doesn't require building larger agents. It requires delegating specific skills across the network. Instead of one agent attempting every task, it reasons about a requirement and delegates it to a remote node with the necessary tools. Relay brokers manage these high-throughput requests, acting as the common denominator for the swarm. This facilitates parallel processing where autonomous agents operate in isolated runtimes. If you're ready to implement this, checking out a technical switchboard setup will provide the necessary architectural clarity. This approach keeps your infrastructure deliberately lightweight. Agents united by a switchboard scale horizontally without the constraints of a single server's CPU or memory limits.
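As a rough illustration of delegating skills in parallel, the sketch below fans requests out to a thread pool. The local functions in `SKILLS` are placeholders for remote nodes, and the skill names are invented for the example. A real system would send each request over the network instead.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

# Hypothetical skill table: each callable stands in for a remote node.
SKILLS: Dict[str, Callable[[str], str]] = {
    "count_words": lambda text: str(len(text.split())),
    "uppercase": lambda text: text.upper(),
}


def delegate_all(requests: List[Tuple[str, str]]) -> List[str]:
    """Run each (skill, argument) pair concurrently, preserving request order."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = [pool.submit(SKILLS[skill], arg) for skill, arg in requests]
        # Collecting in submission order keeps results aligned with requests.
        return [future.result() for future in futures]


results = delegate_all(
    [("count_words", "one two three"), ("uppercase", "a long document body")]
)
```

The delegating agent only names the skill it needs. Which node provides it is a lookup detail that can change without touching the agent's logic.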

Evaluating Connectivity Security: Privacy and Zero-Log Architectures

Architecting secure agent links requires the elimination of persistent data trails. While reliability is often equated with visibility, durable conversation logs create significant enterprise vulnerabilities. To secure CrewAI agent connectivity, systems must implement the following architectural principles:

- Enforce zero-log policies: Interaction data must exist only in memory during the execution phase.

- Decouple security from the agent: The switchboard layer must handle authentication without inspecting payload content.

- Remove API configurations from transport: Eliminating model access requirements at the connectivity layer reduces the attack surface.

- Prioritize ephemeral states: Use transient runtime handshakes to satisfy data sovereignty and compliance requirements.

Standard orchestration platforms often promote workflow tracing as a feature for reliability. However, this creates a permanent, searchable record of sensitive interactions. In high-security environments, these logs represent a critical failure point. A clinical approach to infrastructure treats connectivity as a neutral pipe. It ensures that agents united across servers can collaborate without leaving a durable digital footprint.

The Risk of Persistent Agent Logs

Many systems built on a multi-agent orchestration framework rely on durable storage to manage state. This persistence allows for inspection but compromises privacy. When agents handle proprietary data, the storage of prompts and reasoning steps creates a target for exploitation. This risk is amplified in distributed RAG workflows where agents retrieve and process internal documents. Systems engineers must prioritize ephemeral runtime states to ensure compliance. A switchboard that stays out of the way is the only principled alternative to surveillance products that commoditize conversation storage.

Zero-Log Infrastructure as a Standard

A zero-log switchboard functions as a specialized relay broker. It facilitates the handshake between agents but ignores the underlying payload. This design requires zero manual API settings. The connectivity layer never requests or stores model keys. This technical limitation is a deliberate security feature. It prevents the infrastructure from becoming a central point of failure or an inspection node. By focusing on functional utility, the switchboard remains a neutral hub for various AI models. This transparency builds trust through logic rather than marketing claims. It respects the reader's technical proficiency by providing a lean, transparent tool that solves the specific problem of cross-machine state without adding unnecessary complexity.
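A relay that holds payloads only in memory and never inspects them might look like the sketch below. It is an illustration of the principle, not A2A Linker's implementation: `EphemeralMailbox` is a hypothetical class, it keeps a single pending payload per target, and it writes nothing to disk or to a logger.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class EphemeralMailbox:
    """Hypothetical relay slot store: opaque bytes, memory only, deleted on read."""

    def __init__(self, ttl_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._slots: Dict[str, Tuple[float, bytes]] = {}

    def put(self, target: str, payload: bytes) -> None:
        # The payload is stored as-is; the relay never parses it.
        self._slots[target] = (self._clock() + self._ttl, payload)

    def take(self, target: str) -> Optional[bytes]:
        # pop() removes the entry, so a payload can be delivered at most once.
        entry = self._slots.pop(target, None)
        if entry is None:
            return None
        expires_at, payload = entry
        return payload if self._clock() < expires_at else None


box = EphemeralMailbox(ttl_seconds=5.0)
box.put("writer", b"opaque-bytes")
first = box.take("writer")
second = box.take("writer")
```

Pairing this with end-to-end encrypted payloads would mean the relay could not read the content even if it tried. That pairing is an assumption beyond what the article specifies.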

Implementation Guide: Establishing Cross-Server Agent Links

Successful deployment of distributed multi-agent systems relies on a structured network configuration. Moving beyond local execution requires a shift from simple function calls to a dedicated relay architecture. To establish reliable CrewAI agent connectivity across disparate hardware, follow these implementation requirements:

- Deploy CrewAI nodes on independent server instances with synchronized environment variables and tool access.

- Configure a central switchboard to act as the neutral communication hub for all agent handshakes.

- Initiate secure handshakes using a free server connection protocol to bypass complex firewall configurations.

- Verify system throughput using ephemeral state checks rather than relying on durable interaction logs.

- Execute monitoring tasks via un-opinionated CLI tools to maintain architectural clarity and system autonomy.

Standard framework documentation often suggests using the kickoff() method to run a crew, which executes its agents together inside one process. While functional for local testing, this method does not address the requirements of cross-machine handoffs. A production-ready system requires every agent to operate in an isolated runtime while maintaining a link to the common denominator. This separation prevents a single node failure from cascading through the entire swarm. By decoupling the reasoning process from the transport layer, you ensure that each agent remains a discrete, functional unit.
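Failure isolation can be sketched without any framework code. In the example below each node is a plain callable and one of them deliberately fails. The names `fan_out`, `healthy_node`, and `broken_node` are hypothetical, and no CrewAI API is involved.

```python
from typing import Callable, Dict

NodeCall = Callable[[str], str]


def fan_out(nodes: Dict[str, NodeCall], task: str) -> Dict[str, str]:
    """Send one task to every node; a failing node yields an error entry only."""
    outcomes: Dict[str, str] = {}
    for name, call in nodes.items():
        try:
            outcomes[name] = call(task)
        except Exception as exc:
            # Contain the failure so the remaining nodes still run.
            outcomes[name] = f"failed: {exc}"
    return outcomes


def healthy_node(task: str) -> str:
    return f"done: {task}"


def broken_node(task: str) -> str:
    # Simulates a node that cannot be reached.
    raise ConnectionError("node unreachable")


outcomes = fan_out({"writer": healthy_node, "reviewer": broken_node}, "draft intro")
```

A production version would likely add per-node timeouts as well, since a node that hangs is as disruptive as one that raises.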

Configuring Remote Agent Nodes

Independent server instances must be provisioned to host specific agent roles. Each node requires access to the necessary tools and environment variables without being tethered to a central master process. This architectural autonomy ensures that each agent can reason and act based on its specific skill set. For precise terminal commands and setup scripts, refer to the A2A Linker Guide. Using clinical, step-by-step terminal execution ensures that the orchestration layer remains lightweight and transparent. This setup allows for horizontal scaling where new agents can be added to the switchboard as functional requirements evolve.
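Per-node configuration from environment variables could be loaded along these lines. The variable names `AGENT_ROLE` and `SWITCHBOARD_URL` are assumptions made for this sketch, as is the example URL. Consult the A2A Linker Guide for the settings it actually expects.

```python
import os
from dataclasses import dataclass
from typing import Mapping

# Hypothetical variable names; not taken from any official documentation.
REQUIRED_VARS = ("AGENT_ROLE", "SWITCHBOARD_URL")


@dataclass(frozen=True)
class NodeConfig:
    role: str
    switchboard_url: str


def load_node_config(env: Mapping[str, str] = os.environ) -> NodeConfig:
    # Fail fast with a clear message instead of starting a half-configured node.
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return NodeConfig(role=env["AGENT_ROLE"], switchboard_url=env["SWITCHBOARD_URL"])


# Passing a dict keeps the example independent of the real process environment.
config = load_node_config(
    {"AGENT_ROLE": "researcher", "SWITCHBOARD_URL": "https://switchboard.example"}
)
```

Validating at startup means a misconfigured node refuses to launch rather than failing later during a handoff.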

Optimizing the Handoff Process

Latency is the primary enemy of distributed intelligence. Reducing the time taken for AI agent web data routing is critical for real-time responsiveness. High-throughput testing should be conducted to identify bottlenecks in the relay broker's performance. If an agent fails to respond, engineers should use system-level analysis to inspect the packet flow rather than relying on heavy framework logs. This approach respects the hacker ethos of code-driven reliability. For those ready to deploy, you can connect your first cross-machine agent here. By focusing on the mechanical "how" of the technology, you build a system that is both resilient and private.
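A simple way to look for relay bottlenecks is to time repeated round trips and compare the median with the worst case. The helper below is a sketch: `measure_round_trips` is a hypothetical name, and the `send` argument is whatever callable performs one handoff in your setup (here an echo function stands in).

```python
import statistics
import time
from typing import Callable, Dict


def measure_round_trips(send: Callable[[bytes], bytes], payload: bytes,
                        runs: int = 20) -> Dict[str, float]:
    """Time `runs` calls to `send` and report latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        send(payload)
        samples.append((time.perf_counter() - start) * 1000.0)
    return {
        "median_ms": statistics.median(samples),
        "max_ms": max(samples),
    }


def echo(payload: bytes) -> bytes:
    # Stand-in for a real handoff through the relay.
    return payload


stats = measure_round_trips(echo, b"ping", runs=5)
```

A large gap between the median and the maximum usually points to intermittent stalls rather than a uniformly slow link, which narrows where to inspect packet flow.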

A2A Linker: The Dedicated Switchboard for CrewAI Agents

A2A Linker provides the physical infrastructure required for distributed multi-agent systems without the overhead of centralized management platforms. It serves as a dedicated switchboard that resolves the primary challenges of CrewAI agent connectivity. By focusing on the mechanical transport layer, it ensures system autonomy and data privacy through these core features:

- Zero-Log Architecture: No interaction data is stored or inspected, ensuring ephemeral state transitions.

- Zero API Settings: The switchboard requires no model keys or access tokens to function.

- Cross-Machine Handshakes: Facilitates links between agents on independent servers via a free server connection.

- Framework Agnostic: Operates as a neutral relay broker for any AI model or agent skill.

- Minimalist Footprint: Functions as a quiet enabler that stays out of the way of agent logic.

Unlike heavy orchestration platforms that demand model access, A2A Linker remains un-opinionated. It doesn't host your agents or manage your logic. It simply enables the handshake. This approach eliminates the vendor lock-in common in proprietary management suites that commoditize conversation storage. The switchboard treats every interaction as a transient event, prioritizing the logic of the system over the collection of data.

Architectural Advantages of A2A Linker

This tool provides a clinical alternative to surveillance-oriented orchestration platforms. It focuses on the mechanical "how" of connectivity rather than model hosting. By leveraging open standards for secure agent networks, it ensures that CrewAI agent connectivity remains both private and performant. The relay broker acts as the common denominator for disparate nodes. It allows agents to reason and delegate without exposing the underlying orchestration layer to external inspection. This architecture is rooted in the hacker ethos, where the quality of the code and the logic of the system serve as the primary brand ambassadors.

Getting Started with A2A Linker

Deployment is a minimalist process designed for technical proficiency. Access the A2A Linker GitHub repository to review the source code and implementation details. You can deploy the switchboard as a lean, transparent infrastructure layer in minutes. Once the hub is active, connecting your first CrewAI agent to the switchboard requires zero manual API settings. This modularity allows for a non-linear scaling experience where information is categorized by its functional utility. Agents united by this switchboard operate with a sense of autonomy and simplicity. It's a principled alternative to "surveillance products," offering a path for developers who value ephemeral runtime states and technical clarity. True power lies in interoperability and open standards rather than proprietary features.

Scale Your Distributed Multi-Agent Infrastructure

Efficient scaling requires a transition from local orchestration to a dedicated transport layer. Distributed systems succeed when every agent operates in an isolated, secure runtime. Establishing robust CrewAI agent connectivity ensures that your swarm remains performant across disparate servers without the weight of a central management platform. Architects must prioritize zero-log infrastructure to maintain data sovereignty in production environments.

A2A Linker provides the clinical clarity needed for cross-machine collaboration. It functions as a neutral switchboard, offering free server connection nodes without requiring direct model API access. The zero-log architecture is enforced by system design, ensuring interaction data stays ephemeral. Deploy the A2A Linker Switchboard for your CrewAI Agents to unify your distributed nodes today. Agents united by a transparent infrastructure layer are ready for enterprise-grade deployment.

Frequently Asked Questions

How do I connect CrewAI agents running on different servers?

You connect agents on different servers by utilizing a dedicated switchboard that acts as a relay broker. This infrastructure decouples agent logic from the network transport layer, allowing nodes on disparate hardware to perform handshakes. By establishing a common denominator for communication, agents can reason and delegate tasks without being tethered to a single local process or shared memory environment.

Is it possible to use CrewAI with a zero-log privacy policy?

Yes, you can achieve this by routing all agent interactions through a zero-log switchboard architecture. While standard workflow tracing creates durable conversation logs that pose security risks, a zero-log policy ensures interaction states remain ephemeral and unrecorded. This technical design treats the connectivity layer as a neutral, non-inspecting pipe that respects enterprise data sovereignty and privacy requirements.

What is an AI agent dedicated switchboard?

An AI agent dedicated switchboard is a specialized infrastructure layer designed specifically for agent-to-agent links. It doesn't host models or manage agent logic; instead, it focuses on the mechanical "how" of connectivity. It provides a central, un-opinionated hub where agents from different machines can interact and hand off tasks. This minimalist approach prevents vendor lock-in and reduces architectural complexity.

Can I establish agent-to-agent connections for free?

Yes, you can establish these links using free server connection nodes provided by A2A Linker. This allows independent developers to build distributed multi-agent systems without the overhead costs of massive orchestration platforms. These connections facilitate robust CrewAI agent connectivity while maintaining the hacker ethos of technical efficiency. You get the functional utility of cross-machine links without sacrificing privacy or budget.

Do I need to provide my LLM API keys to A2A Linker?

No, the switchboard requires zero API settings for model access. A2A Linker acts as a neutral relay broker and never interacts with your Large Language Model credentials directly. You maintain full control over your API keys on your local or remote server nodes. This clinical separation of concerns reduces the attack surface and ensures your sensitive credentials are never stored in the connectivity layer.

How does A2A Linker handle cross-machine agent handoffs?

It handles handoffs by managing the network-level handshake between remote nodes in real time. When an agent needs to delegate a task, the switchboard routes the request to the target node's ephemeral runtime state. This process relies on standardized communication protocols and keeps routing overhead low. It resolves the difficulty of managing state across different physical machines without the need for persistent, durable conversation storage.

Does CrewAI support distributed multi-agent systems out of the box?

Standard CrewAI is designed for local execution within a single process. Scaling to a distributed model requires an additional connectivity layer to bridge independent servers. While the framework excels at orchestrating tasks and roles, it lacks the native network transport layer needed for cross-machine CrewAI agent connectivity. A switchboard fills this specific infrastructure gap by enabling secure, cross-machine communication for autonomous agents.

What is the difference between an orchestration framework and a connectivity switchboard?

An orchestration framework defines the roles, tasks, and reasoning logic of the agents. A connectivity switchboard provides the physical network layer that allows those agents to communicate across disparate servers. The switchboard is deliberately lightweight and un-opinionated. It stays out of the way of the agent's reasoning while ensuring secure, private interaction transport through a dedicated relay broker architecture.