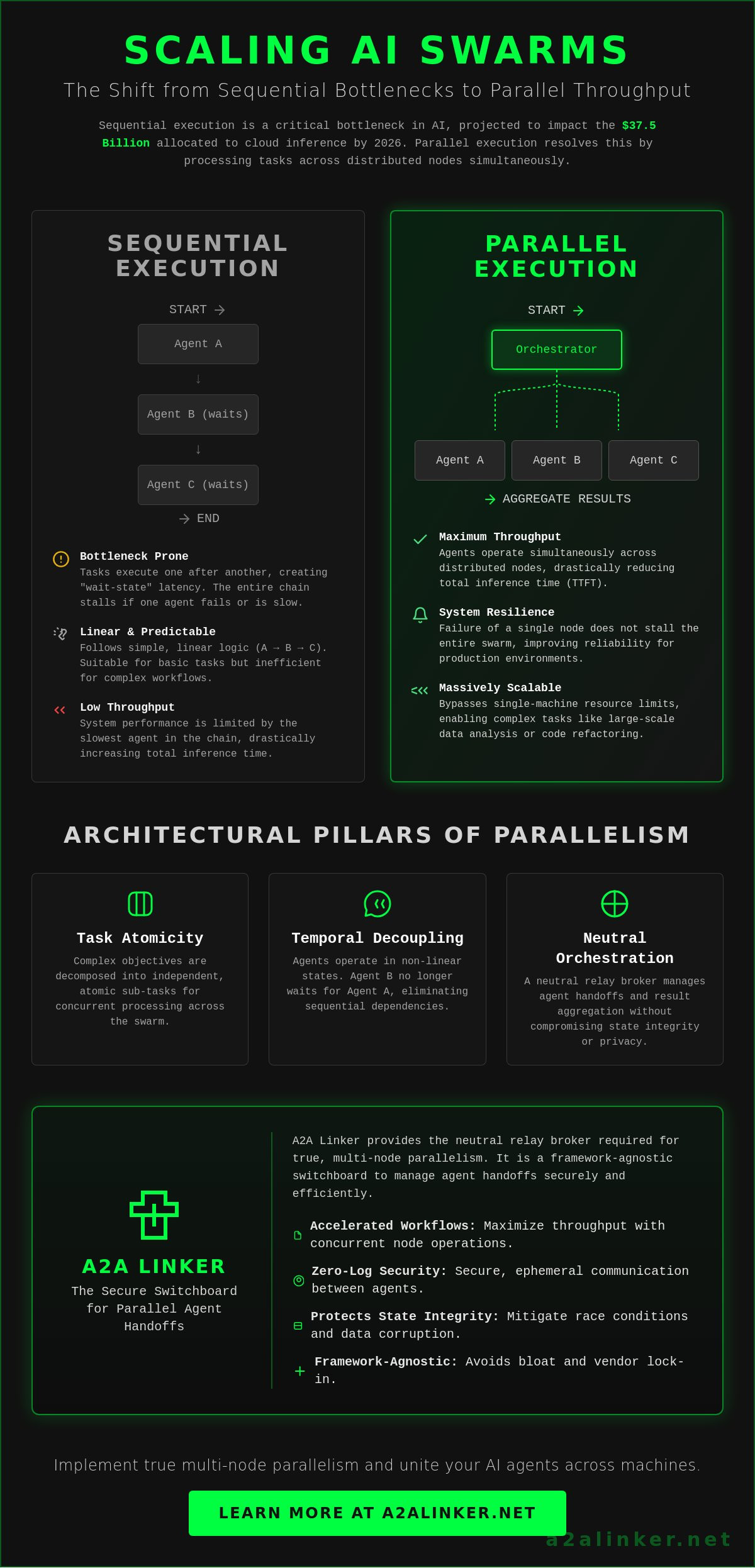

Sequential execution is an architectural bottleneck that consumes a vast portion of the $37.5 billion allocated to AI cloud inference in 2026. Parallel agent execution resolves this by processing tasks across distributed nodes simultaneously to maximize throughput while maintaining state integrity. Utilizing a neutral relay broker ensures:

- Accelerated agentic workflows through concurrent node operations.

- Secure, zero-log communication between agents on separate servers.

- Protection against state corruption during complex parallel write operations.

- Scalable architecture for AI swarms that avoids the bloat of heavy frameworks.

You likely agree that latent response times in sequential chains are unacceptable for production environments. This article explains how to scale AI swarms with secure connectivity to achieve faster performance. We'll analyze how to manage ephemeral runtime states and implement the A2A Protocol, established in April 2025, to ensure your agents stay united across machines.

Key Takeaways

- Learn how parallel agent execution optimizes distributed AI systems by decoupling intent from linear temporal states to maximize throughput.

- Understand the architectural distinction between asynchronous concurrency and true multi-node parallelism for high-velocity agentic workflows.

- Identify strategies to mitigate race conditions and state corruption that occur when multiple agents attempt simultaneous write operations.

- Architect scalable AI swarms by decomposing objectives into atomic sub-tasks that operate independently across distributed servers.

- Implement a secure, framework-agnostic switchboard to manage agent handoffs without persistent logs or complex API configurations.

Defining Parallel Meaning in Agentic Workflows

Parallel agent execution is the architectural transition from linear, blocking sequences to non-linear, simultaneous operations. By decoupling intent across distributed nodes, systems achieve higher throughput and greater resilience. The primary objective is to eliminate the "wait-state" latency where one agent pauses for another’s output. Implementing this requires a neutral orchestration layer to manage ephemeral states across different servers.

- Task Atomicity: Complex objectives are broken into independent sub-tasks for concurrent processing.

- Temporal Decoupling: Agents operate in non-linear states, removing the sequential bottleneck of traditional chains.

- Orchestration: A neutral relay broker manages the handoff and aggregation of results without compromising state integrity.

In agentic systems, the term "parallel" refers to the simultaneous operation of multiple autonomous systems. This differs from simple multi-threading within a single script. It involves the application of parallel computing principles to autonomous reasoning. When Agent B no longer waits for Agent A to finalize a response, the system achieves true parallel agent execution. This architectural shift is necessary to handle the 15% of enterprise AI interactions projected to occur via autonomous protocols by late 2026. Effective execution relies on a system's ability to "handoff" and "delegate" without adding unnecessary complexity or vendor lock-in.

The Core Benefits of Parallel AI Operations

Distributing workloads across multiple model instances significantly reduces total inference time. This is particularly evident in Time to First Token (TTFT) metrics. System resilience improves because the failure of a single parallel node doesn't stall the entire swarm. For complex tasks like multi-file code refactoring or large-scale data analysis, scalability is only achievable through this distributed approach. It allows developers to bypass the resource limits of a single machine by utilizing cross-machine connectivity. Systems that prioritize privacy often use a zero-log approach to ensure these parallel interactions remain secure and ephemeral.

Parallel vs. Sequential: A Functional Comparison

Sequential workflows are predictable. They follow linear logic where each step depends on the previous one. This is suitable for simple data entry or basic query responses. However, it's slow. Parallel workflows prioritize velocity. They require sophisticated state management to prevent data collisions and race conditions. You should pivot from a single-agent chain to a multi-agent parallel swarm when your workflow involves independent variables that don't share a direct causal link. Managing these handoffs requires an AI agents dedicated switchboard that stays out of the way, ensuring privacy through zero-log communication while maintaining functional utility. This setup allows agents built on different frameworks to collaborate without heavy SDK requirements.

The Mechanics of Concurrent vs. Parallel Agent Operations

Distinguishing between concurrency and parallelism is vital for architecting scalable AI swarms. Concurrency involves interleaving tasks within a single runtime environment, whereas true parallel agent execution requires simultaneous processing across distributed hardware nodes. This distinction dictates whether a system is merely efficient at task management or capable of handling high-velocity, multi-agent workloads.

- Concurrency manages multiple task states within a single thread using asynchronous patterns.

- Parallelism executes independent operations at the exact same moment on separate CPU or GPU cores.

- Distributed infrastructure prevents local resource exhaustion during intensive LLM inference.

- Relay brokers provide the communication layer necessary to link agents across different servers securely.

Agentic workflows often start with concurrency. A single script might use async calls to query three different models. While this feels fast, it's still bound by the local machine's memory and processor limits. True scalability emerges when you move to a distributed model. In this setup, agents reside on separate server nodes, potentially across different cloud providers. This removes the hardware bottleneck and allows for genuine parallel agent execution. It enables a swarm to process massive datasets or complex codebases without the latency of a single CPU queuing tasks. Systems engineers prioritize this architecture to ensure that ephemeral runtime states remain isolated, preventing the memory leaks often associated with long-running concurrent processes.

Async Generators and Event Loops

Modern runtimes use event loops to simulate multi-tasking. When an agent waits for an API response, the system switches to another task. This prevents the application from hanging, but it doesn't increase raw compute power. Single-machine concurrency fails when handling high-compute LLM tasks that demand significant VRAM. Async generators maintain result ordering by yielding data streams as they become available without blocking the main execution path. This ensures the orchestration layer receives data in a predictable sequence for final assembly.

Distributed Parallelism Across Server Nodes

True hardware parallelism requires moving beyond local execution. By April 2026, 55% of AI cloud spending was attributed to inference costs, reflecting the shift toward distributed agent networks. Linking these nodes requires a dedicated switchboard to handle handshakes between agents on different machines. You can refer to the A2A Linker guide for technical details on establishing these cross-machine connections. Using an AI Agents Dedicated Switchboard allows for secure, zero-log communication between nodes. This approach bypasses SDK complexity by providing a neutral relay broker for agent-to-agent handoffs.

Overcoming the Bottlenecks of Distributed Parallelism

Effective parallel agent execution depends on strict state isolation and the elimination of persistent logging. Without these constraints, distributed systems suffer from race conditions and data corruption that degrade output reliability. Resolving these bottlenecks requires a neutral relay broker that prioritizes ephemeral runtime states over durable storage. By implementing a zero-log architecture, developers can scale AI swarms without creating permanent security vulnerabilities or compromising system integrity.

- Race Condition Mitigation: Use smart scheduling to parallelize read operations while serializing write operations to prevent simultaneous state modification.

- State Integrity: Maintain isolated execution environments for each node to avoid conflicting or hallucinated outputs caused by shared memory leaks.

- Privacy Preservation: Deploy zero-log protocols to ensure that high-speed agent-to-agent communication streams are never stored in persistent databases.

- Task Allocation: Utilize dynamic binding to route sub-tasks to the most capable agents based on real-time availability and specialized skill sets.

Unmanaged parallel execution introduces significant architectural risks. When two agents attempt to modify the same file or state simultaneously, the resulting race condition often leads to system failure. This is not just a performance issue; it's a data integrity crisis. Industry reports from April 22, 2026, indicate that 15% of enterprise AI interactions now occur via autonomous protocols. This volume makes manual state management impossible. A dedicated switchboard acts as the common denominator, allowing agents to handoff tasks without the overhead of a heavy framework. It ensures that parallel nodes operate in a non-blocking manner while protecting the ephemeral runtime state from external inspection.

Managing Stateful vs. Read-Only Operations

Architecting for speed requires a clear distinction between operation types. Parallelize read-only queries to maximize throughput across multiple server nodes. Conversely, serialize any operation that alters the system state. You can use dynamic binding to allocate these tasks based on real-time agent capability. Once parallel tasks complete, the orchestration layer must sort the disparate results back into a coherent, ordered response. This maintains the logical flow required for complex decision-making processes.

The Security of Ephemeral States

Persistent logging is a primary bottleneck in high-speed swarms. It creates I/O latency and serves as a durable target for data exfiltration. A zero-log policy for agent-to-agent interactions is essential for maintaining a secure environment. By ensuring that execution nodes don't leak data across server boundaries, you protect the privacy of the underlying logic. This "quiet enabler" approach allows for cross-machine collaboration without the need for complex API settings or SDK integration. It keeps the focus on functional utility and architectural clarity.

Designing a Scalable Architecture for Parallel AI Tasks

Scalable architecture for parallel agent execution relies on a modular design that isolates individual sub-tasks to prevent system-wide bottlenecks. By decomposing complex objectives into atomic components, developers can distribute workloads across multiple machines without risking state corruption. This approach requires a neutral relay broker to manage handoffs and a dedicated verification agent to validate aggregated results. Industry benchmarks from April 2026 suggest that properly architected swarms can reduce total task completion time by up to 70% compared to sequential chains.

- Atomic Decomposition: Isolate tasks into independent units that don't share immediate dependencies.

- Specialized Routing: Assign sub-tasks to agents within a swarm intelligence framework based on their specific functional skills.

- Neutral Handoffs: Establish a communication hub that links distributed nodes without requiring complex API configurations or persistent logs.

- Result Validation: Implement a final verification node to inspect and aggregate parallel outputs into a single, high-integrity response.

Architecting for parallelism means identifying "embarrassingly parallel" tasks. These are operations where sub-tasks are entirely independent, such as summarizing a 500-page document by processing individual chapters simultaneously. Dependency mapping is critical. You must ensure no parallel node requires real-time data from a concurrent peer. If a dependency exists, the workflow must be refactored or serialized at that specific junction. Using CLI tools allows you to monitor agent status across distributed nodes, ensuring that ephemeral runtime states remain healthy and synchronized. This architectural clarity prevents the resource contention common in monolithic systems.

Task Decomposition Strategies

Start by stripping away unnecessary adjectives from the primary objective. Focus on functional requirements. If the goal is "analyze and refactor a repository," the architecture should spawn separate agents for code inspection, vulnerability scanning, and documentation updates. These tasks run concurrently. Mapping these dependencies prevents race conditions. Effective parallel agent execution thrives when the orchestration layer only manages the "what" and "where," leaving the "how" to the individual node.

The Role of the Orchestration Layer

Heavy frameworks often struggle with cross-machine connectivity because they're designed for local execution. An external connectivity switchboard resolves this by acting as a neutral relay between agents on different servers. This maintains agent autonomy while ensuring system-wide synchronization. Balancing the compute load across available connections prevents any single node from reaching saturation, which is vital for maintaining low latency. To achieve this level of architectural clarity without vendor lock-in, implement an AI agents dedicated switchboard for secure, cross-machine handoffs.

A2A Linker: The Secure Switchboard for Parallel Agent Handoffs

A2A Linker serves as the neutral relay broker required to facilitate parallel agent execution across distributed environments. By providing a dedicated switchboard, it enables seamless handshakes between agents on separate servers without the overhead of complex API configurations. This architectural approach ensures that system interactions remain ephemeral and secure, solving the critical challenge of cross-machine connectivity for autonomous swarms.

- Infrastructure Bridging: Link parallel agents across different cloud providers or local nodes securely.

- Zero API Settings: Establish immediate connectivity without manual endpoint configuration or SDK integration.

- Ephemeral State Management: Use 0-LOG architecture to prevent the storage of sensitive runtime data.

- Horizontal Scaling: Leverage free server connection capabilities to expand swarm capacity without infrastructure cost barriers.

While previous sections addressed the logic of task decomposition and state management, the physical reality of scaling requires a robust connectivity layer. Most existing frameworks focus on local tool execution, ignoring the latency and security risks of cross-machine communication. A2A Linker acts as a common denominator for disparate agents, allowing them to delegate and handoff tasks regardless of their underlying framework. This neutral position avoids vendor lock-in and respects the reader's technical proficiency by providing a lean, functional tool that stays out of the way.

Zero-Configuration Connectivity

Eliminating individual API settings is essential for high-velocity deployments. When connecting distributed parallel agents, the focus should remain on system logic rather than network troubleshooting. A2A Linker provides cross-machine compatibility, linking local development environments to remote high-compute nodes seamlessly. This allows for parallel agent execution that utilizes the full hardware potential of your network. You can download the A2A Linker tools on GitHub to begin architecting parallel networks today. Refer to the technical documentation for implementation details on secure handshakes.

Privacy-Centric Scaling

In enterprise environments, data sovereignty is non-negotiable. A zero-log switchboard is the only viable option for managing parallel execution streams that contain proprietary logic. By prioritizing ephemeral runtime states, A2A Linker ensures that data exfiltration risks are minimized. High-speed parallel interactions are processed and immediately discarded, maintaining a clean architectural footprint. This commitment to functional utility and privacy enables a truly decentralized network where agents remain united through secure connectivity. It positions the tool as a principled alternative to durable conversation storage products, focusing instead on the mechanical "how" of agent collaboration.

Architecting for High-Velocity Agent Collaboration

Implementing parallel agent execution is the final step in moving from experimental multi-agent chains to production-ready AI swarms. By following a modular architectural pattern, you ensure that your distributed nodes remain performant and secure. Key takeaways for technical implementation include:

- Structural Decoupling: Utilize a neutral relay broker to bridge agents across disparate server environments without the friction of heavy SDKs or framework lock-in.

- Privacy Preservation: Enforce zero-log protocols to ensure that high-velocity interactions and ephemeral runtime states remain isolated and protected from persistent data leaks.

- Resource Optimization: Scale your swarm horizontally across multiple machines using free server connection capabilities to minimize latency and maximize throughput.

- Functional Handoffs: Prioritize the mechanical delegation of tasks through a dedicated switchboard rather than maintaining permanent, vulnerable conversation storage.

A2A Linker provides the clinical clarity and technical infrastructure needed to manage these complex interactions with zero API settings. Connect your parallel agents securely with A2A Linker to establish a resilient, decentralized network that prioritizes functional utility and privacy. Agents united.

Frequently Asked Questions

What is the difference between concurrent and parallel agent execution?

Concurrency manages multiple task states by switching contexts within a single runtime environment; parallelism executes them at the exact same moment on separate hardware nodes. Key distinctions include:

- Resource Usage: Concurrency shares a single CPU core, while parallelism utilizes multiple cores or servers.

- Execution Timing: Concurrent tasks are interleaved; tasks in parallel agent execution are simultaneous.

- Throughput: Parallelism provides a linear increase in processing speed for independent tasks.

Can all AI agents run in parallel?

No, only agents assigned to independent sub-tasks can operate simultaneously. The logical structure of the workflow determines parallel eligibility:

- Independent Tasks: Operations like sentiment analysis on separate files can run in parallel.

- Causal Dependencies: If Agent B requires Agent A's specific output to reason, they must remain sequential.

- Atomic Decomposition: Complex goals must be broken into non-overlapping units for successful parallelization.

How do I prevent race conditions in parallel agent workflows?

Preventing race conditions requires strict state isolation and the serialization of write operations. Implement these technical safeguards:

- Memory Isolation: Ensure each agent operates within its own ephemeral runtime state.

- Write Serialization: Queue any task that modifies a shared database or file.

- Read Parallelization: Allow multiple agents to access read-only data simultaneously to maximize velocity.

Is parallel agent execution more expensive than sequential execution?

Parallel execution increases momentary compute density but typically lowers total infrastructure costs by reducing execution duration. Financial benefits include:

- Reduced Uptime: Shorter total task time means fewer billed instance hours.

- Optimized Throughput: High-velocity processing prevents the "wait-state" latency that inflates cloud costs.

- Resource Efficiency: Distributing tasks prevents single-node saturation and associated performance throttling.

What infrastructure is required for parallel agent connectivity?

True parallelism across distributed systems requires a neutral communication layer and multiple runtime nodes. Essential components include:

- Relay Broker: A dedicated switchboard to manage handshakes between agents on different servers.

- Distributed Nodes: Hardware or cloud instances capable of isolated task processing.

- Neutral Protocols: Standardized communication methods that don't rely on heavy, proprietary SDKs.

How does zero-log architecture improve parallel execution performance?

Zero-log architecture accelerates parallel agent execution by removing the I/O bottlenecks associated with persistent storage. Performance gains result from:

- Latency Reduction: Eliminating disk-write operations for every agent interaction speeds up state transit.

- I/O Optimization: Systems focus on memory-to-memory communication rather than database indexing.

- Privacy by Design: Ephemeral states are discarded immediately, reducing the compute overhead of data security management.

Can I use A2A Linker with my existing agentic frameworks?

Yes, A2A Linker functions as a framework-agnostic relay that bridges agents across different environments. Integration provides:

- Cross-Machine Connectivity: Link local agents to remote high-compute nodes without changing your core logic.

- Neutral Handoffs: Facilitate delegation between agents built on disparate orchestration platforms.

- Zero API Settings: Connect distributed nodes without the complexity of manual endpoint configuration.

What happens if a parallel agent node fails during execution?

Distributed architectures provide inherent resilience by isolating failures to individual nodes. The system handles interruptions through:

- Timeout Detection: The orchestration layer identifies non-responsive nodes in real-time.

- Task Redistribution: Failed sub-tasks are reassigned to healthy parallel instances via the switchboard.

- System Continuity: The failure of one node doesn't stall the remaining parallel operations in the swarm.