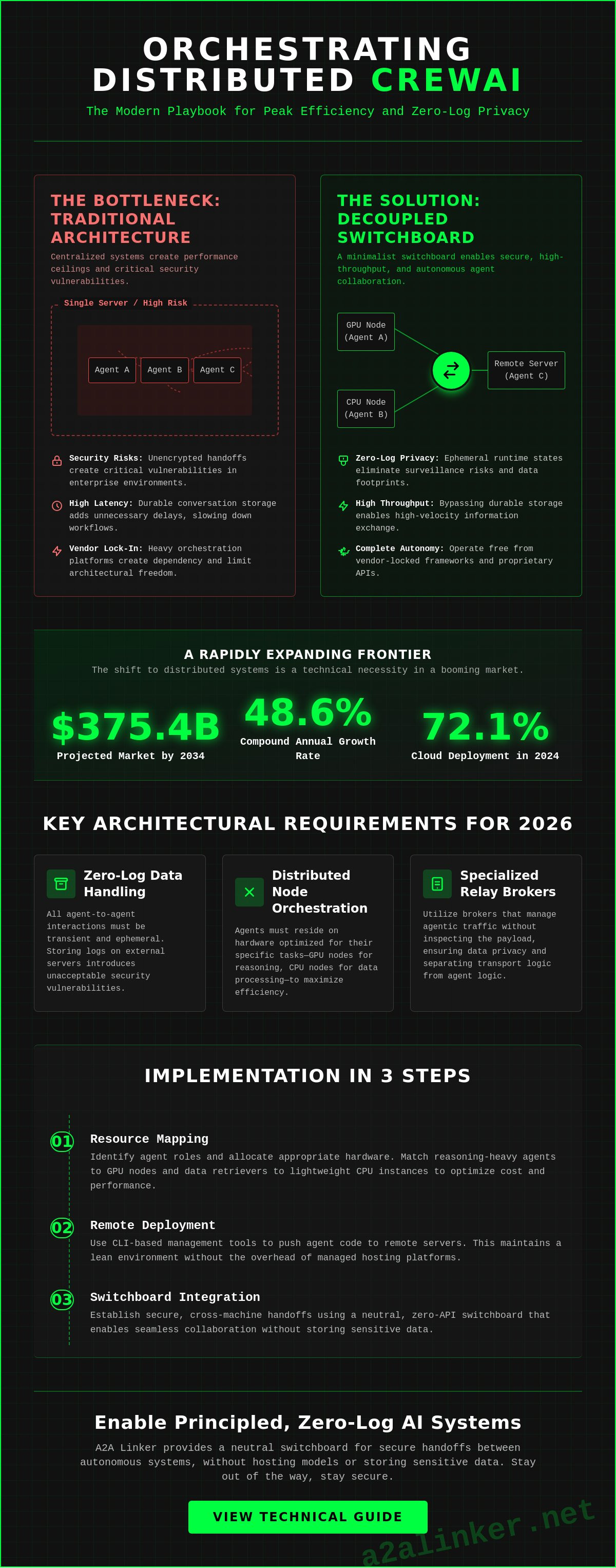

Distributed CrewAI workflows perform best when the transport layer is decoupled from agent logic. A minimalist switchboard removes the security risks of unencrypted handoffs without the complexity of a heavy orchestration platform. This architecture delivers the following results:

- A functional multi-server setup using a free server connection.

- Zero-log privacy via ephemeral runtime states.

- High-throughput workflows that bypass the latency of durable conversation storage.

- Complete autonomy from vendor-locked frameworks.

The primary constraint in scaling multi-agent systems isn't the reasoning capability of the models; it's the plumbing between remote nodes. Unencrypted agent handoffs are a critical security vulnerability in enterprise environments. This guide shows how to secure these interactions using A2A Linker, focusing on the mechanical "how" of connecting remote servers while keeping your infrastructure lean and your data private. As the multi-agent system market scales toward a projected $375.4 billion by 2034, moving away from surveillance-heavy products toward principled, zero-log alternatives becomes a practical necessity. We'll examine how to configure a relay broker for these secure handoffs without adding unnecessary complexity to your stack.

Key Takeaways

- Distributed CrewAI systems require a dedicated communication switchboard to maintain peak performance and data privacy across remote server nodes.

- Architecting multi-server environments involves mapping agent roles to specific hardware requirements and deploying them via CLI tools for granular control.

- Secure cross-machine handoffs are achieved through relay-based protocols and ephemeral runtime states that minimize the system's data footprint.

- Implementing a strict zero-log policy for all agent-to-agent interactions eliminates surveillance risks and prevents vendor lock-in with heavy orchestration platforms.

- A2A Linker provides a neutral switchboard that needs no per-agent API configuration and enables agent collaboration without the overhead of centralized conversation storage.

Executive Summary: Orchestrating CrewAI Across Distributed Infrastructure

Distributed CrewAI systems require a dedicated communication switchboard to maintain performance and privacy. While the CrewAI framework excels at orchestrating role-playing autonomous agents in Python, its native architecture often assumes local execution. Enterprise requirements in 2026 demand a transition to distributed node orchestration, so that high-performance agentic systems can scale across remote servers without compromising data integrity or system speed.

Global multi-agent system market valuations are projected to reach $375.4 billion by 2034, driven by a 48.6% CAGR as industries move from experimental pilots to production deployments. In this landscape, the bottleneck isn't agent reasoning but the secure transport layer between distributed server nodes. Ephemeral runtime states keep that layer fast, while durable conversation storage adds surveillance risk and write latency. A shared protocol for secure server-to-server handoffs keeps the architecture clear and functional.

This scalability is essential for businesses utilizing specialized sales automation tools. For instance, Global AI Reps offers AI-driven lead generation representatives that require robust, distributed infrastructure to manage complex sales workflows securely and efficiently.

Key Architectural Requirements for 2026

Current systems must move beyond single-machine scripts. Cloud deployment accounted for 72.1% of the multi-agent market in 2024, highlighting the need for robust remote connectivity. To meet enterprise-grade privacy standards, your infrastructure needs the following components:

- Zero-log data handling: All agent interactions must be transient. Storing logs on external servers introduces vulnerabilities that principled developers avoid.

- Distributed node orchestration: Agents should reside on hardware optimized for their specific tasks, whether it's a GPU-heavy node for reasoning or a CPU-optimized node for data processing.

- Specialized relay brokers: These route agentic traffic without inspecting the payload, keeping the transport (the "how") separate from the content (the "what").

The Role of the Communication Switchboard

A dedicated switchboard acts as a neutral hub for linking agents across diverse server environments. It enables autonomy with a minimal number of moving parts. Unlike heavy frameworks that demand extensive configuration, a streamlined switchboard removes the need for API settings at the individual agent level. It prioritizes functional handshakes over proprietary features.
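The decoupling described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not A2A Linker's or CrewAI's API: `Transport`, `InMemoryTransport`, and `Agent` are hypothetical names. Agent code depends only on a `send(recipient, payload)` interface, so replacing the in-memory stand-in with a relay-backed transport would not touch agent logic.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Protocol


class Transport(Protocol):
    """Anything that can deliver a payload to a named remote agent."""

    def send(self, recipient: str, payload: bytes) -> None: ...


@dataclass
class InMemoryTransport:
    """Local stand-in; a hypothetical relay-backed transport would share this interface."""

    inboxes: dict[str, list[bytes]] = field(default_factory=dict)

    def send(self, recipient: str, payload: bytes) -> None:
        self.inboxes.setdefault(recipient, []).append(payload)


@dataclass
class Agent:
    """Agent logic knows only a recipient name: no host, port, or API keys."""

    name: str
    transport: Transport

    def hand_off(self, recipient: str, task: str) -> None:
        self.transport.send(recipient, task.encode("utf-8"))


transport = InMemoryTransport()
planner = Agent("planner", transport)
planner.hand_off("retriever", "find pricing pages")
print(transport.inboxes)  # {'retriever': [b'find pricing pages']}
```

Because `Agent` never sees connection details, there is nowhere for per-agent API settings to accumulate; they belong to whichever `Transport` implementation is plugged in.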

Implementing a tool like A2A Linker provides a principled alternative to surveillance products. It stays out of the way, serving as a "quiet enabler" for your CrewAI workflows. This approach secures handoffs between autonomous systems without the switchboard hosting the models or storing sensitive data. For detailed configuration steps on establishing these connections, refer to the A2A Linker technical guide. The setup supports high-velocity bursts of information exchange between nodes.

Architecting Multi-Server CrewAI Environments

Architecting a distributed CrewAI environment requires a decentralized node strategy where hardware is matched to specific agent functions. A centralized, single-machine setup creates a performance ceiling and security risks that limit scalability. By decoupling agent logic from the transport layer, you get a minimalist, high-performance system that maintains privacy through ephemeral runtime states. Your agents then function as a cohesive unit regardless of physical server location.

- Step 1: Resource Mapping. Identify agent roles and allocate hardware. Reasoning-heavy agents require GPU nodes, while data retrievers run on lightweight CPU instances to optimize cost and performance.

- Step 2: Remote Deployment. Use CLI-based management tools to push agent code to remote servers. This maintains a lean environment without the overhead of managed hosting platforms, reflecting a principled "hacker" ethos.

- Step 3: Switchboard Integration. Establish a secure connection via a dedicated AI agent switchboard. This acts as the common denominator for cross-machine handoffs without requiring complex API settings.

- Step 4: Logic Configuration. Set up the orchestration layer to manage task delegation across nodes. Routing should be based on agent availability and specialized skills rather than on physical proximity alone.

- Step 5: Dynamic Binding. Implement real-time agent discovery to allow the system to adapt as nodes are added or removed. This ensures the infrastructure remains a quiet enabler of autonomy.
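Steps 4 and 5 can be sketched as a small registry that routes by skill and spare capacity and lets nodes register or deregister while the system runs. The sketch assumes each node reports a skill set and an in-flight task count; `Node`, `Registry`, and the node names are hypothetical, and a real deployment would populate the registry from whatever discovery mechanism your switchboard exposes.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Node:
    name: str
    skills: set[str]
    busy: int = 0      # tasks currently in flight on this node
    capacity: int = 1  # maximum concurrent tasks the node accepts


class Registry:
    """Hypothetical in-memory node registry; nodes join and leave at runtime."""

    def __init__(self) -> None:
        self._nodes: dict[str, Node] = {}

    def register(self, node: Node) -> None:
        self._nodes[node.name] = node

    def deregister(self, name: str) -> None:
        self._nodes.pop(name, None)  # removing an unknown node is a no-op

    def route(self, skill: str) -> Node | None:
        """Return the least-loaded node that has `skill` and spare capacity."""
        candidates = [
            n for n in self._nodes.values()
            if skill in n.skills and n.busy < n.capacity
        ]
        if not candidates:
            return None
        return min(candidates, key=lambda n: n.busy / n.capacity)


registry = Registry()
registry.register(Node("gpu-us-1", {"reasoning"}, capacity=2))
registry.register(Node("cpu-eu-1", {"retrieval"}, capacity=8))
print(registry.route("retrieval").name)  # cpu-eu-1
registry.deregister("cpu-eu-1")
print(registry.route("retrieval"))  # None
```

Routing on load relative to capacity, rather than on which node is closest, matches the availability-and-skill rule in Step 4.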

Node Selection and Resource Allocation

Efficient resource allocation prevents bottlenecks in high-throughput workflows. Assign reasoning tasks, such as complex planning or creative synthesis, to high-performance GPU instances to minimize latency. Conversely, delegate lightweight data retrieval or API polling to ephemeral serverless containers. To link these disparate nodes without complex networking overhead, utilize A2A Linker's free server connection. This approach keeps the infrastructure minimalist and functional. By avoiding heavy frameworks, you reduce the attack surface and maintain strict control over the ephemeral runtime state of each node.
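The allocation rule above can be written down as an explicit role-to-hardware map that a deployment script reads. This is a hypothetical sketch: the role names, the `NodeProfile` fields, and the pool labels are assumptions for illustration, not CrewAI configuration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class NodeProfile:
    kind: str        # "gpu" or "cpu"
    ephemeral: bool  # True for short-lived serverless containers

    @property
    def pool(self) -> str:
        return f"{self.kind}-ephemeral" if self.ephemeral else self.kind


# Hypothetical role-to-hardware map: reasoning roles on persistent GPU instances,
# retrieval and polling roles on ephemeral CPU containers.
ROLE_PROFILES: dict[str, NodeProfile] = {
    "planner": NodeProfile(kind="gpu", ephemeral=False),
    "writer": NodeProfile(kind="gpu", ephemeral=False),
    "retriever": NodeProfile(kind="cpu", ephemeral=True),
    "api_poller": NodeProfile(kind="cpu", ephemeral=True),
}


def allocate(roles: list[str]) -> dict[str, list[str]]:
    """Group roles by hardware pool so each group can be deployed to matching nodes."""
    plan: dict[str, list[str]] = {}
    for role in roles:
        profile = ROLE_PROFILES.get(role)
        if profile is None:
            raise ValueError(f"no hardware profile defined for role {role!r}")
        plan.setdefault(profile.pool, []).append(role)
    return plan


print(allocate(["planner", "retriever", "api_poller"]))
# {'gpu': ['planner'], 'cpu-ephemeral': ['retriever', 'api_poller']}
```

Failing loudly on an unmapped role keeps a new agent from silently landing on the wrong hardware class.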

Configuring the Orchestration Layer

The orchestration layer depends on strict rules to manage distributed tasks. Define task guardrails to prevent infinite loops in cross-server requests; a single circular dependency can exhaust node resources in seconds. Use event-driven control for granular agent management so that handoffs fire only when specific conditions are met. This enables parallel processing for autonomous swarms instead of the strictly sequential flow of a basic CrewAI script. If you're planning to scale, inspect the A2A Linker repository for implementation examples of these orchestration patterns.
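A minimal sketch of the loop guardrail, assuming each cross-server request carries the ordered list of agents that have already handled it (the `chain` field). `TaskRequest`, `DelegationError`, and the five-hop budget are illustrative assumptions, not CrewAI settings. A request is rejected before it is sent if the target already appears in its chain (a cycle) or the chain has reached the hop limit.

```python
from __future__ import annotations

from dataclasses import dataclass

MAX_HOPS = 5  # assumed limit; tune per workflow


class DelegationError(RuntimeError):
    """Raised when a handoff would loop back or exceed the hop budget."""


@dataclass(frozen=True)
class TaskRequest:
    task: str
    chain: tuple[str, ...] = ()  # agents that have already handled this task, in order

    def delegate_to(self, agent: str) -> TaskRequest:
        """Return a copy extended with `agent`, or raise if a guardrail trips."""
        if agent in self.chain:
            raise DelegationError(f"cycle: {' -> '.join(self.chain + (agent,))}")
        if len(self.chain) >= MAX_HOPS:
            raise DelegationError(f"hop limit of {MAX_HOPS} reached")
        return TaskRequest(self.task, self.chain + (agent,))


req = TaskRequest("summarize Q3 leads").delegate_to("planner").delegate_to("researcher")
try:
    req.delegate_to("planner")
except DelegationError as err:
    print(err)  # cycle: planner -> researcher -> planner
```

Because the check runs on the sending side, a circular dependency fails fast with a readable path instead of bouncing between nodes until resources run out.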

Technical Implementation: Configuring Remote Agent Handoffs

Successful remote handoffs in a CrewAI architecture depend on a protocol-agnostic relay system that favors ephemeral memory over persistent logs. Treating data as transient removes the privacy vulnerabilities inherent in durable conversation storage. Your agentic workflows stay secure and performant across distributed nodes without the overhead of heavy orchestration platforms, and the architecture stays clear because agent logic is decoupled from the transport layer.

- Relay-based handshakes: Establish a shared communication protocol that acts as a neutral switchboard between servers. This avoids manual API settings at each node and gives every agent the same format for data exchange.

- Ephemeral state management: Store runtime data in-memory rather than in persistent databases. This reduces the data footprint during handoffs and aligns with a strict zero-log privacy policy.

- Network-aware Skills: Configure each agent with specific network-aware "skills" for cross-machine communication. This allows agents to reason about their own connectivity and delegate tasks based on node proximity or resource availability.

- Latency monitoring: Telemetry must focus on stream latency and connectivity status. Tracking high-throughput streams helps identify bottlenecks in real-time, ensuring the system stays out of the way of the agent's primary reasoning tasks.
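One way to keep telemetry focused on stream latency is a rolling per-link window that flags links whose tail latency crosses a threshold. This is a sketch using only the Python standard library; the link labels, the 100-sample window, and the p95 cutoff are assumptions, and samples stay in memory in keeping with the zero-log approach.

```python
from __future__ import annotations

import statistics
from collections import defaultdict, deque


class LatencyMonitor:
    """Keeps the last `window` handoff latencies per link, in memory only."""

    def __init__(self, window: int = 100) -> None:
        self._samples: dict[str, deque[float]] = defaultdict(lambda: deque(maxlen=window))

    def record(self, link: str, latency_ms: float) -> None:
        self._samples[link].append(latency_ms)

    def p95(self, link: str) -> float | None:
        """95th-percentile latency for a link, or None with fewer than two samples."""
        samples = self._samples.get(link)
        if samples is None or len(samples) < 2:
            return None
        return statistics.quantiles(samples, n=20)[-1]

    def slow_links(self, threshold_ms: float) -> list[str]:
        """Links whose p95 latency exceeds the threshold."""
        return [
            link for link in self._samples
            if (p := self.p95(link)) is not None and p > threshold_ms
        ]


monitor = LatencyMonitor()
for _ in range(19):
    monitor.record("us->eu", 40.0)
monitor.record("us->eu", 400.0)  # one stalled handoff
for _ in range(20):
    monitor.record("us->us", 5.0)
print(monitor.slow_links(threshold_ms=100.0))  # ['us->eu']
```

Tracking a tail percentile rather than the mean surfaces a single stalled handoff that an average would hide.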

Managing Data Streams and Ephemeral State

Architecting distributed multi-agent systems requires a shift from local file-locking to encrypted transit. In-memory state management is a requirement for high-performance systems in 2026: it keeps data packets transient, so they disappear once the handoff is complete. For a deep dive into similar networking patterns, see the LlamaIndex technical analysis. This approach maintains the minimalist "hacker" ethos by prioritizing functional utility over durable storage.
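A minimal in-memory store illustrates the transient behavior: each handoff entry can be read once (`take` removes it) and expires after a time-to-live even if nobody reads it. `EphemeralStore` and the 30-second default TTL are hypothetical; nothing is written to disk, so state does not survive a process restart, which is the intent.

```python
from __future__ import annotations

import time
from typing import Any, Callable


class EphemeralStore:
    """In-memory handoff state: read at most once, expires after `ttl` seconds."""

    def __init__(self, ttl: float = 30.0, clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._data: dict[str, tuple[float, Any]] = {}

    def put(self, key: str, value: Any) -> None:
        self._data[key] = (self._clock() + self._ttl, value)

    def take(self, key: str) -> Any | None:
        """Remove and return the value; missing or expired entries return None."""
        entry = self._data.pop(key, None)
        if entry is None:
            return None
        expires_at, value = entry
        return value if self._clock() < expires_at else None

    def sweep(self) -> int:
        """Drop every expired entry and return how many were removed."""
        now = self._clock()
        expired = [k for k, (exp, _) in self._data.items() if exp <= now]
        for k in expired:
            del self._data[k]
        return len(expired)

    def __len__(self) -> int:
        return len(self._data)


now = [0.0]
store = EphemeralStore(ttl=10, clock=lambda: now[0])
store.put("handoff-1", {"task": "rank leads"})
print(store.take("handoff-1"))  # {'task': 'rank leads'}
print(store.take("handoff-1"))  # None (already consumed)
store.put("handoff-2", "stale")
now[0] = 11.0
print(store.sweep(), len(store))  # 1 0
```

Calling `sweep` periodically bounds memory for handoffs that were never collected.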

Optimizing Throughput for Agent Swarms

Performance degradation in a CrewAI (version 1.14.4) environment often stems from framework-specific bloat. Strip unnecessary metadata from packets to reduce overhead. Use direct terminal-to-terminal links for low-latency command execution, and add retry logic to keep handoffs reliable across unstable remote server connections. A2A Linker supports this by providing a dedicated switchboard with zero API settings, so agents can focus on their specific roles rather than on orchestration overhead. The result is a high-throughput swarm that functions as a single unit across multiple physical machines.
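The metadata stripping and retry logic can be sketched with the standard library. The field names in `DROP_FIELDS` are hypothetical examples of bookkeeping a receiver may not need, and `with_retries` assumes the send function raises `ConnectionError` on a transient relay failure. Exponential backoff with jitter is a common choice here, not something A2A Linker or CrewAI prescribes.

```python
from __future__ import annotations

import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")

# Hypothetical bookkeeping fields that the receiving agent does not need.
DROP_FIELDS = {"debug_trace", "framework_version", "render_hints"}


def strip_metadata(packet: dict) -> dict:
    return {k: v for k, v in packet.items() if k not in DROP_FIELDS}


def with_retries(send: Callable[[], T], attempts: int = 4, base_delay: float = 0.2,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `send`, retrying on ConnectionError with exponential backoff plus jitter."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts - 1):
        try:
            return send()
        except ConnectionError:
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
    return send()  # final attempt: let any ConnectionError propagate


calls = {"n": 0}


def flaky_send() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("relay unreachable")
    return "ack"


packet = strip_metadata({"task": "score lead 42", "debug_trace": "..."})
print(packet)  # {'task': 'score lead 42'}
print(with_retries(flaky_send, sleep=lambda _: None), calls["n"])  # ack 3
```

Jitter spreads retries from many agents so a briefly unavailable relay is not hit by synchronized bursts when it comes back.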

Securing Agent Interactions: Zero-Log Communication Layers

Securing distributed CrewAI interactions requires eliminating persistent data at the transport layer. A zero-log architecture keeps agentic reasoning transient, existing only within the ephemeral runtime state of the active handshake. This approach delivers several critical security benefits for high-performance systems:

- Data Isolation: Private terminal switchboards prevent cross-tenant data leakage by isolating traffic to specific node pairs.

- Surveillance Mitigation: Zero-log protocols ensure the orchestration layer cannot inspect or store conversation payloads.

- Identity Verification: Secure handshake protocols validate agent origins before allowing cross-machine task delegation.

- Autonomy: Self-hosted connectivity nodes remove reliance on third-party "surveillance products" that monetize interaction data.
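For the identity-verification point, a challenge-response check over a pre-shared key is one simple option. This sketch uses Python's `hmac` and `secrets` modules; `HandshakeVerifier`, `respond`, and the agent ID are hypothetical, and key distribution is assumed to happen out of band. It authenticates the connecting node but encrypts nothing, so it would sit alongside an encrypted channel such as TLS rather than replace it.

```python
from __future__ import annotations

import hashlib
import hmac
import secrets


class HandshakeVerifier:
    """Challenge-response check: a node proves it holds the pre-shared key for its agent ID."""

    def __init__(self, keys: dict[str, bytes]) -> None:
        self._keys = keys                     # agent_id -> pre-shared secret
        self._pending: dict[str, bytes] = {}  # agent_id -> outstanding challenge

    def challenge(self, agent_id: str) -> bytes:
        nonce = secrets.token_bytes(32)
        self._pending[agent_id] = nonce
        return nonce

    def verify(self, agent_id: str, response: bytes) -> bool:
        nonce = self._pending.pop(agent_id, None)  # single use, so a replayed response fails
        key = self._keys.get(agent_id)
        if nonce is None or key is None:
            return False
        expected = hmac.new(key, nonce, hashlib.sha256).digest()
        return hmac.compare_digest(expected, response)


def respond(key: bytes, nonce: bytes) -> bytes:
    """What the connecting node computes from the challenge it received."""
    return hmac.new(key, nonce, hashlib.sha256).digest()


key = secrets.token_bytes(32)
verifier = HandshakeVerifier({"retriever-eu": key})
nonce = verifier.challenge("retriever-eu")
print(verifier.verify("retriever-eu", respond(key, nonce)))  # True
print(verifier.verify("retriever-eu", respond(key, nonce)))  # False (challenge already used)
```

Only after `verify` returns `True` would the switchboard accept delegated tasks from that node.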

Enterprise privacy in 2026 demands a shift away from durable conversation storage. When agents collaborate across remote servers, the communication relay must function as a neutral switchboard that cannot "read" or "inspect" the traffic it routes. Combined with encryption in transit, this design addresses the security risks inherent in unencrypted agent handoffs. Stripping away persistent logging shrinks the attack surface of your CrewAI swarm to the active processing window of each node: data that is never stored cannot be breached at rest.

The Architecture of Privacy

Privacy is maintained by ensuring the switchboard is a "quiet enabler" rather than a data harvester. Eliminate the durable storage of reasoning logs at the network level: the relay broker should only facilitate the connection, not record it. This mirrors the Swarms secure connectivity guide, which emphasizes privacy frameworks for distributed networks. If your orchestration layer requires model API access, it holds credentials that become an additional attack target. A2A Linker avoids this by focusing solely on the transport of encrypted packets between your own servers.
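A minimal sketch of a relay that forwards without recording, assuming the endpoints encrypt payloads before handing them over (the Python standard library has no symmetric cipher, so encryption is out of scope here). `BlindRelay` and the node IDs are hypothetical, not A2A Linker's implementation. The relay treats every payload as opaque bytes, holds it only in memory until the recipient collects it, and tracks nothing but a per-recipient message count.

```python
from __future__ import annotations

import queue
from collections import Counter


class BlindRelay:
    """Routes opaque payloads between connected nodes without reading or logging them.

    A payload lives only in the recipient's in-memory queue until collected; the
    only bookkeeping is a per-recipient count of forwarded messages.
    """

    def __init__(self) -> None:
        self._mailboxes: dict[str, queue.Queue[bytes]] = {}
        self.forwarded: Counter[str] = Counter()

    def connect(self, node_id: str) -> None:
        self._mailboxes.setdefault(node_id, queue.Queue())

    def forward(self, recipient: str, ciphertext: bytes) -> None:
        if not isinstance(ciphertext, (bytes, bytearray)):
            raise TypeError("relay accepts only opaque bytes")
        box = self._mailboxes.get(recipient)
        if box is None:
            raise LookupError(f"unknown node {recipient!r}")
        box.put(bytes(ciphertext))
        self.forwarded[recipient] += 1

    def collect(self, node_id: str, timeout: float = 1.0) -> bytes:
        """Block until a payload arrives; raises queue.Empty after `timeout` seconds."""
        return self._mailboxes[node_id].get(timeout=timeout)


relay = BlindRelay()
relay.connect("gpu-us-1")
relay.forward("gpu-us-1", b"\x8f\x02\x1c")  # encrypted by the sender, never decoded here
print(relay.collect("gpu-us-1"), relay.forwarded["gpu-us-1"])  # b'\x8f\x02\x1c' 1
```

Accepting only `bytes` makes it structurally awkward for relay code to start parsing or logging message content.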

Mitigating Surveillance in AI Systems

Avoid platforms that monetize user-agent interaction data. These "surveillance products" create a durable record of your proprietary logic and trade secrets. To stay autonomous, host your own connectivity nodes using a minimalist switchboard. This ensures your orchestration layer remains a common denominator for secure handoffs without hosting models or managing API keys. You don't need a heavy framework to achieve enterprise-grade isolation. You need a dedicated tool that stays out of the way and respects the "hacker" ethos of functional utility. Implement zero-log security with A2A Linker to protect your distributed agentic workflows today.

Optimizing Connectivity with A2A Linker Infrastructure

A2A Linker provides a dedicated transport layer for distributed CrewAI systems. It functions as a neutral switchboard that removes the need for complex API settings at the agent level. This infrastructure gives developers architectural clarity through a minimalist, zero-log protocol, prioritizing functional utility and privacy over the heavy orchestration patterns found in proprietary platforms. Deployment provides the following:

- Deployment of a dedicated switchboard that facilitates secure cross-machine handoffs.

- Utilization of 0-LOG architecture to ensure all agent interactions remain transient.

- Access to free server connection nodes for unlimited scaling of agent swarms without cost overhead.

- Terminal-style management for precise, clinical oversight of the ephemeral runtime state.

The primary technical limitation in standard CrewAI deployments is the assumption of a shared local environment. A2A Linker resolves this by acting as a common denominator for agents residing on disparate hardware. It stays out of the way: it doesn't host models or manage API keys. Instead, it provides the mechanical "how" for agents to reason, delegate, and hand off tasks across the network. This approach is a principled alternative to surveillance products that require durable conversation storage. Your data remains on your servers; the switchboard only facilitates the connection.

Seamless Integration Steps

Integration is designed for speed and technical proficiency. First, clone the repository from GitHub to your local management node. Configure your agents to point to the A2A Linker relay broker for all remote handoffs. This process eliminates the need for manual network tunneling or complex VPN configurations. Verify the connection using the CLI management interface to ensure low-latency throughput. For detailed terminal commands and configuration snippets, consult the technical guide. These steps move your system from a single-machine script to a robust, distributed node network in minutes.
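Alongside the CLI verification described above, a plain TCP reachability probe run from each node can confirm the relay is reachable before agents start handing off work. This is a generic standard-library sketch, not an A2A Linker command; the host and port you pass are placeholders for your own relay broker's address.

```python
from __future__ import annotations

import socket
import time


def check_relay(host: str, port: int, attempts: int = 3, timeout: float = 2.0) -> dict:
    """Open and immediately close TCP connections to the relay.

    Reports whether any attempt succeeded and the fastest connect time in
    milliseconds. No payload is sent.
    """
    latencies: list[float] = []
    for _ in range(attempts):
        start = time.perf_counter()
        try:
            with socket.create_connection((host, port), timeout=timeout):
                latencies.append((time.perf_counter() - start) * 1000)
        except OSError:  # refused, unreachable, DNS failure, or timeout
            continue
    return {
        "reachable": bool(latencies),
        "successes": len(latencies),
        "best_ms": min(latencies) if latencies else None,
    }
```

Calling it as `check_relay("relay.example.internal", 443)` (a hypothetical host) returns a dict with `reachable`, `successes`, and `best_ms`; a node reporting `reachable: False` should not be registered for handoffs.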

Scaling with A2A Linker

Scaling a multi-agent swarm requires interoperability between different LLM providers and server regions. A2A Linker acts as a neutral hub. It allows a reasoning agent on a high-performance GPU node in North America to delegate a search task to a retrieval agent on a CPU node in Europe. This cross-machine capability is achieved without the bloat of giant orchestration platforms. You maintain a minimalist footprint while achieving high-velocity agentic workflows. By leveraging free server connection nodes, you can expand your infrastructure as needed. The system remains deliberately lightweight, ensuring that true power lies in open standards and architectural simplicity. Agents united.

Scaling Distributed Agentic Infrastructure

Architectural clarity in multi-server environments requires a shift from durable conversation storage to ephemeral runtime states. Treating connectivity as a neutral utility keeps your agentic workflows autonomous and secure. Because it prioritizes functional utility over framework bloat, this approach suits a multi-agent system market projected to grow at a 48.6% CAGR through 2034. You maintain total control over the transport layer without introducing unnecessary surveillance risks.

- Decoupled transport: Securing handoffs in a single transport layer removes the risk and complexity of unencrypted, ad hoc handoffs between remote nodes.

- Zero-log protocols: These protect proprietary reasoning from surveillance products by keeping all data transient.

- Minimalist switchboards: These support high-throughput CrewAI workflows without requiring model API access or complex settings.

Infrastructure should be a quiet enabler that stays out of the way. As you scale across global regions, prioritize a transport layer that respects privacy and technical efficiency. A2A Linker provides the necessary switchboard for these secure interactions, functioning as a principled alternative to heavy orchestration platforms. It's time to move beyond local scripts and embrace a truly distributed, cross-machine architecture that values autonomy.

Establish your secure agent-to-agent network with A2A Linker today.

Agents united.

Frequently Asked Questions

Can CrewAI agents run on different servers?

Yes, CrewAI agents can run on separate physical or virtual servers when they communicate through a cross-machine relay broker. While the framework often assumes local execution, a dedicated switchboard lets an agent on a GPU node in North America hand off tasks to an agent on a CPU node in Europe. This distributed model fits a market where cloud deployment accounted for 72.1% of multi-agent systems in 2024, and it keeps your swarm from being tethered to a single machine.

What is a zero-log policy in AI agent communication?

A zero-log policy ensures that no agent-to-agent interaction data is stored on the transport layer. All communication remains in an ephemeral runtime state, disappearing immediately after the handshake is complete. This technical standard eliminates the surveillance risks associated with durable conversation storage. It positions the connectivity tool as a neutral switchboard rather than a data harvester. It's a principled requirement for enterprise-grade privacy in 2026.

How do I secure agent-to-agent handshakes?

Secure handshakes require encrypted relay protocols and identity validation at the node level. By implementing a private terminal switchboard, you isolate traffic and prevent cross-tenant data leakage. This architecture ensures that only authorized agents can delegate tasks or share ephemeral states. It removes the need for complex, model-specific API settings that often create security vulnerabilities in unencrypted environments. Functional utility is maintained through strict handshake logic.

Does CrewAI require a centralized database for agent memory?

No. A centralized database isn't required for cross-machine handoffs, and it often introduces performance bottlenecks. Distributed CrewAI implementations can use ephemeral memory for those handoffs to maintain high velocity. A transient state management system reduces the data footprint and ensures that reasoning logs don't become permanent liabilities. This approach prioritizes architectural clarity and functional utility over heavy, persistent storage, and it avoids the latency of durable database writes.

What is the difference between a framework and a communication switchboard?

A framework defines the logic, roles, and backstories of agents, while a switchboard provides the transport layer for their interactions. CrewAI serves as the orchestration logic; A2A Linker serves as the dedicated switchboard that links those agents across different servers. One manages the "what" and "who," while the other handles the mechanical "how" of connectivity and handoffs. They are complementary components of a distributed agentic stack.

Can I use A2A Linker for free with my CrewAI setup?

Yes, you can utilize the A2A Linker free server connection to link your distributed nodes. This allows for the scaling of agent swarms without incurring the cost overhead associated with managed orchestration platforms. The tool remains deliberately lightweight, providing a principled alternative for independent developers who value autonomy and open-source standards. It's a functional utility designed to stay out of the way while enabling cross-machine collaboration.

How does ephemeral state improve AI agent performance?

Ephemeral state improves performance by eliminating the latency inherent in writing to and reading from a persistent database. Agents process information in-memory, facilitating high-velocity bursts of data during cross-machine handoffs. This technical optimization is essential for maintaining high-throughput workflows in autonomous swarms. It avoids the "durable storage" bottleneck that often slows down complex reasoning tasks in distributed environments. The system stays fast and responsive.

What are the infrastructure requirements for a production-grade CrewAI deployment?

Production-grade deployments require specialized nodes mapped to specific hardware requirements, such as GPU instances for reasoning and CPU instances for retrieval. A secure relay broker must manage the traffic between these nodes to ensure reliable connectivity. Additionally, a zero-log communication layer is necessary to meet enterprise privacy standards. Together, these components keep systems scalable and secure as the multi-agent market grows toward a projected $375.4 billion.