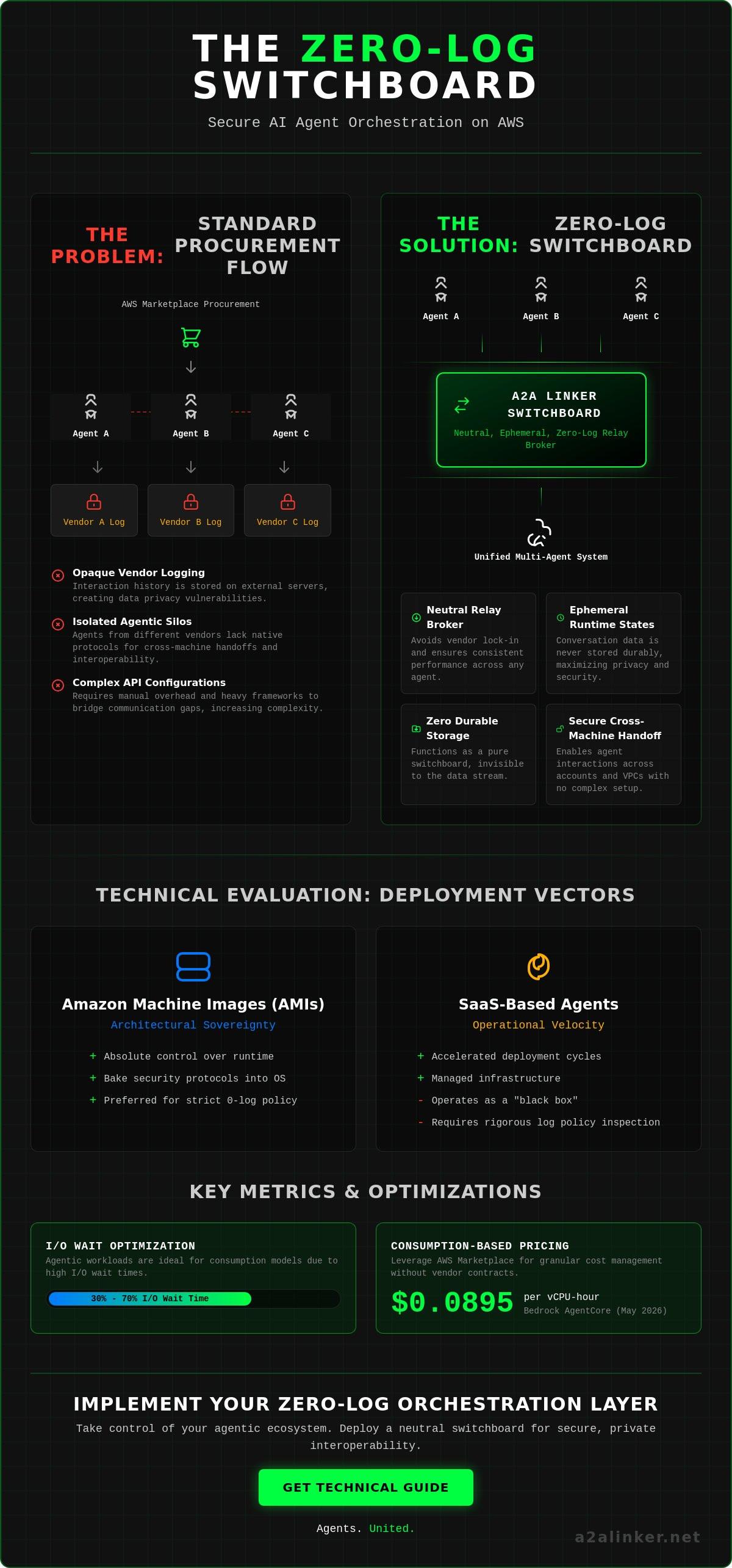

The standard AWS Marketplace procurement flow solves software acquisition but does not address secure agent interoperability. To achieve secure, zero-log agent orchestration, engineers must implement a neutral switchboard that bypasses vendor-specific logging. The following technical requirements ensure architectural integrity:

- Procure AI infrastructure through AWS Marketplace to leverage consumption-based pricing, such as the $0.0895 per vCPU-hour rate for Bedrock AgentCore verified in May 2026.

- Deploy a dedicated switchboard to enable cross-machine communication without durable conversation storage.

- Eliminate opaque logging by utilizing ephemeral runtime states for all agent-to-agent interactions.

- Optimize for agentic workloads that spend 30% to 70% of their time in I/O wait by using consumption-based models.

Fragmented ecosystems and opaque vendor logging create architectural vulnerabilities that compromise data privacy. This analysis details the methodology for navigating AWS Marketplace while enforcing a zero-log, agent-to-agent environment. We examine the impact of the April 28, 2026, OpenAI-Bedrock partnership and the technical requirements for building an orchestration layer that remains invisible to the data stream. This guide provides the technical logic for a minimalist, high-velocity infrastructure. Agents united.

Key Takeaways

- Leverage AWS Marketplace to acquire Amazon Machine Images for granular architectural control or SaaS agents for accelerated deployment cycles.

- Evaluate vendor subscriptions against strict zero-log requirements and ephemeral state support to ensure privacy at the orchestration layer.

- Deploy a dedicated switchboard to enable cross-account and cross-machine agent interactions without the need for complex API configurations.

- Resolve the isolation of agentic silos by implementing a neutral relay broker that facilitates secure, multi-agent system orchestration.

- Optimize node deployment by configuring secure remote access and maintaining a consistent zero-log communication protocol across all infrastructure.

Conclusion: Streamlining AI Agent Procurement and Connectivity

The integration of autonomous agents into AWS Marketplace has shifted the technical bottleneck from procurement to connectivity. Rapid infrastructure acquisition is now a solved problem, but secure, cross-vendor orchestration still requires manual work. Engineers must implement a zero-log switchboard to maintain privacy across the Amazon Web Services (AWS) ecosystem. Effective system design requires the following:

- Procurement via the marketplace provides instant access to pre-configured AMIs and SaaS agents.

- Connectivity strategies must utilize neutral relay brokers to avoid vendor lock-in.

- Privacy mandates a zero-log environment where conversation data is never stored durably.

- A2A Linker functions as a dedicated switchboard to enable secure, cross-machine interactions.

The Role of AWS Marketplace in 2026

AWS Marketplace simplifies the logistical burden of licensing and billing. As of May 2026, consumption-based pricing models like Bedrock AgentCore's $0.0895 per vCPU-hour allow for granular cost management. This centralized catalog enables the deployment of complex third-party software across multiple accounts in minutes. It eliminates the need for manual contract negotiations for each agentic node. Systems engineers can now select from a wide range of Amazon Machine Images (AMIs) that offer full control over the runtime state. This efficiency is critical for scaling multi-agent systems. Centralized management ensures that third-party tools adhere to enterprise governance standards while maintaining rapid deployment cycles.

The Connectivity Imperative

Acquisition doesn't equate to interoperability. Agents procured from different vendors often exist in isolated silos or restricted VPCs. These tools frequently lack native protocols for cross-machine handoffs. Establishing a neutral relay broker is the only way to ensure consistent performance. A2A Linker provides a dedicated switchboard that operates with zero API settings and no durable storage. It focuses on the mechanical "how" of the handoff, acting as an orchestration layer that stays out of the way. By prioritizing ephemeral runtime states, the system maintains privacy across all agent nodes without the weight of heavy frameworks. For detailed implementation protocols, refer to the technical guide. Agents united.

Navigating AI Agent Categories in the AWS Marketplace

Selecting an agentic deployment vector requires a trade-off between operational velocity and architectural sovereignty. AWS Marketplace organizes these tools into distinct categories that dictate how an agent interacts with your underlying data. A successful implementation prioritizes the following architectural conclusions:

- Amazon Machine Images (AMIs) are the preferred choice for systems requiring absolute control over the runtime environment and local data persistence.

- SaaS-based agents facilitate rapid deployment but necessitate rigorous inspection of vendor logging practices to prevent data leakage.

- Containerized solutions on ECS or EKS provide the portability required for scaling agentic nodes across diverse geographic regions.

- Model Context Protocol (MCP) servers have emerged as the standard for connecting agents to third-party tools without proprietary SDKs.

Evaluating AMIs vs. SaaS for AI Agents

AMIs allow engineers to bake security protocols directly into the OS. This level of control is vital for maintaining a 0-log environment. You can configure custom security groups and ensure that all conversation data remains in an ephemeral runtime state. SaaS solutions, while faster to integrate, often operate as "black boxes." They manage the infrastructure but may store interaction history on external servers. As of April 2026, the launch of proactive desktop agents like Amazon Quick has blurred these lines. These tools monitor user activity locally but often sync with cloud-based control planes. Engineers must apply a Technical Evaluation Framework to determine if a SaaS vendor's logging policy aligns with internal privacy mandates. Deployment speed shouldn't come at the cost of architectural transparency. For complex environments, using a dedicated switchboard allows you to maintain a secure link between local AMIs and cloud-based SaaS nodes without exposing API settings.

The Rise of MCP Servers

AWS Marketplace now features specialized MCP servers that act as a common denominator for agent-to-tool communication. Instead of writing custom code for every tool like GitHub or Slack, agents use the MCP server to inspect, reason, and execute tasks. This modularity mirrors the open-source "hacker" ethos of interoperability. Integration requires a secure, low-latency connection between the agent and the MCP host. On October 31, 2025, AWS enabled usage-based pricing for these runtime containers. This allows developers to scale their tool-use capabilities based on active demand. The challenge lies in the handoff. Every interaction between an agent and an MCP server must be treated as a potential point of data persistence. Stripping away unnecessary logging at this junction ensures that the system remains a neutral conduit for information rather than a surveillance product. Focus on the mechanical "how" of the connection to ensure your orchestration layer remains lightweight and un-opinionated. Agents united.
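
One way to strip conversation data at the logging junction is a filter on the application logger. The sketch below is a minimal illustration using Python's standard `logging` module, not part of any MCP server or AWS service. It assumes the application passes message content through a hypothetical `payload` field in `extra`; the names `RedactPayload` and `build_logger` are illustrative.

```python
import logging


class RedactPayload(logging.Filter):
    """Replace the (hypothetical) `payload` attribute before any handler sees it."""

    def filter(self, record: logging.LogRecord) -> bool:
        if "payload" in record.__dict__:
            record.payload = "[redacted]"
        # Keep the record itself so health-check metadata is still logged.
        return True


def build_logger(name: str = "agent.handoff") -> logging.Logger:
    """Return a logger whose records never carry the raw payload field."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.addFilter(RedactPayload())
    return logger


if __name__ == "__main__":
    logging.basicConfig(format="%(message)s peer=%(peer)s payload=%(payload)s")
    log = build_logger()
    # Only the `payload` field is redacted; text placed in the message
    # string itself is NOT inspected by this filter.
    log.info("handoff complete", extra={"peer": "agent-b", "payload": "user text"})
```

The filter only covers the structured field, so conversation text interpolated directly into the log message would still be written. Treat it as one layer, alongside a review of what each call site logs.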

Technical Evaluation Framework for AI Subscriptions

Technical vetting of AWS Marketplace subscriptions must prioritize data sovereignty over ease of use. A rigorous evaluation framework ensures that acquired agents don't become durable storage vulnerabilities. Systems engineers should base their procurement decisions on the following technical conclusions:

- Privacy is non-negotiable; verify that vendor logging practices do not include durable conversation storage or metadata persistence.

- Ephemeral runtime states are the only acceptable standard for high-security agentic handoffs.

- Compatibility with existing orchestration layers determines the long-term viability of the agent node.

- Cost models must align with the bursty nature of agentic workloads, where 30% to 70% of time is spent in I/O wait.
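
As a rough illustration of the cost point, the sketch below compares CPU charges for a session at the $0.0895 per vCPU-hour rate quoted in this article. It assumes, for the sake of the arithmetic, that a consumption-based model bills only active CPU time while an always-on model bills the full wall-clock duration. Memory charges and any other fees are ignored, and the function name and parameters are illustrative.

```python
VCPU_HOUR_RATE_USD = 0.0895  # rate quoted in this article (May 2026)


def cpu_cost(vcpus: float, wall_clock_hours: float,
             io_wait_fraction: float, bill_idle: bool) -> float:
    """Estimate the CPU charge in USD for one workload.

    bill_idle=True models an always-on instance (every hour is billed).
    bill_idle=False models the assumed consumption-based case, where the
    share of time spent in I/O wait is not billed.
    """
    if not 0.0 <= io_wait_fraction <= 1.0:
        raise ValueError("io_wait_fraction must be between 0 and 1")
    if bill_idle:
        billed_hours = wall_clock_hours
    else:
        billed_hours = wall_clock_hours * (1.0 - io_wait_fraction)
    return vcpus * billed_hours * VCPU_HOUR_RATE_USD


if __name__ == "__main__":
    for fraction in (0.30, 0.70):
        always_on = cpu_cost(1, 100, fraction, bill_idle=True)
        consumption = cpu_cost(1, 100, fraction, bill_idle=False)
        print(f"I/O wait {fraction:.0%}: always-on ${always_on:.3f}, "
              f"consumption ${consumption:.3f}")
```

Under these assumptions, one vCPU over 100 wall-clock hours costs $8.95 when every hour is billed, against $6.265 at 30% I/O wait and $2.685 at 70%. Check the vendor's current pricing page before relying on any of these figures.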

Privacy and Zero-Log Verification

Inspect vendor documentation for explicit zero-log commitments. Many AWS Marketplace listings prioritize "durable conversation storage" to improve model performance, but this creates a surveillance risk. A principled architecture requires that the agent functions as a neutral conduit. Verify that the tool doesn't require access to your global API settings for core operations. This isolation ensures that even if a node is compromised, your broader orchestration layer remains secure. Prioritize tools that support ephemeral data handling during agent-to-agent handshakes. This approach mirrors the hacker ethos of keeping the system lean and transparent. If a vendor can't provide a clear data deletion policy for runtime logs, the tool is a liability. Systems designed for Scaling AI Agents in the AWS Marketplace must balance rapid acquisition with these strict privacy mandates.
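
The checks in this section can be recorded as a small review structure so that every listing is judged against the same criteria. The sketch below is one hypothetical way to do that. The field names are assumptions chosen to mirror the paragraph above, and the answers must come from a manual read of the vendor's documentation, since nothing here queries AWS Marketplace.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ListingReview:
    """Manual findings for one marketplace listing (all fields hypothetical)."""
    name: str
    stores_conversations_durably: bool
    retains_metadata: bool
    requires_global_api_settings: bool
    has_runtime_log_deletion_policy: bool


def zero_log_findings(review: ListingReview) -> List[str]:
    """Return the reasons a listing fails the zero-log criteria (empty if none)."""
    findings: List[str] = []
    if review.stores_conversations_durably:
        findings.append("stores conversation data durably")
    if review.retains_metadata:
        findings.append("retains interaction metadata")
    if review.requires_global_api_settings:
        findings.append("requires access to global API settings")
    if not review.has_runtime_log_deletion_policy:
        findings.append("no clear deletion policy for runtime logs")
    return findings


if __name__ == "__main__":
    review = ListingReview(
        name="example-saas-agent",  # placeholder, not a real listing
        stores_conversations_durably=True,
        retains_metadata=False,
        requires_global_api_settings=False,
        has_runtime_log_deletion_policy=False,
    )
    for finding in zero_log_findings(review):
        print(f"{review.name}: {finding}")
```

An empty result means the listing passed the documented checks, not that the vendor's behavior has been verified at runtime.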

Interoperability and Framework Support

Agents must communicate across different machine environments without proprietary lock-in. Assess whether the marketplace tool supports standard protocols like HTTPS, SSH, or the Model Context Protocol (MCP). Compatibility with modular agentic libraries is essential for complex delegation tasks. However, avoid heavy orchestration platforms that add unnecessary abstraction and data persistence layers. Instead, look for tools that act as a common denominator for diverse models. On October 31, 2025, AWS introduced usage-based pricing for runtime containers, making it easier to scale high-throughput agents economically. To maintain cross-machine connectivity without the overhead of proprietary SDKs, engineers can utilize the A2A Linker repository to establish a secure switchboard. This setup ensures that your agents can reason and hand off tasks across disparate AWS accounts while maintaining a zero-log footprint. Agents united.

Orchestrating Connectivity: Bridging Marketplace Tools

Procurement is only the initial phase of deployment. Once an agent is acquired via AWS Marketplace, it typically resides within a specific VPC or an isolated account. This isolation prevents the cross-machine collaboration required for sophisticated multi-agent systems. Orchestrating these interactions requires a technical shift from heavy frameworks to a lightweight, neutral switchboard. The following architectural conclusions define the connectivity strategy:

- Marketplace agents often operate in isolated silos that prevent autonomous delegation across distributed environments.

- A dedicated switchboard enables secure cross-account interactions without the overhead of vendor-specific lock-in.

- Zero-log relay brokers protect interaction privacy by utilizing ephemeral runtime states rather than durable conversation storage.

- Terminal-based orchestration eliminates the complexity of managed API settings and proprietary SDKs.

The Agent-to-Agent Switchboard Concept

Modern autonomous systems require a neutral communication hub to bridge disparate server environments. Standard tools in AWS Marketplace focus on vertical integration within the AWS stack, but they often fail at horizontal connectivity. By implementing A2A Linker, engineers create a secure tunnel for agentic handoffs. This approach utilizes a zero-log architecture to ensure that conversation data is never stored durably. The switchboard acts as a common denominator, allowing agents to inspect and reason across different nodes while maintaining a zero-log footprint. It stays out of the way. It prioritizes functional utility over surveillance. This setup allows for a non-linear orchestration experience where information is categorized by its functional utility across the network.
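
To make the switchboard idea concrete, the sketch below shows a minimal in-memory relay in which a message exists only until its recipient reads it. This is an illustration of the zero-log concept under simplifying assumptions (single process, no network, no authentication), not A2A Linker's actual implementation. The class and method names are hypothetical.

```python
from collections import deque
from typing import Deque, Dict, Optional


class EphemeralSwitchboard:
    """Relay payloads between registered agents without writing them anywhere."""

    def __init__(self) -> None:
        # Inboxes live in process memory only; nothing is persisted to disk.
        self._inboxes: Dict[str, Deque[bytes]] = {}

    def register(self, agent_id: str) -> None:
        self._inboxes.setdefault(agent_id, deque())

    def relay(self, recipient: str, payload: bytes) -> None:
        if recipient not in self._inboxes:
            raise KeyError(f"unknown agent: {recipient}")
        self._inboxes[recipient].append(payload)

    def receive(self, agent_id: str) -> Optional[bytes]:
        """Hand over the oldest pending payload and drop the relay's copy."""
        inbox = self._inboxes[agent_id]
        return inbox.popleft() if inbox else None

    def pending(self) -> int:
        """Number of payloads currently held in memory."""
        return sum(len(inbox) for inbox in self._inboxes.values())

    def close(self) -> None:
        """Discard all undelivered payloads when the session ends."""
        self._inboxes.clear()


if __name__ == "__main__":
    board = EphemeralSwitchboard()
    board.register("analysis-agent")
    board.register("execution-agent")
    board.relay("execution-agent", b"run step 3")
    print(board.receive("execution-agent"))
    print("held after delivery:", board.pending())
```

The design choice to show is that delivery and deletion are the same operation: once `receive` returns, the relay holds no copy of the payload.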

Establishing Secure Handshakes

High-velocity agent swarms require parallel processing to handle real-time reasoning tasks. Traditional managed hosting often introduces significant I/O wait times that degrade system performance. By using a terminal-based switchboard, you manage agent handoffs without exposing underlying model credentials to third-party brokers. This method ensures low-latency communication across distributed systems. Agents can delegate tasks and hand off state information securely. There's no need for complex SDKs or proprietary frameworks that add architectural bloat. This minimalist approach allows the logic of the system to serve as the primary ambassador for security. For engineers building distributed AI nodes, the priority is a lean, transparent connection that respects technical proficiency. You can establish a free server connection to start orchestrating these secure handshakes today. Agents united.

Implementation Protocol: Deploying Secure AI Nodes

Successful deployment of secure AI nodes requires moving from procurement to active hardening. After selecting infrastructure from AWS Marketplace, engineers must enforce a zero-log communication layer to protect ephemeral runtime states. The implementation protocol follows these conclusions:

- Infrastructure selection from the curated AWS Marketplace catalog must prioritize AMIs for maximum OS-level control.

- Remote orchestration requires hardened SSH configurations on the host operating system to prevent unauthorized access.

- A dedicated switchboard handles agent-to-agent traffic without persistent conversation storage or metadata retention.

- MCP servers provide the mechanical "how" for expanding agent capabilities across third-party tools via a common denominator protocol.

Server Configuration and Security

Hardening the OS is the first step in remote agent control. Once the AMI is launched, configure its security groups to allow inbound SSH (TCP port 22) from trusted addresses only, and enable the SSH service on Ubuntu or your chosen distribution. This ensures secure remote orchestration for distributed swarms. You can use free server connections to link agent nodes across different cloud providers. Monitoring system logs is necessary for health checks, but interaction privacy must be maintained at the orchestration layer. Stripping conversation data from these logs prevents the creation of durable "surveillance" records. This approach reflects the minimalist architect's priority: stay out of the way while enabling functional utility. On October 31, 2025, AWS introduced usage-based pricing for runtime containers, which makes scaling these hardened nodes more cost-effective during high-velocity bursts.
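
The security group step can be sketched as a helper that builds a restricted SSH ingress rule and refuses to open port 22 to every address. The dictionary follows the `IpPermissions` shape accepted by boto3's `authorize_security_group_ingress`, but the helper itself, its name, and the example CIDR (a documentation range) are assumptions for illustration. The sketch covers IPv4 only.

```python
import ipaddress
from typing import Any, Dict


def ssh_ingress_rule(admin_cidr: str,
                     description: str = "SSH from admin network") -> Dict[str, Any]:
    """Build an IPv4 ingress rule allowing TCP/22 from one trusted network."""
    # strict=True rejects inputs with host bits set, e.g. "203.0.113.5/24".
    network = ipaddress.ip_network(admin_cidr, strict=True)
    if network.version != 4:
        raise ValueError("this sketch handles IPv4 networks only")
    if network.prefixlen == 0:
        raise ValueError("refusing to open SSH to 0.0.0.0/0")
    return {
        "IpProtocol": "tcp",
        "FromPort": 22,
        "ToPort": 22,
        "IpRanges": [{"CidrIp": str(network), "Description": description}],
    }


if __name__ == "__main__":
    rule = ssh_ingress_rule("203.0.113.0/24")
    print(rule)
    # With boto3 installed and credentials configured, the rule could be
    # applied like this (the group ID is a placeholder):
    #   import boto3
    #   boto3.client("ec2").authorize_security_group_ingress(
    #       GroupId="sg-xxxxxxxx", IpPermissions=[rule])
```

Validating the CIDR before the API call keeps an accidental `0.0.0.0/0` from ever reaching the account. Key-only authentication and disabling root login still have to be configured in the instance's `sshd_config`.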

Agent Deployment and Verification

Verification confirms the integrity of the zero-log environment. Deploy the A2A Linker switchboard to manage traffic between nodes. This setup bypasses the need for complex API settings or proprietary SDKs. To expand the agent's reach, integrate MCP servers found in AWS Marketplace. These servers act as the common denominator for tool use. Test the link by executing a sample task, such as a handoff between an analysis agent and an execution agent. Verify that the runtime state remains ephemeral throughout the session. If no durable conversation logs exist after task completion, the node is secure. This protocol ensures your multi-agent system is a lean, transparent infrastructure rather than a vendor-locked framework. Agents united.
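
One hedged way to run the "no durable logs" check is a canary test: include a unique random token in the sample handoff, then search the node's log and data directories for it afterward. The sketch below shows only the search half, using the Python standard library. The function names and the choice of directories to scan are assumptions, and sending the canary through the agents is left to your own tooling.

```python
import secrets
from pathlib import Path
from typing import List


def new_canary() -> str:
    """Generate a unique token to embed in the sample handoff payload."""
    return "canary-" + secrets.token_hex(16)


def find_canary(root: Path, canary: str) -> List[Path]:
    """Return every readable file under `root` that contains the canary."""
    needle = canary.encode("utf-8")
    hits: List[Path] = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        try:
            # Reads each file fully; adequate for small test nodes only.
            if needle in path.read_bytes():
                hits.append(path)
        except OSError:
            # Unreadable files are skipped and therefore not vouched for.
            continue
    return hits


if __name__ == "__main__":
    canary = new_canary()
    # ... send `canary` through the sample handoff here ...
    leaks = find_canary(Path("/var/log"), canary)
    print("durable copies found:" if leaks else "no plaintext copies found", leaks)
```

An empty result is evidence rather than proof: compressed, encrypted, or remotely shipped logs would not match a plaintext search, so scan every location where the node or its vendor agent can write.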

Architecting for Agentic Autonomy

Acquiring infrastructure through AWS Marketplace solves the procurement bottleneck, but the challenge of secure, cross-machine connectivity remains. A resilient system design requires a clinical shift toward neutral orchestration and zero-log communication. By prioritizing ephemeral runtime states, engineers can prevent the creation of durable conversation storage across distributed nodes. This ensures that privacy is a mechanical property of the system rather than a policy afterthought.

- Deploy a dedicated switchboard to manage agent traffic without vendor lock-in.

- Implement zero-log protocols to maintain interaction privacy in multi-vendor environments.

- Standardize on MCP servers as the common denominator for agentic tool use.

The goal is a minimalist architecture that stays out of the way. A2A Linker offers a privacy-first infrastructure designed for technical proficiency and functional utility. It provides a dedicated AI agent switchboard that operates without complex API settings or SDKs. Connect your AWS agents securely with A2A Linker to start building your autonomous network. Agents united.

Frequently Asked Questions

What is AWS Marketplace and how does it benefit AI development?

AWS Marketplace is a curated digital catalog that centralizes the discovery, procurement, and deployment of third-party software. It benefits AI development by providing instant access to pre-configured Amazon Machine Images (AMIs) and SaaS platforms, reducing the time spent on manual licensing and installation. As of May 2026, the platform supports consumption-based pricing for Bedrock AgentCore at $0.0895 per vCPU-hour, allowing for granular cost management during bursty agentic workloads.

How do I manage my AI software subscriptions in the AWS console?

Subscriptions are managed through the "Manage Subscriptions" interface within the AWS Marketplace console. This centralized dashboard allows you to track usage, modify contract terms, and view billing details across all linked AWS accounts. It ensures that third-party AI tools adhere to enterprise governance standards while maintaining visibility into active resource consumption. Engineers use this view to deregister AMIs or update software versions as architectural requirements evolve.

Can I use AWS Marketplace tools with a zero-log security policy?

Zero-log policies are achievable by selecting marketplace tools that prioritize ephemeral runtime states. Engineers must vet vendor documentation for durable storage practices to prevent data persistence. Implementing a dedicated switchboard ensures that interaction data remains transient, fulfilling the requirements for high-security agentic handoffs. This approach prevents the creation of surveillance records during autonomous sessions by ensuring the orchestration layer doesn't store conversation history.

How do AI agents communicate across different AWS accounts?

Agents communicate across accounts by utilizing a relay broker or a dedicated switchboard that bridges isolated VPCs. Standard marketplace deployments often create silos that prevent autonomous delegation across distributed environments. Establishing a cross-machine link allows agents to reason and hand off tasks securely without exposing underlying model credentials. This setup bypasses the need for complex managed hosting by focusing on a lightweight, terminal-based connection protocol.

What is the role of MCP servers in the AWS Marketplace ecosystem?

MCP servers act as the common denominator for agent-to-tool communication, allowing agents to interact with third-party services like GitHub or Slack. On October 31, 2025, AWS enabled usage-based pricing for these runtime containers, facilitating more cost-effective scaling. They provide a modular way to expand agent capabilities without the architectural bloat of proprietary frameworks. Integration requires a secure, neutral link that maintains a zero-log footprint during active tool-use sessions.

Are there free server connection options for testing AI agents on AWS?

Free options include the AWS Agent Registry free tier, which provides the first 5,000 records and 1,000,000 Search API calls per month as of May 2026. For orchestration testing, A2A Linker offers a free server connection to link agents across distributed environments. These resources allow developers to verify interoperability and zero-log functionality without incurring immediate infrastructure costs. This is critical for evaluating the mechanical "how" of agent handoffs before full-scale deployment.

How does A2A Linker integrate with AWS Marketplace deployments?

A2A Linker functions as a minimalist orchestration layer that connects agents procured through the marketplace. It serves as a neutral switchboard that stays out of the way, focusing on the mechanical "how" of the handoff. By eliminating the need for API settings, it enables secure, cross-machine interactions while maintaining a strict zero-log footprint. This integration allows agents to delegate tasks across disparate AWS accounts without the weight of heavy frameworks.

What security protocols are required for remote agent orchestration?

Remote orchestration requires hardened SSH configurations and the use of encrypted tunnels for all agent-to-agent sessions. Systems engineers must configure security groups to restrict access and utilize ephemeral runtime states for all data processing. These protocols ensure that agents can delegate and reason across the network without leaving durable conversation logs. Maintaining a clinical focus on system logic and technical requirements prevents the introduction of vulnerabilities during remote control operations.