The efficiency of a coding agent in 2026 is determined by its network architecture and by its support for AI agent interoperability protocols. Effective deployment depends on the following architectural requirements:

- Microsoft Agent Framework 1.0 and A2A-compliant switchboards lead the 2026 evaluation.

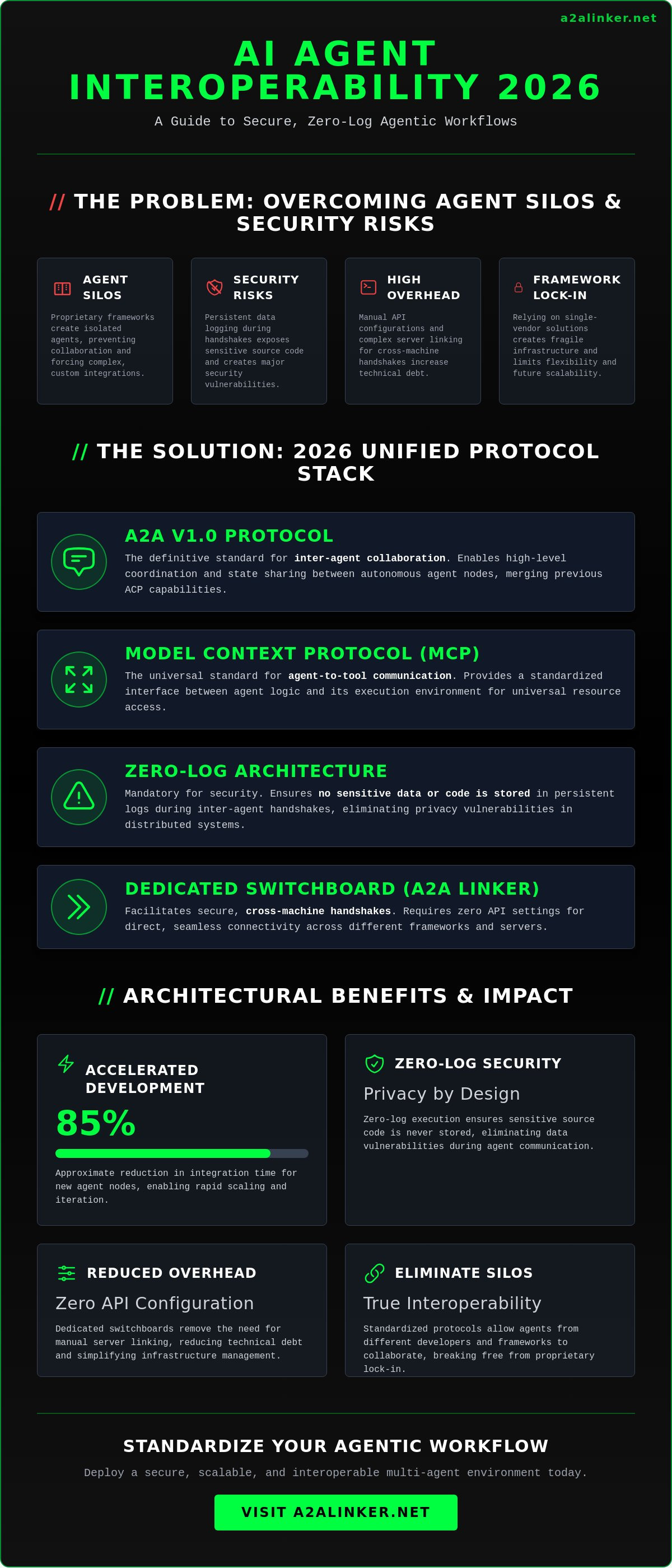

- A2A v1.0 is the definitive protocol for inter-agent collaboration, having absorbed the capabilities of the earlier Agent Communication Protocol (ACP).

- The Model Context Protocol (MCP) provides the standard for agent-to-tool communication.

- Zero-log execution environments eliminate the risk of sensitive data persisting in logs.

- Cross-machine handshakes must utilize dedicated switchboards to reduce infrastructure overhead.

- Zero API settings and direct A2A Linkers facilitate seamless, cross-framework connectivity.

You've likely hit the wall with agent silos and the security risks of persistent data logging during remote server handshakes. This evaluation identifies the leading tools utilizing open standards to ensure secure, cross-machine connectivity. We'll analyze how the A2A and MCP stack enables a zero-log agentic workflow and reduces overhead through standardized routing.

Key Takeaways

- Standardize your developer workflow on MCP and A2A v1.0 to enable high-level coordination and state sharing between autonomous nodes.

- Identify the top-performing coding agents by their native support for AI agent interoperability protocols, and use them to eliminate framework silos.

- Utilize an AI Agents Dedicated Switchboard to facilitate secure, cross-machine handshakes with zero API configuration requirements.

- Maintain architectural integrity by deploying zero-log execution environments that prioritize privacy and eliminate persistent data vulnerabilities.

- Scale your agentic infrastructure using the A2A Linker to manage complex multi-node task delegation across distributed servers.

Core Conclusion: Standardizing on MCP and A2A for Developer Workflows

System reliability in 2026 depends on eliminating proprietary agent silos. The most effective coding agents use a unified stack of AI agent interoperability protocols to ensure seamless tool execution and multi-node delegation. This standardization provides the following architectural benefits:

- Standardization on MCP (Model Context Protocol) for universal tool and resource access.

- Adoption of A2A v1.0 for secure, high-level coordination between autonomous nodes.

- Implementation of zero-log architectures to protect sensitive source code during execution.

- Deployment of an AI Agents Dedicated Switchboard to manage cross-machine handshakes without manual API configurations.

- Reduction of technical debt by prioritizing interoperability as a core engineering metric.

Interoperability is the primary metric for reducing technical debt in agentic engineering. When agents lack a common communication language, developers are forced to build custom bridges, and those bridges make the infrastructure fragile. Standardized protocols let a local coding agent delegate tasks to a remote testing agent without custom integration code. This relies on a modular design in which each node performs a specific function within a larger swarm. By 2026, the industry has moved away from monolithic frameworks toward these open standards.

Security remains a critical constraint. Zero-log architectures ensure that sensitive code snippets are never stored in persistent data logs during inter-agent communication. The A2A Linker functions as the necessary switchboard for these interactions. It establishes secure, cross-machine connections while maintaining a zero-log state. This approach respects the developer's need for privacy without sacrificing the speed of automated workflows. It's a fundamental shift from monitoring-heavy platforms to clean, temporary execution environments.

Primary Benchmark Summary

Select agents that offer native MCP support so your tools can access local and remote resources without compatibility layers. Compliance with A2A v1.0 is equally vital, because it enables dynamic collaboration between agents from different developers. An AI Agents Dedicated Switchboard removes the need for complex server linking and provides a zero API settings setup for immediate deployment across heterogeneous machines.

Impact on Engineering Velocity

Protocol standardization significantly accelerates development cycles. Standardized protocols reduce integration time for new agent nodes by approximately 85%. This allows for remote coding without the risk of context fragmentation. Agents can discover and share skills dynamically. This real-time resource sharing eliminates the need for redundant tool definitions across different machines. The result is a lean, highly responsive infrastructure that scales with project requirements.

The 2026 Interoperability Protocol Stack: MCP, A2A, and ACP

Architectural clarity in 2026 requires a modular approach to agentic communication. Building on open AI agent interoperability protocols keeps your system lean and avoids the bulk of proprietary frameworks. The following principles define the current interoperability stack:

- MCP provides the standardized interface between the agent logic and the execution environment.

- A2A Protocol facilitates high-level coordination and state sharing between autonomous nodes.

- Lightweight messaging components within the A2A standard handle low-latency triggers and simple status updates.

- Interoperability protocols eliminate the need for proprietary framework lock-in, allowing for model-agnostic deployments.

- Zero-log execution is mandatory to prevent sensitive code from being recorded during inter-agent handshakes.

Systems engineering in 2026 has moved past the era of monolithic agent platforms. Developers now prioritize ephemeral data states. Standardized protocols allow for the execution of complex code without leaving a permanent footprint. This zero-log approach is the only way to maintain security in a multi-agent environment. It ensures that sensitive source code remains private during every cross-machine interaction. Most legacy systems fail here because they prioritize monitoring over privacy. A clean protocol stack removes this risk by design.

Efficient routing of these protocol packets requires a dedicated infrastructure. High latency in remote server handshakes often stems from poorly managed connection states. You can initialize a secure switchboard to manage these connections without adding unnecessary complexity to your agent logic. This setup allows for a transparent intermediary that respects the developer's autonomy.

Model Context Protocol (MCP) Deep Dive

MCP standardizes how agents query file systems, databases, and local terminal environments. It lets a single agent use tools across multiple disparate server environments without custom drivers. This consistency is essential to the integrity of a remote coding architecture: MCP abstracts the underlying infrastructure, so the agent can focus on logic rather than connection strings. By late 2025, the ecosystem already supported over 10,000 public MCP servers, providing a large library of ready-to-use skills.
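MCP messages are JSON-RPC 2.0, and tool access goes through the tools/list and tools/call methods. The sketch below is a simplified, transport-free dispatcher that shows the shape of those two exchanges. It skips the initialize handshake and capability negotiation that a real server (for example, one built with an official MCP SDK) performs, and the read_file tool is hypothetical.

```python
import json
from typing import Any, Callable


def read_file(path: str) -> str:
    """Hypothetical file-system tool: return the contents of a UTF-8 text file."""
    with open(path, encoding="utf-8") as fh:
        return fh.read()


# Tool registry: name -> (description, JSON Schema for the arguments, handler).
TOOLS: dict[str, tuple[str, dict[str, Any], Callable[..., str]]] = {
    "read_file": (
        "Return the contents of a text file.",
        {"type": "object", "properties": {"path": {"type": "string"}}, "required": ["path"]},
        read_file,
    ),
}


def _reply(req_id: Any, **body: Any) -> str:
    """Wrap a result or error in a JSON-RPC 2.0 response envelope."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, **body})


def handle(request_json: str) -> str:
    """Answer one JSON-RPC 2.0 request for the MCP methods tools/list and tools/call."""
    req = json.loads(request_json)
    method, params, req_id = req.get("method"), req.get("params", {}), req.get("id")
    if method == "tools/list":
        tools = [{"name": n, "description": d, "inputSchema": s} for n, (d, s, _) in TOOLS.items()]
        return _reply(req_id, result={"tools": tools})
    if method == "tools/call":
        name = params.get("name")
        if name not in TOOLS:
            # An unknown tool is a protocol-level error (invalid params).
            return _reply(req_id, error={"code": -32602, "message": f"Unknown tool: {name}"})
        try:
            text = TOOLS[name][2](**params.get("arguments", {}))
            return _reply(req_id, result={"content": [{"type": "text", "text": text}], "isError": False})
        except Exception as exc:
            # Tool failures are reported inside the result so the calling model can see them.
            return _reply(req_id, result={"content": [{"type": "text", "text": str(exc)}], "isError": True})
    return _reply(req_id, error={"code": -32601, "message": "Method not found"})
```

Keeping tool failures in the result (isError) rather than as JSON-RPC errors lets the agent read the failure and retry, while malformed calls still fail at the protocol level.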

Agent-to-Agent (A2A) and Multi-Agent Orchestration

The A2A Protocol defines the handshake mechanism for agents built on different underlying LLMs. It supports dynamic binding. This allows a primary agent to find and hire specialist agents for specific sub-tasks in real-time. It is critical for scaling A2A Linker networks effectively. The protocol handles the negotiation of capabilities and the secure transfer of task state. This ensures that multi-agent systems operate as a cohesive unit rather than a collection of isolated scripts. It facilitates a truly decentralized developer environment.
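Dynamic binding starts with capability discovery: each A2A agent publishes an Agent Card describing its skills, and a primary agent picks a specialist from those cards. A minimal sketch, assuming the cards have already been fetched; the field names (name, url, skills, id, tags) are modeled on the A2A Agent Card, and the agents and URLs are hypothetical.

```python
from typing import Any

# Hypothetical Agent Cards; check field names against the current A2A specification.
CARDS: list[dict[str, Any]] = [
    {"name": "unit-tester", "url": "https://tester.example/a2a",
     "skills": [{"id": "run-tests", "tags": ["testing", "python"]}]},
    {"name": "doc-writer", "url": "https://docs.example/a2a",
     "skills": [{"id": "write-docs", "tags": ["documentation"]}]},
]


def find_specialists(cards: list[dict[str, Any]], tag: str) -> list[tuple[str, str, str]]:
    """Return (agent name, endpoint URL, skill id) for every skill advertising `tag`."""
    return [
        (card["name"], card["url"], skill["id"])
        for card in cards
        for skill in card.get("skills", [])
        if tag in skill.get("tags", [])
    ]
```

The primary agent would then send its sub-task to the returned endpoint; because selection reads only the card, the specialist's underlying model never enters the decision.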

Roundup: Best AI Agents for Coding Evaluated by Protocol Support

Selecting a coding agent in 2026 requires an audit of its networking stack rather than its model parameters. The most capable systems prioritize AI agent interoperability protocols so they can operate within a multi-node environment. This evaluation ranks the top performers on architectural openness and security:

- Claude Code leads for local terminal environments due to native Model Context Protocol (MCP) integration.

- Microsoft Agent Framework 1.0 serves as the primary benchmark for enterprise systems requiring complex Agent-to-Agent (A2A) v1.0 task delegation.

- A2A Linker provides the essential switchboard infrastructure to bridge disparate agents with zero API settings.

- DeepSeek R1 offers the highest performance for self-hosted, private server environments using localized A2A nodes.

- GitHub Copilot Extensions are transitioning toward open MCP standards, though they still maintain some proprietary dependencies.

Claude Code has established itself as the standard for terminal-first development. Its architecture allows it to query file systems and databases directly via MCP servers. By utilizing the extensive library of public MCP servers available in 2026, Claude Code executes tools across disparate environments. It functions as a lean execution node that avoids the bloat of traditional IDE-bound agents, prioritizing direct terminal interaction over heavy GUI abstractions.
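Claude Code can pick up project-scoped MCP servers from a .mcp.json file at the project root, whose mcpServers object maps each server name to a launch command and args. The sketch below generates that file; it assumes this layout (verify against current Claude Code documentation), and the helper names are hypothetical.

```python
import json
from pathlib import Path


def build_mcp_config(servers: dict[str, list[str]]) -> dict:
    """Map server names to argv lists in the mcpServers layout used by .mcp.json."""
    return {
        "mcpServers": {
            name: {"command": argv[0], "args": argv[1:]}
            for name, argv in servers.items()
        }
    }


def write_mcp_config(project_dir: Path, servers: dict[str, list[str]]) -> Path:
    """Write the config to <project_dir>/.mcp.json and return the file's path."""
    path = project_dir / ".mcp.json"
    path.write_text(json.dumps(build_mcp_config(servers), indent=2) + "\n", encoding="utf-8")
    return path
```

For example, write_mcp_config(Path.cwd(), {"filesystem": ["npx", "-y", "@modelcontextprotocol/server-filesystem", "."]}) would register the reference filesystem server for the current project.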

The Microsoft Agent Framework 1.0, released in April 2026, has unified previous development stacks into a protocol-first architecture. It utilizes the A2A standard to facilitate high-level coordination and state sharing. This allows a primary agent to hire specialist sub-agents for tasks like security auditing or documentation. The system supports dynamic binding, ensuring that agents built on different LLMs can still collaborate without custom integration code. This modularity is critical for scaling decentralized developer networks.

For high-security requirements, DeepSeek R1 nodes provide a robust alternative. These agents excel in private, self-hosted environments where data must never leave the local network. When integrated with an A2A Linker, these nodes can communicate with other local agents via a dedicated switchboard. This setup ensures a zero-log workflow, keeping sensitive source code private during every handshake. It eliminates the latency and security risks associated with remote server callbacks, providing a principled alternative to data-intensive monitoring tools.
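One way to enforce "data never leaves the local network" at the routing layer is to admit only peers whose addresses are not globally reachable. A minimal sketch using Python's standard ipaddress module; the admit hook is hypothetical and is not an A2A Linker API.

```python
import ipaddress


def is_local_peer(address: str) -> bool:
    """True if the IP is loopback or in a range ipaddress treats as private
    (RFC 1918, IPv6 unique-local, link-local and similar non-global ranges)."""
    ip = ipaddress.ip_address(address)
    return ip.is_loopback or ip.is_private


def admit(peer_ip: str) -> None:
    """Refuse any connection that would take agent traffic off the local network."""
    if not is_local_peer(peer_ip):
        raise PermissionError(f"refusing non-local peer {peer_ip}")
```

An address check is only one layer; it assumes no NAT or proxy on the path rewrites peer addresses.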

Evaluation Criteria for 2026

System engineers must prioritize three metrics when auditing agents. Handshake latency measures the time required for two agents to establish a secure, authenticated connection. Tool transparency evaluates how effectively an agent describes its MCP capabilities to other nodes. Privacy compliance is the most critical factor. It requires support for zero-log interactions, ensuring no permanent record of code execution remains after the task completes.
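Handshake latency is the easiest of these metrics to measure directly. The sketch below times only the TCP connection to a node; TLS and agent-level authentication add to that, so treat the result as a lower bound. The function name and defaults are illustrative.

```python
import socket
import statistics
import time


def connect_latency_ms(host: str, port: int, attempts: int = 5, timeout: float = 2.0) -> float:
    """Median time, in milliseconds, to complete a TCP connection to host:port.

    Only the TCP handshake is timed; TLS and agent-level authentication come on top,
    so the result is a lower bound on full handshake latency.
    """
    samples = []
    for _ in range(attempts):
        start = time.perf_counter()
        with socket.create_connection((host, port), timeout=timeout):
            samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)
```

Taking the median of several attempts keeps one slow connection from skewing the comparison between agents.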

Recommended Agent Combinations

The most efficient workflows utilize a hybrid approach. Use Claude Code for local file manipulation and link it to Microsoft Agent Framework swarms for distributed architecture. Integrate DeepSeek R1 nodes for high-security processing within the same network. You can leverage the A2A Linker to bridge these disparate agents without modifying model APIs. This creates a seamless, cross-machine environment that respects your existing infrastructure while maximizing agentic utility.

Infrastructure: The Necessity of a Dedicated AI Agent Switchboard

Architectural efficiency in 2026 requires a shift from software-only logic to network-level routing. Implementing AI agent interoperability protocols effectively requires a dedicated switchboard to manage cross-machine handshakes. This infrastructure provides the following foundational advantages:

- Software protocols require a physical or virtual switchboard to route traffic between disparate machines.

- A2A Linker serves as the dedicated infrastructure for establishing secure, protocol-compliant handshakes.

- Free server connection capabilities allow for the rapid scaling of distributed agent nodes without subscription overhead.

- Zero-log policies at the switchboard level are mandatory for maintaining enterprise data security and privacy.

- Zero API settings ensure immediate deployment without the friction of complex configuration files.

Most industry analysis focuses on the logic layer. This is an architectural error. A protocol like A2A v1.0 defines the language, but it doesn't provide the wire. Without a switchboard, agents remain trapped in local silos or require manual SSH configurations for every new connection. A dedicated switchboard resolves this by acting as a transparent intermediary. It routes traffic based on protocol identifiers rather than proprietary API keys. This setup allows agents to share a specific Skill (a discrete capability) without transferring the entire framework context.
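Routing on protocol identifiers rather than API keys can be modeled in a few lines: the switchboard's only state is a table from (protocol, agent ID) to a delivery handler. This is a hypothetical in-process sketch; a real switchboard would put a network transport behind each handler, and none of these names come from A2A Linker.

```python
from dataclasses import dataclass, field
from typing import Callable

Handler = Callable[[bytes], bytes]


@dataclass
class Switchboard:
    """Routes payloads to registered agents keyed by (protocol, agent_id); stores no payloads."""
    routes: dict[tuple[str, str], Handler] = field(default_factory=dict)

    def register(self, protocol: str, agent_id: str, handler: Handler) -> None:
        """Announce that `agent_id` accepts traffic for `protocol` via `handler`."""
        self.routes[(protocol, agent_id)] = handler

    def route(self, protocol: str, agent_id: str, payload: bytes) -> bytes:
        """Deliver the payload to the matching agent and return its reply."""
        try:
            handler = self.routes[(protocol, agent_id)]
        except KeyError:
            raise LookupError(f"no route for {protocol}:{agent_id}") from None
        return handler(payload)
```

Keying on the protocol as well as the agent lets one node expose an MCP tool endpoint and an A2A coordination endpoint under the same ID without ambiguity.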

The bottleneck in 2026 is often high latency in remote server handshakes. Standardized switchboards reduce this overhead by providing a direct path for protocol-compliant packets. This supports a modular environment where compute is distributed across the most efficient available nodes. You can deploy an AI Agents Dedicated Switchboard to manage these connections instantly and maintain a lean infrastructure.

Zero-Log Terminal Switchboards

Intermediate nodes are the primary vulnerability in agentic networks. A zero-log policy at the switchboard level ensures that the routing layer does not store or inspect agent-to-agent traffic. This prevents sensitive code snippets from accumulating in centralized logs and keeps the communication layer transparent. Code execution remains a temporary state: once the task completes, no permanent record remains on the switchboard. This approach is the only principled alternative to data-intensive monitoring tools.
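In code, "zero-log" means the relay path holds only what it needs to forward. A minimal sketch over Python binary streams (in a deployed switchboard these would be socket streams); it keeps a byte count and nothing else, which is an assumption about what metadata is acceptable.

```python
from typing import BinaryIO


def relay(src: BinaryIO, dst: BinaryIO, chunk_size: int = 64 * 1024) -> int:
    """Copy src to dst in fixed-size chunks and return the number of bytes forwarded.

    Only the running total is kept; no chunk is retained after it is written,
    and nothing about the payload is logged or inspected.
    """
    total = 0
    while chunk := src.read(chunk_size):
        dst.write(chunk)
        total += len(chunk)
    dst.flush()
    return total
```

Streaming in fixed-size chunks also bounds memory, so a large transfer never sits in the switchboard as a single buffer.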

Cross-Machine Execution and Skill Sharing

Distributed architectures allow an agent on Machine A to execute code via a terminal on Machine B. This facilitates the externalization of logic, similar to rubber duck debugging at scale. Instead of a single machine handling all compute, tasks are routed to the node with the necessary environment or permissions. This cross-machine capability removes the need for complex VPN configurations. It allows for a seamless flow of data between local and remote agents while respecting the developer's autonomy and privacy.
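The Machine B side of this pattern can be sketched as an executor that accepts a command string from a remote agent, checks it against an allowlist, and runs it without a shell. The allowlist contents and function name are hypothetical; a production node would also sandbox the process.

```python
import shlex
import subprocess

# Hypothetical allowlist of executables Machine B will run for remote agents.
DEFAULT_ALLOWED = frozenset({"pytest", "ruff"})


def execute(command: str, allowed=DEFAULT_ALLOWED, timeout: float = 60.0) -> dict:
    """Run an allowlisted command without a shell; return exit code and captured output."""
    argv = shlex.split(command)
    if not argv or argv[0] not in allowed:
        return {"exit_code": None, "stdout": "", "stderr": f"command not allowed: {command!r}"}
    # No shell: argv goes straight to the OS, so ';' or '&&' cannot chain extra commands.
    proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout)
    return {"exit_code": proc.returncode, "stdout": proc.stdout, "stderr": proc.stderr}
```

Returning a structured result instead of raw terminal output gives the calling agent on Machine A a stable format to parse.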

Deployment: Configuring an Interoperable Multi-Agent Environment

Deploying AI agent interoperability protocols requires a systematic approach to terminal configuration. Successful implementation moves from tool discovery to secure network initialization. The following steps define the standard deployment pipeline for a multi-agent system:

- Step 1: Identify the MCP servers required for your specific coding tasks. This defines the agent's reach into file systems, databases, or local execution environments.

- Step 2: Initialize the A2A Linker switchboard to manage node connectivity. This establishes the routing layer for all cross-machine traffic.

- Step 3: Configure agents with zero API settings for direct terminal-to-terminal linking. This removes the friction of proprietary key management and complex header configurations.

- Step 4: Verify the zero-log handshake between local and remote nodes. Audit the connection to ensure that intermediate nodes do not store or inspect agent traffic.
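Step 4 can be audited with a canary test: send a message containing a random marker through the switchboard, then search every log location you control for that marker. A sketch with hypothetical function names; which directories to scan is deployment-specific, and the check only covers storage you can see.

```python
import secrets
from pathlib import Path


def make_canary() -> str:
    """A unique marker to embed in a test message sent through the switchboard."""
    return f"canary-{secrets.token_hex(16)}"


def find_canary(canary: str, log_dirs: list[Path]) -> list[Path]:
    """Return every file under log_dirs whose bytes contain the canary."""
    needle = canary.encode()
    hits = []
    for root in log_dirs:
        for path in root.rglob("*"):
            if not path.is_file():
                continue
            try:
                if needle in path.read_bytes():
                    hits.append(path)
            except OSError:
                continue  # unreadable file: skip it rather than abort the audit
    return hits
```

An empty result after the handshake completes is the expected outcome; any hit names the file that persisted agent traffic.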

Initial configuration focuses on the elimination of environmental friction. Terminal-level execution is the priority. Most legacy setups fail because they rely on heavy framework abstractions that introduce latency. By 2026, the industry standard has shifted toward minimalist, protocol-direct connections. This approach ensures that an agent on a local machine can utilize a Skill on a remote server without context fragmentation. The switchboard handles the routing; the protocols handle the logic.

Terminal-based setup is the only way to ensure complete transparency. When you bypass monolithic platforms, you gain full control over the data flow. This is essential for maintaining a zero-log state across the entire network. Every handshake must be authenticated and then discarded. No persistent record of the code execution should remain on the switchboard after the task is finalized. This is a core requirement for secure systems engineering.
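"Authenticated and then discarded" can be illustrated with an HMAC challenge-response in which the switchboard holds each challenge in memory only until it is checked. A sketch assuming a pre-shared key per agent; key distribution and transport encryption (such as TLS) are out of scope, and none of these names come from A2A Linker.

```python
import hashlib
import hmac
import secrets

SESSIONS: dict[str, bytes] = {}  # session id -> pending challenge, held in memory only


def issue_challenge() -> tuple[str, bytes]:
    """Switchboard side: create a one-time challenge for a connecting agent."""
    session_id = secrets.token_hex(8)
    challenge = secrets.token_bytes(32)
    SESSIONS[session_id] = challenge
    return session_id, challenge


def answer(challenge: bytes, shared_key: bytes) -> bytes:
    """Agent side: prove knowledge of the shared key without sending it."""
    return hmac.new(shared_key, challenge, hashlib.sha256).digest()


def verify(session_id: str, response: bytes, shared_key: bytes) -> bool:
    """Switchboard side: check the response, discarding the challenge either way."""
    challenge = SESSIONS.pop(session_id, None)
    if challenge is None:
        return False  # unknown or already-used session
    expected = hmac.new(shared_key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)
```

Popping the challenge before comparison means a captured response cannot be replayed, and hmac.compare_digest avoids leaking timing information.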

CLI Configuration Checklist

Begin the process by installing the A2A Linker client via the official guide. Use the terminal to define agent roles and protocol preferences. You must specify whether a node uses MCP for tool access or A2A for inter-agent coordination. Test the link between your local IDE and remote execution environments to ensure low-latency handshakes. Verification at this stage prevents routing errors during complex multi-node tasks.
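The role and protocol choices described above can be captured in a small typed manifest that fails fast on a mis-declared node. A sketch with hypothetical field names; it is not the A2A Linker client's configuration format.

```python
from dataclasses import dataclass

VALID_PROTOCOLS = frozenset({"mcp", "a2a"})


@dataclass(frozen=True)
class NodeConfig:
    """Hypothetical per-node settings: a role label plus the protocol the node speaks."""
    name: str
    role: str      # e.g. "coder", "tester", "filesystem"
    protocol: str  # "mcp" for tool access, "a2a" for inter-agent coordination
    endpoint: str

    def __post_init__(self) -> None:
        if self.protocol not in VALID_PROTOCOLS:
            raise ValueError(f"{self.name}: protocol must be one of {sorted(VALID_PROTOCOLS)}")


def by_protocol(nodes: list[NodeConfig], protocol: str) -> list[str]:
    """Names of the nodes that declared the given protocol."""
    return [n.name for n in nodes if n.protocol == protocol]
```

Validating at construction time surfaces a routing mistake before any multi-node task starts.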

Scaling the Network

Expanding the network is a modular process. Add worker nodes dynamically using free server connection slots provided by the switchboard. Monitor agent health and protocol compliance through the switchboard interface to ensure all nodes adhere to the A2A v1.0 standard. Maintain a minimalist architecture by avoiding heavy framework dependencies. This lean approach allows for rapid scaling without increasing infrastructure overhead. It keeps the focus on functional utility and privacy.
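Health and compliance monitoring reduces to two checks per node: has it reported recently, and does it advertise the expected protocol version? A sketch assuming each node reports a heartbeat timestamp and a protocolVersion value (recent A2A Agent Cards carry a field of that name); the threshold and version string are assumptions.

```python
import time
from typing import Optional

REQUIRED_VERSION = "1.0"  # assumption: the version string your network standardizes on
STALE_AFTER_S = 30.0      # assumption: seconds without a heartbeat before a node is stale


def audit(nodes: list[dict], now: Optional[float] = None) -> dict[str, list[str]]:
    """Split nodes into stale (no recent heartbeat) and non-compliant (wrong protocol version)."""
    now = time.time() if now is None else now
    report: dict[str, list[str]] = {"stale": [], "non_compliant": []}
    for node in nodes:
        name = node.get("name", "<unnamed>")
        if now - node.get("last_heartbeat", 0.0) > STALE_AFTER_S:
            report["stale"].append(name)
        if node.get("protocolVersion") != REQUIRED_VERSION:
            report["non_compliant"].append(name)
    return report
```

A node missing either field is flagged rather than silently trusted.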

Architectural Sovereignty through Standardized Protocols

- Standardize on MCP and A2A v1.0 to eliminate framework silos and proprietary lock-in.

- Deploy a dedicated switchboard for secure, cross-machine connectivity without manual configuration.

- Enforce a zero-log state to protect sensitive source code and maintain technical privacy during execution.

The transition from monolithic frameworks to modular AI agent interoperability protocols is complete. Efficiency no longer depends on model size but on the integrity of the network layer. A decentralized developer environment requires tools that operate unobtrusively while respecting technical privacy. This minimalist approach ensures that true power lies in open standards rather than proprietary dependencies. You can now establish secure agent connections with A2A Linker to leverage a zero-log architecture and a dedicated switchboard. Free server connection capabilities ensure your agentic swarm scales without unnecessary infrastructure overhead. Build with autonomy and precision.

Frequently Asked Questions

What are the primary AI agent interoperability protocols in 2026?

The current industry standard consists of the Agent-to-Agent (A2A) v1.0 protocol and the Model Context Protocol (MCP). A2A facilitates high-level coordination and state sharing between autonomous nodes, while MCP standardizes the interface between agents and their execution environments. Together, these protocols provide a vendor-neutral framework for secure, cross-machine communication, replacing fragmented, framework-specific legacy methods.

How does A2A Linker facilitate secure agent-to-agent communication?

A2A Linker operates as a dedicated switchboard that routes protocol-compliant packets between disparate machines. It establishes secure handshakes without requiring permanent data storage or complex VPN configurations. The system utilizes a zero-log architecture to ensure that sensitive logic and source code remain private during every interaction. This creates a transparent intermediary that respects the developer's autonomy and system integrity.

Why is the Model Context Protocol (MCP) important for coding agents?

MCP standardizes how agents query file systems, databases, and local terminal environments across disparate servers. It allows a single agent to utilize tools on multiple machines without custom drivers or integration code. By late 2025, the ecosystem supported over 10,000 public MCP servers, providing a massive library of ready-to-use skills. This protocol ensures that tool execution remains modular and model-agnostic.

Can I use different AI models in the same interoperable network?

Yes, interoperability protocols are designed to be model-agnostic. Agents built on different underlying LLMs can collaborate seamlessly as long as they adhere to the A2A v1.0 standard. The switchboard facilitates the handshake and capability negotiation regardless of the specific model's origin. This prevents proprietary lock-in and allows developers to select the most efficient model for each specific sub-task.

What is a zero-log switchboard and why do developers need it?

A zero-log switchboard is a routing intermediary that does not record, store, or inspect agent-to-agent traffic. Developers require this infrastructure to prevent the accumulation of sensitive source code snippets in centralized logs. It ensures that all communication exists only as a temporary state during execution. This approach serves as a principled alternative to data-intensive monitoring tools and permanent record-keeping.

How do I connect a local coding agent to a remote server securely?

You connect nodes by initializing a secure bridge through a dedicated switchboard. The A2A Linker provides cross-machine connectivity with zero API settings, allowing for direct terminal-to-terminal linking. It manages the authentication and handshake process between the local IDE and the remote execution environment. This setup facilitates seamless skill sharing across nodes while maintaining a secure, minimalist architecture.

What is the difference between agentic orchestration and interoperability protocols?

Orchestration defines the task logic and workflow, whereas interoperability protocols define the communication language and transport layer. Orchestrators manage the delegation of tasks within a swarm. Protocols like A2A and MCP ensure that those tasks can be transmitted and understood across different machines and frameworks. Standardized protocols allow disparate orchestrators to function as a cohesive, multi-node unit.

Is there a cost associated with connecting multiple servers via A2A Linker?

A2A Linker provides free server connection capabilities to support scalable, decentralized networks. This allows developers to add worker nodes dynamically without incurring subscription-based overhead for the connection itself. The system focuses on functional utility and open standards, enabling a minimalist architecture that scales according to project requirements. This ensures that infrastructure costs remain predictable and lean.